A set of simple yet useful Python functions that speed up common routines in Deep Learning training/testing pipelines. I found myself repeatedly using some version of these functions over time, so I decided to make them generic and share them publicly.

Last update: July 13th, 2021

Requirements: PyTorch 1.3.1, opencv-python 4.2.0.

I expect that other versions (OpenCV in particular) may require small modifications to some of the functions.

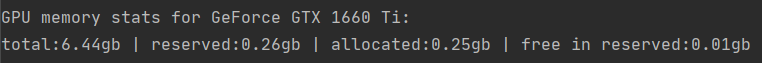

Description: reports your GPU ID and memory usage.

Usage: call it at the beginning of each epoch of a training routine to monitor memory usage. Relies on PyTorch functionality.
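A minimal sketch of such a report, assuming only the `torch.cuda` calls available in PyTorch 1.3.1 (`memory_allocated`, `max_memory_allocated`, `get_device_name`). The name `report_gpu_memory` is hypothetical, and this is not necessarily how the repository's own function works. It returns `None` when PyTorch or a CUDA GPU is unavailable so it can be imported anywhere.

```python
try:
    import torch
except ImportError:  # lets the sketch be imported on machines without PyTorch
    torch = None


def report_gpu_memory(device=None):
    """Print and return the GPU ID and tensor memory usage (MiB); None if no CUDA GPU."""
    if torch is None or not torch.cuda.is_available():
        print("No CUDA-capable GPU available.")
        return None
    if device is None:
        device = torch.cuda.current_device()
    mib = 1024 ** 2
    stats = {
        "gpu_id": device,
        "name": torch.cuda.get_device_name(device),
        # Memory currently occupied by tensors on this device
        "allocated_mib": torch.cuda.memory_allocated(device) / mib,
        # Peak tensor memory since the start of the program
        "max_allocated_mib": torch.cuda.max_memory_allocated(device) / mib,
    }
    print(f"GPU {stats['gpu_id']} ({stats['name']}): "
          f"{stats['allocated_mib']:.1f} MiB allocated, "
          f"{stats['max_allocated_mib']:.1f} MiB peak")
    return stats
```

Calling `report_gpu_memory()` as the first statement inside the epoch loop gives one line per epoch. These figures only cover tensors tracked by PyTorch's caching allocator, so `nvidia-smi` will typically report a higher number.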

Sample:

Description: calculates the pixel-level intersection-over-union (IoU) between a prediction image and a ground truth mask.

Usage: instance and semantic segmentation frameworks perform pixel-level predictions for two or more classes. Use this function to compare such predictions against a ground-truth mask. Note that each class's prediction and its respective mask have to be evaluated individually.
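A minimal NumPy sketch of the per-class mask IoU, assuming both inputs are binary masks of the same shape. The name `mask_iou` is hypothetical, and treating two empty masks as a perfect match (IoU = 1) is an assumption of this sketch. For multi-class label maps, each class is compared separately by building boolean masks such as `pred_labels == c`.

```python
import numpy as np


def mask_iou(prediction, ground_truth):
    """Pixel-level IoU between two binary masks of identical shape (one class at a time)."""
    pred = np.asarray(prediction).astype(bool)
    gt = np.asarray(ground_truth).astype(bool)
    if pred.shape != gt.shape:
        raise ValueError(f"Shape mismatch: {pred.shape} vs {gt.shape}")
    intersection = np.logical_and(pred, gt).sum()
    union = np.logical_or(pred, gt).sum()
    # Assumption: two empty masks are considered a perfect match
    return 1.0 if union == 0 else float(intersection) / float(union)
```

For example, with label maps `pred_labels` and `gt_labels`, `{c: mask_iou(pred_labels == c, gt_labels == c) for c in range(num_classes)}` yields one IoU per class.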

Sample usage

Description: calculates the mean squared error (MSE) between two images.

Usage: useful (particularly in combination with SSIM) for evaluating the results of reconstruction/denoising/enhancement methods against a ground-truth reference.
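A minimal sketch of the MSE computation in NumPy; the name `mse` is hypothetical. Converting to `float64` before subtracting matters: `uint8` images would otherwise wrap around (e.g. `0 - 255` becomes `1`) and give a wrong error.

```python
import numpy as np


def mse(image_a, image_b):
    """Mean squared error between two images of identical shape."""
    # Cast to float first so uint8 subtraction cannot wrap around
    a = np.asarray(image_a, dtype=np.float64)
    b = np.asarray(image_b, dtype=np.float64)
    if a.shape != b.shape:
        raise ValueError(f"Shape mismatch: {a.shape} vs {b.shape}")
    return float(np.mean((a - b) ** 2))
```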

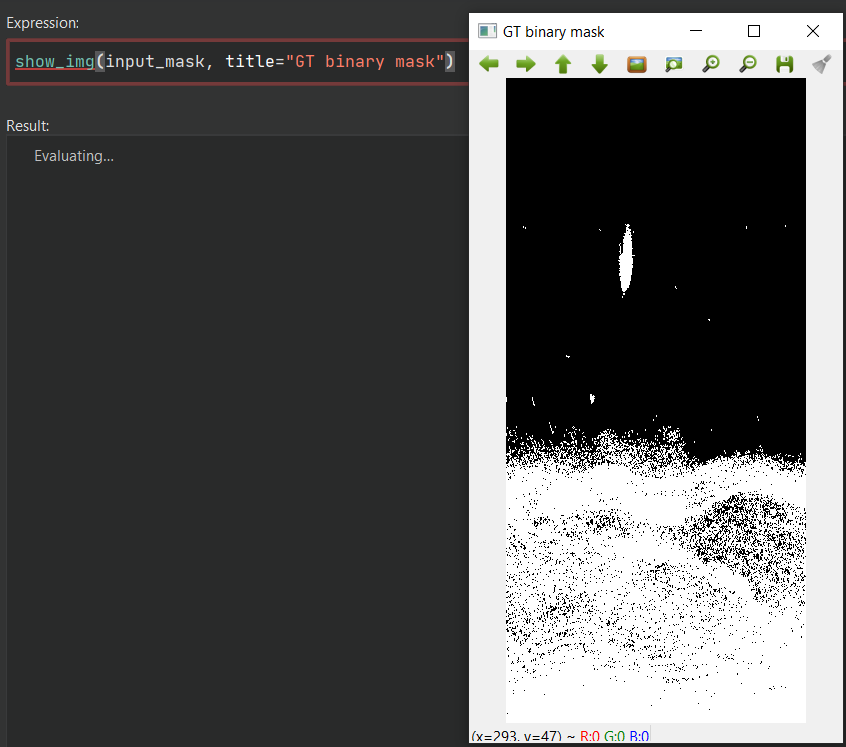

Description: catch-all display function for images of different formats and containers (e.g., ndarray, Tensor) using OpenCV.

Usage: given the variety of data formats, value ranges and containers we often work with when training vision systems, I created a function that can be used generically to display the content of most images.
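A minimal sketch of the idea, split into a conversion step and a display step. The names `to_displayable` and `show_image` are hypothetical, and the heuristics are assumptions: tensors are detected by duck typing (a `detach` method), 1- or 3-channel channels-first arrays are transposed, floats in [0, 1] are scaled by 255 and any other range is min-max normalized. No RGB/BGR swap is performed, so RGB images will show with red and blue exchanged in OpenCV.

```python
import numpy as np


def to_displayable(image):
    """Convert an ndarray or torch.Tensor image to an HxW or HxWxC uint8 array."""
    if hasattr(image, "detach"):  # torch.Tensor (duck typing, no torch import needed)
        image = image.detach().cpu().numpy()
    img = np.asarray(image)
    if img.ndim == 4 and img.shape[0] == 1:  # drop a batch dimension of size 1
        img = img[0]
    # Channels-first (C, H, W) with 1 or 3 channels -> channels-last (H, W, C)
    if img.ndim == 3 and img.shape[0] in (1, 3) and img.shape[-1] not in (1, 3):
        img = np.transpose(img, (1, 2, 0))
    if img.ndim == 3 and img.shape[-1] == 1:  # single channel -> plain 2-D image
        img = img[..., 0]
    if img.dtype == np.bool_:
        img = img.astype(np.uint8) * 255
    elif img.dtype != np.uint8:
        img = img.astype(np.float64)
        lo, hi = img.min(), img.max()
        if lo >= 0.0 and hi <= 1.0:  # assume a [0, 1] range
            img = img * 255.0
        elif hi > lo:  # any other range (e.g. [-1, 1]): min-max normalize
            img = (img - lo) / (hi - lo) * 255.0
        else:  # constant image outside [0, 1]
            img = np.zeros_like(img)
        img = np.clip(np.round(img), 0, 255).astype(np.uint8)
    return img


def show_image(image, window_name="image", wait_ms=0):
    """Display any supported image with OpenCV; wait_ms=0 waits for a key press."""
    import cv2  # lazy import so the conversion helper works without OpenCV
    cv2.imshow(window_name, to_displayable(image))
    cv2.waitKey(wait_ms)
    cv2.destroyWindow(window_name)
```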

Sample:

Description: filters detectron2's bounding-box-based detections.

Usage: if you work with the object detection frameworks offered by detectron2, this function can help you filter their detection results by desired classes and detection thresholds, as well as by a valid y-axis range (so that you can ignore detections from certain parts of the image).
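A minimal sketch of such a filter, assuming the detections have already been pulled out of detectron2's `Instances` object as NumPy arrays, for example via `instances.pred_boxes.tensor.numpy()`, `instances.scores.numpy()` and `instances.pred_classes.numpy()` after `instances.to("cpu")`. The name `filter_detections` is hypothetical, and requiring a box to lie entirely inside `y_range` is an assumption; testing the box centre would be an equally reasonable rule.

```python
import numpy as np


def filter_detections(boxes, scores, classes, keep_classes=None,
                      score_threshold=0.5, y_range=None):
    """Keep detections of the desired classes, above a threshold, inside a y-axis band.

    boxes: (N, 4) [x1, y1, x2, y2]; scores: (N,); classes: (N,) integer class ids.
    y_range: optional (y_min, y_max); assumption: the whole box must lie inside it.
    """
    boxes = np.asarray(boxes, dtype=float).reshape(-1, 4)
    scores = np.asarray(scores, dtype=float)
    classes = np.asarray(classes)
    keep = scores >= score_threshold
    if keep_classes is not None:
        keep &= np.isin(classes, list(keep_classes))
    if y_range is not None:
        y_min, y_max = y_range
        keep &= (boxes[:, 1] >= y_min) & (boxes[:, 3] <= y_max)
    return boxes[keep], scores[keep], classes[keep]
```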

Description: concatenates (merges) outputs composed of multiple bounding boxes into a single box.

Usage: use it to combine multiple overlapping bounding boxes (often seen in the output of object detectors even after NMS). Note that the merged result takes the score and class of the highest-scoring detection; thus, this function is recommended when only one class is present, or when it is reasonable to merge detections of distinct classes.
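A minimal pure-Python sketch of the merging behaviour described above, assuming each detection is an `(x1, y1, x2, y2, score, class_id)` tuple. The names `merge_boxes`, `boxes_overlap` and `merge_overlapping` are hypothetical. The merged box is the smallest box enclosing all inputs and inherits the score and class of the highest-scoring one; boxes that merely touch at an edge are not treated as overlapping.

```python
def merge_boxes(detections):
    """Merge detections into one enclosing box with the best detection's score and class."""
    if not detections:
        raise ValueError("need at least one detection")
    best = max(detections, key=lambda d: d[4])
    x1 = min(d[0] for d in detections)
    y1 = min(d[1] for d in detections)
    x2 = max(d[2] for d in detections)
    y2 = max(d[3] for d in detections)
    return (x1, y1, x2, y2, best[4], best[5])


def boxes_overlap(a, b):
    """True if two boxes share a region of positive area (touching edges do not count)."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def merge_overlapping(detections):
    """Repeatedly merge any two overlapping detections until no overlaps remain."""
    merged = list(detections)
    i = 0
    while i < len(merged):
        for j in range(i + 1, len(merged)):
            if boxes_overlap(merged[i], merged[j]):
                merged[i] = merge_boxes([merged[i], merged[j]])
                del merged[j]
                break
        else:
            i += 1  # merged[i] overlaps nothing after it; move on
            continue
        i = 0  # merged[i] grew and may now overlap earlier boxes; rescan
    return merged
```

Rescanning after every merge keeps the logic simple at the cost of quadratic-or-worse time, which is usually fine for the handful of boxes left after NMS.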

Sample usage

Description: plots all the detection bounding boxes found in a given image.

Usage: useful when debugging the multiple detections of an object detector. Detections of different classes can be distinguished with the use of different colours.
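A minimal sketch of per-class box plotting. To stay dependency-light it draws outlines with plain NumPy slicing; with OpenCV, `cv2.rectangle(canvas, (x1, y1), (x2, y2), colour, thickness)` is the more usual call. The names `draw_boxes` and `CLASS_COLOURS` and the palette values are hypothetical, and colours are given in BGR order to match OpenCV.

```python
import numpy as np

# Hypothetical class-id -> BGR colour palette; unknown classes are drawn in white.
CLASS_COLOURS = {0: (0, 255, 0), 1: (0, 0, 255), 2: (255, 0, 0)}


def _clip(value, upper):
    """Clamp a coordinate to [0, upper] and convert it to an int pixel index."""
    return int(min(max(value, 0), upper))


def draw_boxes(image, boxes, classes, thickness=2):
    """Return a copy of an HxWx3 uint8 image with one outline per (x1, y1, x2, y2) box."""
    canvas = image.copy()
    h, w = canvas.shape[:2]
    t = thickness
    for (x1, y1, x2, y2), cls in zip(boxes, classes):
        x1, x2 = sorted((_clip(x1, w - 1), _clip(x2, w - 1)))
        y1, y2 = sorted((_clip(y1, h - 1), _clip(y2, h - 1)))
        colour = CLASS_COLOURS.get(int(cls), (255, 255, 255))
        canvas[y1:y1 + t, x1:x2 + 1] = colour                  # top edge
        canvas[max(y2 - t + 1, 0):y2 + 1, x1:x2 + 1] = colour  # bottom edge
        canvas[y1:y2 + 1, x1:x1 + t] = colour                  # left edge
        canvas[y1:y2 + 1, max(x2 - t + 1, 0):x2 + 1] = colour  # right edge
    return canvas
```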

Sample usage

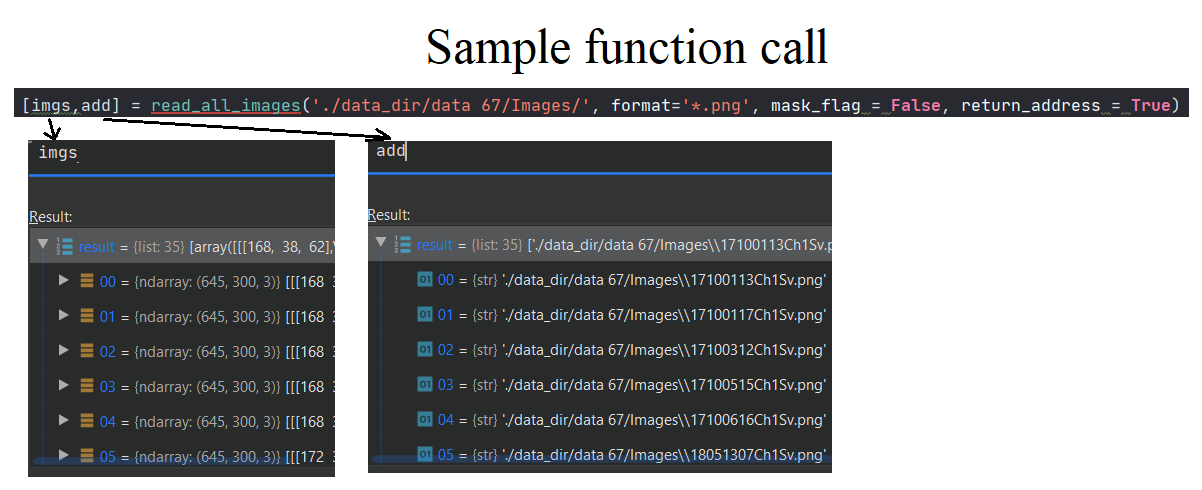

Description: reads all image files of a given format from a folder.

Usage: as an important step in data loading, this function reads all images of a given format from a directory.
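A minimal sketch using `pathlib` to find the files and `cv2.imread` to load them. The names `list_images` and `read_images` are hypothetical, and matching the extension case-insensitively (so `.PNG` counts as `.png`) is an assumption. `cv2.imread` returns `None` rather than raising on unreadable files, so those are skipped.

```python
from pathlib import Path


def list_images(folder, extension=".png"):
    """Return the sorted paths of all files in `folder` with the given extension."""
    ext = extension.lower()
    if not ext.startswith("."):
        ext = "." + ext
    return sorted(p for p in Path(folder).iterdir()
                  if p.is_file() and p.suffix.lower() == ext)


def read_images(folder, extension=".png"):
    """Read every matching image with OpenCV (BGR, uint8), skipping unreadable files."""
    import cv2  # lazy import so list_images works without OpenCV
    images = []
    for path in list_images(folder, extension):
        img = cv2.imread(str(path))
        if img is not None:  # cv2.imread signals failure by returning None
            images.append(img)
    return images
```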

Sample:

Description: checks if a directory exists and creates it if it doesn't.

Usage: use it to create output directories for different experiments, among others.
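A minimal sketch built on `os.makedirs`, which also creates missing parent directories. The name `ensure_dir` and the boolean return value are hypothetical; `exist_ok=True` avoids an error if another process creates the directory between the check and the call.

```python
import os


def ensure_dir(path):
    """Create `path` (and any missing parents) if needed; return True if it was created."""
    if os.path.isdir(path):
        return False
    os.makedirs(path, exist_ok=True)
    return True
```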

Description: creates a text file with generic metadata from DL models.

Usage: given the large number of hyperparameters involved in DL-based training/testing, I created this function to organize the most commonly used pieces of metadata in a single text file.
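A minimal sketch of a metadata writer that accepts arbitrary keyword arguments and writes one `key: value` line per entry after a timestamp. The name `write_metadata` and the field names in the example are hypothetical; lists and dicts are JSON-encoded so they stay on one readable line.

```python
import datetime
import json


def write_metadata(path, **metadata):
    """Write a timestamp followed by one `key: value` line per metadata entry."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(f"created: {datetime.datetime.now().isoformat(timespec='seconds')}\n")
        for key, value in metadata.items():  # keyword order is preserved
            if isinstance(value, (list, tuple, dict)):
                value = json.dumps(value)  # keep containers on a single line
            f.write(f"{key}: {value}\n")
```

For example, `write_metadata("output/exp1/metadata.txt", model="resnet50", learning_rate=0.001, milestones=[10, 20])` records the run's settings next to its outputs.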

Sample usage

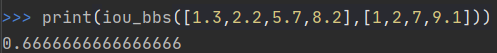

Description: returns the IoU between two bounding boxes (third-party code by Adrian Rosebrock, 2016).

Usage: IoU is a key metric when post-processing and evaluating object detection and instance segmentation systems. This function calculates the IoU between a pair of bounding boxes in this context.
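A minimal sketch of box IoU for axis-aligned `(x1, y1, x2, y2)` boxes in continuous coordinates. The name `bbox_iou` is hypothetical and this is not Rosebrock's code; some implementations instead add `+ 1` to widths and heights to treat coordinates as inclusive pixel indices, which gives slightly different values for small boxes.

```python
def bbox_iou(box_a, box_b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2) with x2 > x1 and y2 > y1."""
    # Corners of the intersection rectangle
    x_left = max(box_a[0], box_b[0])
    y_top = max(box_a[1], box_b[1])
    x_right = min(box_a[2], box_b[2])
    y_bottom = min(box_a[3], box_b[3])
    # Negative extents mean the boxes do not intersect
    inter = max(0, x_right - x_left) * max(0, y_bottom - y_top)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0
```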

Sample: