A C++ program that takes a .c or .cpp source file as input and writes the tokens it contains to a file. It also creates a symbol table for the program.

- Lexical analysis is the process of converting a sequence of characters into a sequence of lexical tokens.

- A program that performs lexical analysis is called a lexical analyzer.

C++11 or higher is required to compile the code. Users on Debian-based Linux systems can run the following command to install the latest version of the C++ compiler:

sudo apt-get install g++

`cd` to this project's directory.

Compile with C++11:

- Method 1: Run the following command in a terminal:

  `g++ -std=c++11 FSM.cpp -o FSM`

- Method 2: Run the `make` command if Makefiles are supported on your system.

Run the executable, passing the input file on standard input as follows:

./FSM < inputProgram

- The following two files will be generated:

  - `pa_1.out`: This file contains a description of the tokens in the following format: `<token_id> <token>`
  - `symbol_table_1.out`: This file contains identifiers and keywords in the following format:
    - `<token> 1` if the token is an identifier
    - `<token> 0` if the token is a keyword

The tokens are identified as defined in the `def.h` file.
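To make the `symbol_table_1.out` format concrete, here is a small sketch of a reader that splits its entries into identifiers and keywords. It is not part of the project: `SymbolTable` and `parseSymbolTable` are hypothetical names, and the sketch assumes every entry is a whitespace-separated `<token> <flag>` pair.

```cpp
#include <istream>
#include <sstream>  // std::istringstream, handy for trying the parser on in-memory text
#include <string>
#include <vector>

// Hypothetical container for the two kinds of entries in symbol_table_1.out.
struct SymbolTable {
    std::vector<std::string> identifiers;  // entries flagged with 1
    std::vector<std::string> keywords;     // entries flagged with 0
};

// Reads "<token> <flag>" pairs until the stream ends or a pair is malformed.
SymbolTable parseSymbolTable(std::istream& in) {
    SymbolTable table;
    std::string token;
    int flag = 0;
    while (in >> token >> flag) {
        if (flag == 1) {
            table.identifiers.push_back(token);
        } else {
            table.keywords.push_back(token);
        }
    }
    return table;
}
```

The function accepts any `std::istream`, so it can be fed a `std::ifstream` opened on `symbol_table_1.out` or a `std::istringstream` holding a few sample lines.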

- The input file is read character by character and stored in a buffer string.

- The string is preprocessed using the `removeComments()` routine to remove comments enclosed between `/*` and `*/`.

- The `lexicalAnalysis` function then performs the tokenization.

- We use `lexemeBegin` and `forwardPtr`, which keep track of the index to start scanning from and the end index of the token currently under consideration, respectively.

- In each iteration, we find the longest token that starts at the index pointed to by `lexemeBegin`. We skip the iteration if the character pointed to by `lexemeBegin` is whitespace, which is checked using the `isWhiteSpace()` routine.

- If a token is found, we write it in the required format to the `pa_1.out` file and update `symbol_table_1.out` if the token is an identifier or a keyword.

- If no token is found, we call `exit(1)`, denoting a failure in analysing the code.
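The scanning loop described above can be sketched roughly as follows. This is a minimal illustration of longest-match scanning, not the project's actual code: `classify` is a stand-in for `matchToken()` that recognises only a tiny subset of C, the `SK_*` token ids are placeholders (the real ids are defined in `def.h`), and the sketch throws an exception where the analyzer calls `exit(1)`.

```cpp
#include <cctype>
#include <cstddef>
#include <stdexcept>
#include <string>
#include <utility>
#include <vector>

// Placeholder token ids for this sketch only; the real ones live in def.h.
enum SketchTokenId { SK_IDENTIFIER = 1, SK_INTEGER = 2, SK_OPERATOR = 3 };

// Returns a token id if `s` is a complete token, or -1 otherwise.
int classify(const std::string& s) {
    if (s.empty()) return -1;
    bool allDigits = true, identChars = true;
    for (char c : s) {
        unsigned char uc = static_cast<unsigned char>(c);
        if (!std::isdigit(uc)) allDigits = false;
        if (!(std::isalnum(uc) || c == '_')) identChars = false;
    }
    if (allDigits) return SK_INTEGER;
    if (identChars && !std::isdigit(static_cast<unsigned char>(s[0]))) return SK_IDENTIFIER;
    static const std::vector<std::string> ops = {"+", "++", "+=", "=", "==", "<", "<=", ";"};
    for (const std::string& op : ops) {
        if (s == op) return SK_OPERATOR;
    }
    return -1;
}

// For each lexemeBegin, try every end index and keep the furthest one
// that still forms a token (the longest match).
std::vector<std::pair<int, std::string>> scan(const std::string& buffer) {
    std::vector<std::pair<int, std::string>> tokens;
    std::size_t lexemeBegin = 0;
    while (lexemeBegin < buffer.size()) {
        if (std::isspace(static_cast<unsigned char>(buffer[lexemeBegin]))) {
            ++lexemeBegin;  // whitespace never starts a token
            continue;
        }
        int bestId = -1;
        std::size_t bestEnd = lexemeBegin;
        for (std::size_t forwardPtr = lexemeBegin; forwardPtr < buffer.size(); ++forwardPtr) {
            int id = classify(buffer.substr(lexemeBegin, forwardPtr - lexemeBegin + 1));
            if (id != -1) {
                bestId = id;
                bestEnd = forwardPtr;
            }
        }
        if (bestId == -1) {
            throw std::runtime_error("no token at index " + std::to_string(lexemeBegin));
        }
        tokens.emplace_back(bestId, buffer.substr(lexemeBegin, bestEnd - lexemeBegin + 1));
        lexemeBegin = bestEnd + 1;  // resume right after the matched lexeme
    }
    return tokens;
}
```

Calling `scan("i++ < 10")` yields the lexemes `i`, `++`, `<` and `10`; note that `++` is preferred over a single `+` because it is the longer match. Trying every end index up to the end of the buffer keeps the sketch short at the cost of extra work per token.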

int isKeyword(const string&);

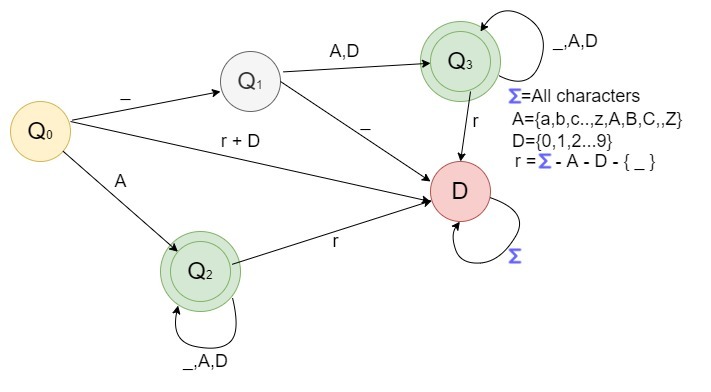

int isIdentifier(const string&);

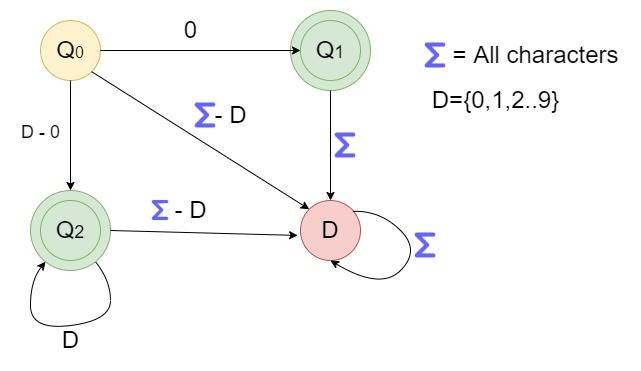

int isIntegerConstant(const string&);

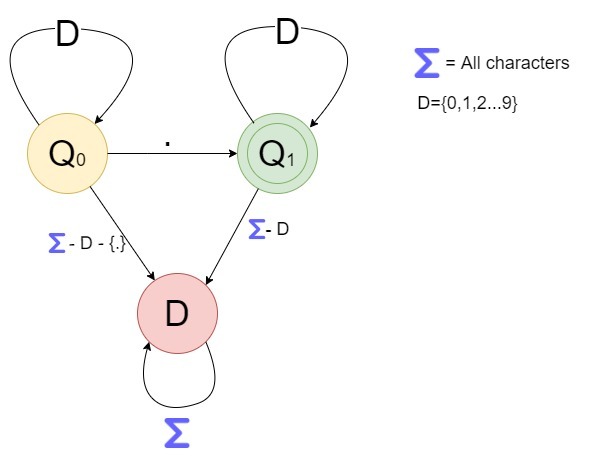

int isFloatingConstant(const string&);

int isArithmeticOperator(const string&);

int isAssignmentOperator(const string&);

int isRelationalOperator(const string&);

int isSpecialSymbol(const string&);

int matchToken(int, int); // returns token_id if token is found in the substring [L,R] else returns -1

bool isWhiteSpace(char);

void lexicalAnalysis();

void removeComments();

All the functions are self-explanatory.
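Since only the signatures are listed, here is a rough sketch of how a routine like `removeComments()` could work. The real function takes no arguments; this hypothetical `stripBlockComments` takes and returns a string to stay self-contained. It is an assumption of the sketch that each comment is replaced by a single space, that an unterminated comment swallows the rest of the input, and that string literals need no special handling.

```cpp
#include <cstddef>
#include <string>

// Sketch only: returns a copy of `src` with every /* ... */ comment removed.
std::string stripBlockComments(const std::string& src) {
    std::string out;
    std::size_t i = 0;
    while (i < src.size()) {
        if (src.compare(i, 2, "/*") == 0) {
            // Assumption: leave a separator so neighbouring tokens do not merge.
            out += ' ';
            std::size_t close = src.find("*/", i + 2);
            if (close == std::string::npos) {
                break;  // Assumption: an unterminated comment runs to the end of input.
            }
            i = close + 2;  // continue after the closing "*/"
        } else {
            out += src[i];
            ++i;
        }
    }
    return out;
}
```

For example, `stripBlockComments("a/*b*/c")` returns `"a c"`, so the two identifiers stay separate tokens for the scanning stage.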

For the given `input.in` file, the analyzer generates the following two files:

symbol_table_1.out

int 0

main 1

x 1

i 1

ans 1

for 0

return 0

n 1

pa_1.out

423 int

300 main

418 (

419 )

416 {

423 int

300 x

311 =

301 4

420 ,

300 i

311 =

301 0

420 ,

300 ans

311 =

301 0

421 ;

430 for

418 (

300 i

311 =

301 0

421 ;

300 i

307 <

300 x

421 ;

300 i

304 ++

419 )

300 ans

316 +=

301 4

421 ;

433 return

300 ans

421 ;

417 }

430 for

418 (

423 int

300 i

311 =

301 0

421 ;

300 i

307 <

300 n

421 ;

300 i

304 ++

419 )

- Sumit Kumar Prajapati (B20CS074) - prajapati.3@iitj.ac.in

- Pratul Singh (B20CS095) - singh.142@iitj.ac.in