
Architecture Overview

The MobiledgeX software platform is a distributed set of microservices that allow operators to manage their cloudlet infrastructure, and developers to deploy applications across those cloudlets. This document describes what those services are, how they are distributed, what functionality they provide, and how they communicate with each other. It is intended as a broad introduction to the entire software platform, and touches lightly on all areas, but avoids diving deep into any single area.

This document assumes the reader is already familiar with the features offered by the MobiledgeX platform as presented in the features documentation, as well as terminology used in the context of the MobiledgeX platform. This document does not discuss how particular features are implemented.

For historical purposes, it may be useful to be aware of the following design principles we adhere to when developing the MobiledgeX platform.

Persistent state is relegated to existing production quality databases, while compute microservices remain stateless (but may cache state). This allows compute microservices to scale easily while leveraging mature existing solutions for persistent data storage and backup.

While leveraging standard protocols like Protocol Buffers, gRPC, and REST/JSON, keep communication under our control and common between all our services. This allows us to control how distributed services interact with each other, and program in monitoring, logging, and debugging.

Separate microservices offer the advantage of a clear separation of data, memory, locking, and functionality via a well-defined API. To avoid the disadvantage of increased complexity when implementing and tracing processes distributed across a number of different microservices, aim to split functionality into microservices only when there is a clear reason to do so due to locality, functional layering, or separation of data.

The MobiledgeX platform is written almost entirely in Go, leveraging Protocol Buffers and gRPC, and using common technologies like JSON, REST, and TLS. Production-level open source code and projects are used wherever possible, especially for third-party services like databases. Ansible and Chef are used to deploy the platform services.

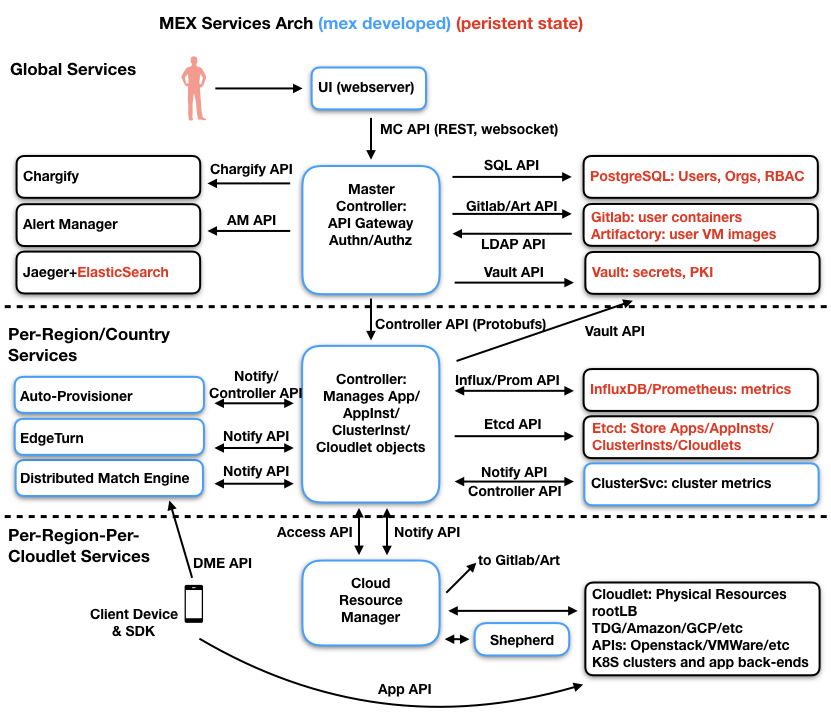

As seen in the above architecture diagram, the MobiledgeX platform is divided into three tiers: a global tier, a per-region or per-country tier, and a per-cloudlet tier. Separating services into three tiers allows for easy scaling, separation of data and privacy, and better security.

Blue boxes in the diagram are services developed by MobiledgeX, while black boxes represent third party or open-source software. Red text indicates a service that provides persistent storage. All MobiledgeX services are horizontally scalable unless otherwise specified.

The web server provides a graphical user interface (GUI) to the user. There is no platform logic in the webserver, but it arranges and organizes data in a way that makes it easy for the user to view and manage their data. The web server uses the Master Controller’s REST API to talk to the Master Controller.

The Master Controller (MC) serves as the user’s central entry point into the MobiledgeX platform. It performs user authentication, authorization (via role-based access control), and forwards requests to the regional Controllers. The MC API is a simple REST (JSON) based API, which is accessible directly by the user, used by the UI web server, and used by a command line tool called mcctl.
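The authorization step can be pictured with a minimal role-based access control sketch. The role name, resource and action strings, and all type and function names below are hypothetical; the source only states that the MC authorizes requests via RBAC before forwarding them.

```go
package main

import "fmt"

// Permission pairs a resource with an action (hypothetical names).
type Permission struct {
	Resource string
	Action   string
}

// subject identifies a user within one organization.
type subject struct {
	user string
	org  string
}

// RBAC holds role assignments and the permissions granted to each role.
type RBAC struct {
	roleOf map[subject]string
	grants map[string]map[Permission]bool
}

func NewRBAC() *RBAC {
	return &RBAC{
		roleOf: make(map[subject]string),
		grants: make(map[string]map[Permission]bool),
	}
}

// Grant allows every holder of role to perform p.
func (r *RBAC) Grant(role string, p Permission) {
	if r.grants[role] == nil {
		r.grants[role] = make(map[Permission]bool)
	}
	r.grants[role][p] = true
}

// AssignRole gives user the named role within org.
func (r *RBAC) AssignRole(user, org, role string) {
	r.roleOf[subject{user, org}] = role
}

// Authorize reports whether user may perform p on objects owned by org.
// Unknown users, organizations, and roles are denied by default.
func (r *RBAC) Authorize(user, org string, p Permission) bool {
	role, ok := r.roleOf[subject{user, org}]
	if !ok {
		return false
	}
	return r.grants[role][p]
}

func main() {
	rbac := NewRBAC()
	manage := Permission{Resource: "apps", Action: "manage"}
	rbac.Grant("manager", manage)
	rbac.AssignRole("alice", "acme", "manager")

	fmt.Println(rbac.Authorize("alice", "acme", manage))  // allowed
	fmt.Println(rbac.Authorize("alice", "other", manage)) // denied: no role in that org
}
```

In this sketch a request would only be forwarded to a regional Controller after `Authorize` returns true, which matches the division of labor described above: the Controller itself performs no authorization.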

User account data is stored in a standard PostgreSQL database that has its own redundancy and back-up schemes. Object data like applications, clusters, and cloudlets is not stored at the global tier; it is stored at the regional tier in Etcd.

Users’ container and VM images are stored in Gitlab and Artifactory. These images are then cached on cloudlets that want to deploy them as user Applications. Users upload images directly to Gitlab and Artifactory, but otherwise do not interact with them directly. Account verification is via an LDAP service provided by the MC. Compiled SDK libraries are also publicly available from Artifactory.

Vault is used for secret storage. Vault stores all data encrypted, with various different roles for different levels of data access. Vault also provides TLS certificates for mutual TLS authentication between services. This is explained in detail in the Security section.

The MobiledgeX admin registers new regional Controllers with the MC, providing the HTTP address of the region’s Controller cluster. Once a region is registered, the MC can expose it to the user for Application deployment.

Alerts that are generated at the MC, or are pushed up from the regional Controllers, are then forwarded to the Prometheus Alert Manager which then handles sending emails, Slack messages, or Pagerduty messages to the user. The alert manager configuration files are handled by a small alertmanager sidecar service.

Billing events recorded at the Controller are inserted into InfluxDB. The MC then pulls these events, converts them into Chargify API calls, and pushes them to Chargify. Chargify then generates and sends a billing invoice to the user. Unlike other 3rd party services shown here, Chargify is a cloud service and not a MobiledgeX deployed service.

Jaeger and ElasticSearch run globally for internal logging and tracing, and all services can push directly to Jaeger. These are discussed further under the Logging and Debugging section.

Each region has a set of regional services. A region may comprise a geographical area (like the European Union), or a country (like Germany), a section of a country (like United States West), or just a single operator, depending on the number of cloudlets, data privacy requirements, etc. Application data for that region is stored and stays in that region, in order to comply with any regional data privacy and security requirements.

The Controller manages all application, cluster, and cloudlet data. It uses Protocol Buffers to define objects and gRPC to expose an API that allows the caller to create, read, update, and delete (CRUD) all the objects it manages. It manages all the inter-object dependencies, where objects include app definitions, app instances, cluster instances, cloudlets, and various policies. The MC acts as a client, calling the Controller’s gRPC APIs on behalf of the user. The Controller performs no authorization itself and relies on the MC for that.
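To illustrate what managing inter-object dependencies can mean in practice, here is a minimal in-memory sketch in which an app definition cannot be deleted while app instances still reference it, and an app instance cannot be created for a missing app definition. The key fields, error values, and `Store` type are hypothetical, and the real Controller persists objects in Etcd rather than in maps.

```go
package main

import (
	"errors"
	"fmt"
)

// AppKey and AppInstKey are simplified, hypothetical object keys.
type AppKey struct {
	Org, Name, Version string
}

type AppInstKey struct {
	App      AppKey
	Cloudlet string
}

var (
	ErrExists   = errors.New("object already exists")
	ErrNotFound = errors.New("object not found")
	ErrInUse    = errors.New("object is still referenced")
)

// Store keeps objects in memory and enforces App -> AppInst dependencies.
type Store struct {
	apps     map[AppKey]bool
	appInsts map[AppInstKey]bool
}

func NewStore() *Store {
	return &Store{apps: make(map[AppKey]bool), appInsts: make(map[AppInstKey]bool)}
}

func (s *Store) CreateApp(k AppKey) error {
	if s.apps[k] {
		return ErrExists
	}
	s.apps[k] = true
	return nil
}

// CreateAppInst requires the referenced app definition to exist.
func (s *Store) CreateAppInst(k AppInstKey) error {
	if !s.apps[k.App] {
		return ErrNotFound
	}
	if s.appInsts[k] {
		return ErrExists
	}
	s.appInsts[k] = true
	return nil
}

func (s *Store) DeleteAppInst(k AppInstKey) error {
	if !s.appInsts[k] {
		return ErrNotFound
	}
	delete(s.appInsts, k)
	return nil
}

// DeleteApp refuses to remove an app definition that instances depend on.
func (s *Store) DeleteApp(k AppKey) error {
	if !s.apps[k] {
		return ErrNotFound
	}
	for inst := range s.appInsts {
		if inst.App == k {
			return ErrInUse
		}
	}
	delete(s.apps, k)
	return nil
}

func main() {
	s := NewStore()
	app := AppKey{Org: "acme", Name: "game", Version: "1.0"}
	inst := AppInstKey{App: app, Cloudlet: "berlin-1"}

	fmt.Println(s.CreateApp(app))
	fmt.Println(s.CreateAppInst(inst))
	fmt.Println(s.DeleteApp(app)) // refused while the instance exists
	fmt.Println(s.DeleteAppInst(inst))
	fmt.Println(s.DeleteApp(app))
}
```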

All object data is stored in a local Etcd cluster, which uses 3 nodes for redundancy. Only the Controller talks directly to Etcd.

Metrics are stored in an Influx time-series database.

The Distributed Matching Engine handles requests from client devices, including finding the most appropriate application back-end for the client to connect to. Often these requests come from a MobiledgeX SDK integrated into the client, but may also come directly from the client. Once the client has received the best back-end, it can connect directly to the back-end running on a cloudlet.
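As a rough illustration of back-end selection, the sketch below picks the closest healthy app instance by great-circle distance. Distance is only one plausible criterion; the source does not specify how the DME ranks back-ends, and the `Location`, `AppInst`, and `FindClosest` names are hypothetical.

```go
package main

import (
	"fmt"
	"math"
)

// Location is a GPS coordinate in degrees.
type Location struct {
	Lat, Lon float64
}

// AppInst is a simplified, hypothetical view of an application instance.
type AppInst struct {
	Cloudlet string
	Loc      Location
	Healthy  bool
}

// distanceKm returns the great-circle distance using the haversine formula.
func distanceKm(a, b Location) float64 {
	const earthRadiusKm = 6371.0
	toRad := func(deg float64) float64 { return deg * math.Pi / 180 }
	dLat := toRad(b.Lat - a.Lat)
	dLon := toRad(b.Lon - a.Lon)
	h := math.Sin(dLat/2)*math.Sin(dLat/2) +
		math.Cos(toRad(a.Lat))*math.Cos(toRad(b.Lat))*math.Sin(dLon/2)*math.Sin(dLon/2)
	return 2 * earthRadiusKm * math.Asin(math.Sqrt(h))
}

// FindClosest returns the nearest healthy instance, or false if none exists.
func FindClosest(client Location, insts []AppInst) (AppInst, bool) {
	var best AppInst
	bestDist := math.Inf(1)
	found := false
	for _, inst := range insts {
		if !inst.Healthy {
			continue // never hand out an unhealthy back-end
		}
		if d := distanceKm(client, inst.Loc); d < bestDist {
			best, bestDist, found = inst, d, true
		}
	}
	return best, found
}

func main() {
	client := Location{Lat: 52.52, Lon: 13.40} // a device in Berlin
	insts := []AppInst{
		{Cloudlet: "berlin-1", Loc: Location{Lat: 52.52, Lon: 13.40}, Healthy: false},
		{Cloudlet: "hamburg-1", Loc: Location{Lat: 53.55, Lon: 9.99}, Healthy: true},
		{Cloudlet: "munich-1", Loc: Location{Lat: 48.14, Lon: 11.58}, Healthy: true},
	}
	if inst, ok := FindClosest(client, insts); ok {
		fmt.Println("connect to", inst.Cloudlet)
	}
}
```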

The DME also may provide operator-specific functionality, such as location verification. When the DME provides operator-specific functionality, for security it resides in the cloudlet tier on the operator’s infrastructure, instead of at the regional tier.

The Auto-Provisioner service handles automatically deploying user applications based on user-defined policies and monitoring of metrics and events. It layers on top of the Controller by calling the same Controller APIs that the MC uses to deploy applications.

The Cluster-SVC service deploys MobiledgeX sidecar apps to Kubernetes clusters. These sidecar apps manage metrics and storage. It layers on top of the Controller by calling the same Controller APIs that the MC uses to deploy applications.

EdgeTURN is a customized TURN server that handles unified shell or console access to user containers and VMs running on the cloudlets. This allows users to connect to their application back-ends and enable live debugging.

Unlike global and regional services which typically run in the public cloud, cloudlet services run locally within the cloudlet infrastructure. Cloudlet services manage infrastructure-specific APIs to deploy and manage applications on the infrastructure, monitor health, and export metrics. Note that infrastructure here refers to infrastructure management platforms like Openstack, VMWare, GCP, Azure, etc, and not direct management of hardware infrastructure.

Cloudlet services do not scale horizontally, with redundancy being handled across cloudlets and with cloudlet service restart policies.

The Cloudlet Resource Manager connects to the regional Controller and receives information about what applications should be deployed on the cloudlet. It converts normalized infrastructure independent Controller data into infrastructure specific APIs to deploy user container and VM back-ends. The CRM itself uses a plugin-based model and interface to allow it to easily implement new target infrastructures. Currently implemented infrastructures include Openstack, VMWare vSphere/VCD, GCP, Azure, AWS, and Google Anthos among others.

The Shepherd service manages all metrics and alerts monitoring, collection, and exporting on the cloudlet. It leverages other software such as Prometheus to handle most of the per-Cluster metrics collection, alerts, and health monitoring. These metrics are stored in InfluxDB.

Defined at the MC level, user account data includes users, roles, organizations, RBAC tables, and CloudletPool invitations and responses, user API keys, among others. These are defined in golang and are designed to be stored in the PostgreSQL database, and are managed solely by the MC. This data never traverses into the regional tier.

Defined at the regional level, user object data includes app definitions, app instances, cluster instances, cloudlets, auto-provision policies, auto-scale policies, trust policies, cloudlet pools, etc. These are defined as Protocol Buffer objects, and leverage both the standard protobuf code generator and a plethora of custom generators for generating notify communication code, MC API code, CLI code, unit test and e2e test code, etc. These objects are designed to be stored in the Etcd database.

Defined for the communication between a client device and the DME, client device API data also consists of Protocol Buffer objects and APIs. These allow a client device to query for the best AppInst back-end, receive updates and events, and access operator-specific APIs. These objects are only used for communication and are not stored in persistent storage.

There are two sets of external facing APIs that are exposed publicly. Both are secure endpoints which use server-side public certificates.

The MC APIs allow users to log into the MobiledgeX platform, manage users and RBAC permissions, deploy and manage their applications, and view metrics and events. These APIs are accessible to the user directly as JSON-based REST APIs, or indirectly via the UI web server or mcctl command line utility. These APIs include CRUD APIs for managing both the user account data and the application data, where application data is per region, and any APIs for application data are forwarded to the corresponding regional Controller.

The DME APIs allow client devices to query the MobiledgeX platform to find the best AppInst on a cloudlet to connect to, get update events and notifications, and provide operator-specific location and identity services. These use the client device API data models, and are gRPC APIs, but also support equivalent JSON-based REST APIs.

Communication between MobiledgeX services and 3rd party services is fairly straightforward: the MobiledgeX service acts as the client and the 3rd party service as the server, using the APIs defined by the 3rd party service. Examples of this include:

- MC to PostgreSQL, Gitlab, Artifactory, Vault, etc.

- Controller to Etcd, InfluxDB, Vault, etc.

- CRM to infrastructure API endpoint (Openstack, vSphere, GCP, etc.)

There are only a few exceptions to this. Gitlab/Artifactory treat MC as an LDAP server in order to validate user logins directly to Gitlab/Artifactory, to allow users to upload images directly. For horizontal scaling, both MC to PostgreSQL and Controller to Etcd establish listener/watcher connections respectively, so that all horizontally scaled instances can potentially be updated when a database change is initiated from one of the instances.

Between MobiledgeX services, communication runs primarily on the notify framework. This framework is built on top of the application Protocol Buffer data models, and uses a bidirectional gRPC stream to transport data. It has two purposes: one is to replicate state from a node that is the source of truth to another node, and the other is to send messages.

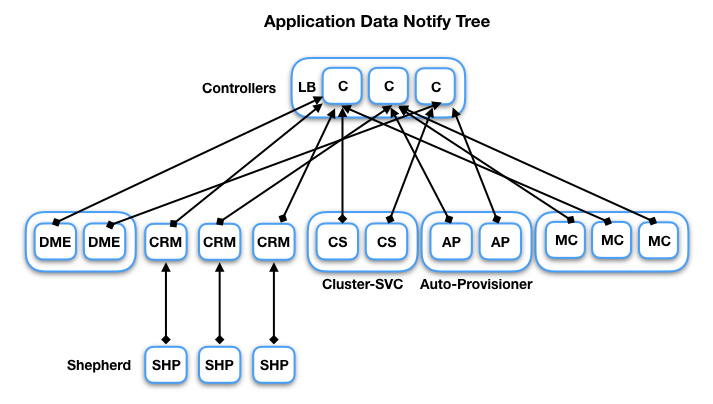

The notify framework is arranged like a tree, with Controllers at the top of the tree. The diagram above shows the full tree with examples of horizontal scaling. The framework itself is agnostic to the number of levels, but currently there are only three levels to the tree. Leaf nodes always know the address of their parent node, so connections are established from child to parent. However, data flow is bidirectional.

The notify tree replicates Application data from the Controller nodes, which are the source of truth, down to the child nodes. This data may then be pushed down again, e.g. from CRM to Shepherd. Replicating state allows child nodes to monitor and cache data, and act upon it when appropriate without the parent node needing any logic related to the child node. For example, all the auto-provisioning logic is built into the Auto-Provisioner service. It monitors app data, auto-prov policies, app instances, and cloudlet status. Whenever any of these changes, it figures out if a new AppInst should be deployed or undeployed, and from where. The Controller does not need to call a specific API to initiate this, nor even be aware that it’s happening; it just forwards data the same way as it forwards data to other children. The same applies to the CRM for deploying AppInsts, and the Cluster-SVC for deploying sidecar AppInsts.

Data never flows east-west, even between nodes in the same horizontal scaling group. The only exception to this is the Controllers, which keep in sync with each other by synchronizing to Etcd. Data consistency east-west is guaranteed by the same data being pushed to each child by its parent.

The state replication protocol of the notify framework has the following properties:

- Child nodes tell the parent nodes what object types to send (subscription)

- Parents push all objects of those types to children

- Upon disconnect, child nodes retain the cache of objects

- Upon reconnect, parent nodes resend the entire object set, and child nodes update or discard based on the changes they missed while disconnected
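The reconnect behavior described above can be sketched as a full resync against the child's retained cache. This is a simplified in-memory model, not the notify framework itself: the real framework transports Protocol Buffer objects over a bidirectional gRPC stream, and the `Object`, `Parent`, and `Child` types and their methods are hypothetical.

```go
package main

import "fmt"

// Object is a hypothetical replicated object.
type Object struct {
	Type, Key, Value string
}

type objID struct{ typ, key string }

// Parent is the source of truth for its objects.
type Parent struct {
	objs map[objID]Object
}

func NewParent() *Parent { return &Parent{objs: make(map[objID]Object)} }

func (p *Parent) Put(o Object)           { p.objs[objID{o.Type, o.Key}] = o }
func (p *Parent) Delete(typ, key string) { delete(p.objs, objID{typ, key}) }

// Snapshot returns every object whose type the child subscribed to.
func (p *Parent) Snapshot(subscribed map[string]bool) []Object {
	var out []Object
	for _, o := range p.objs {
		if subscribed[o.Type] {
			out = append(out, o)
		}
	}
	return out
}

// Child caches replicated objects and keeps them across disconnects.
type Child struct {
	subscribed map[string]bool
	cache      map[objID]Object
}

func NewChild(types ...string) *Child {
	c := &Child{subscribed: make(map[string]bool), cache: make(map[objID]Object)}
	for _, t := range types {
		c.subscribed[t] = true
	}
	return c
}

func (c *Child) Get(typ, key string) (Object, bool) {
	o, ok := c.cache[objID{typ, key}]
	return o, ok
}

// Resync applies a full snapshot received on (re)connect: new or changed
// objects are stored, and cached objects missing from the snapshot are
// discarded because they were deleted while the child was disconnected.
func (c *Child) Resync(snapshot []Object) (updated, discarded int) {
	seen := make(map[objID]bool, len(snapshot))
	for _, o := range snapshot {
		id := objID{o.Type, o.Key}
		seen[id] = true
		if old, ok := c.cache[id]; !ok || old != o {
			c.cache[id] = o
			updated++
		}
	}
	for id := range c.cache {
		if !seen[id] {
			delete(c.cache, id)
			discarded++
		}
	}
	return updated, discarded
}

func main() {
	parent := NewParent()
	parent.Put(Object{Type: "App", Key: "game", Value: "v1"})
	parent.Put(Object{Type: "App", Key: "chat", Value: "v1"})

	child := NewChild("App")
	child.Resync(parent.Snapshot(child.subscribed)) // initial connect

	// While the child is disconnected, the parent's state changes.
	parent.Put(Object{Type: "App", Key: "game", Value: "v2"})
	parent.Delete("App", "chat")
	parent.Put(Object{Type: "Cloudlet", Key: "berlin-1", Value: "ready"}) // not subscribed

	updated, discarded := child.Resync(parent.Snapshot(child.subscribed))
	fmt.Println("updated:", updated, "discarded:", discarded)
}
```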

State replication can also happen from child to parent, where the child is considered the source of truth for the data. In those cases, parents track which child the data comes from, and flush that data if the child connection is lost.

Single-shot messages can also be sent across the framework. For example, these are used to push metrics from Shepherd up to the Controller, which pushes them into InfluxDB.

Only a small portion of the data is replicated to the MC as an optimization to avoid MC querying data from the regional Controllers too often.

When the Master Controller forwards a user’s application data request to the appropriate regional Controller, the Master Controller calls the Controller’s gRPC API and does not use the notify framework.

The cloudlet services, i.e. CRM and Shepherd, have an additional communication path to the Controller beyond the notify framework connection. The cloudlet access key API is used to authenticate to the Controller with a per-cloudlet access key. This communication path is discussed in the Security section.

MobiledgeX supports several device operating systems, providing SDKs that can be integrated with client applications on the device. While the DME APIs can be called directly by the client application, using the SDK provides a native and more seamless integration with the software platform.

Supported SDK platforms are Android, iOS, and Unity.

The Client SDK also implements the persistent connection to the DME API over which the DME can push updates and events to the client. This connection is a gRPC bidirectional stream and is end-to-end TLS encrypted.

Users authenticate via the MC API using a username and password. Login passwords have both a minimum length and a minimum strength requirement. Admin passwords have a much higher minimum strength requirement. Passwords are stored one-way hashed and salted, with a high number of iterations, using the PBKDF2 algorithm. Any failed login attempt triggers a 3 second delay penalty. Upon successful login, a JSON Web Token (JWT) generated by Vault is returned to the client. This token is valid for 24 hours and can then be used to access other authenticated APIs.
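The salted, iterated hashing scheme can be sketched with a small PBKDF2 implementation following RFC 8018, built only on the Go standard library. HMAC-SHA256 as the pseudorandom function, the 16-byte salt, the 32-byte key length, and the iteration count are all assumptions made for this example; the source names PBKDF2 but none of its parameters.

```go
package main

import (
	"crypto/hmac"
	"crypto/rand"
	"crypto/sha256"
	"crypto/subtle"
	"encoding/binary"
	"fmt"
)

const (
	iterations = 10000 // assumed value; the source only says "a high number"
	saltLen    = 16    // assumed
	keyLen     = 32    // assumed
)

// pbkdf2SHA256 derives n bytes from password and salt per RFC 8018,
// using HMAC-SHA256 as the pseudorandom function.
func pbkdf2SHA256(password, salt []byte, iter, n int) []byte {
	prf := hmac.New(sha256.New, password)
	hashLen := prf.Size()
	numBlocks := (n + hashLen - 1) / hashLen
	out := make([]byte, 0, numBlocks*hashLen)
	var idx [4]byte
	for block := 1; block <= numBlocks; block++ {
		// U_1 = PRF(password, salt || INT(block))
		prf.Reset()
		prf.Write(salt)
		binary.BigEndian.PutUint32(idx[:], uint32(block))
		prf.Write(idx[:])
		u := prf.Sum(nil)
		t := make([]byte, hashLen)
		copy(t, u)
		// U_i = PRF(password, U_{i-1}); T = U_1 xor U_2 xor ... xor U_iter
		for i := 1; i < iter; i++ {
			prf.Reset()
			prf.Write(u)
			u = prf.Sum(nil)
			for j := range t {
				t[j] ^= u[j]
			}
		}
		out = append(out, t...)
	}
	return out[:n]
}

// HashPassword generates a random salt and returns it with the derived hash.
func HashPassword(password string) (salt, hash []byte, err error) {
	salt = make([]byte, saltLen)
	if _, err = rand.Read(salt); err != nil {
		return nil, nil, err
	}
	return salt, pbkdf2SHA256([]byte(password), salt, iterations, keyLen), nil
}

// VerifyPassword recomputes the hash and compares it in constant time.
func VerifyPassword(password string, salt, want []byte) bool {
	got := pbkdf2SHA256([]byte(password), salt, iterations, keyLen)
	return subtle.ConstantTimeCompare(got, want) == 1
}

func main() {
	salt, hash, err := HashPassword("correct horse battery staple")
	if err != nil {
		panic(err)
	}
	fmt.Println(VerifyPassword("correct horse battery staple", salt, hash))
	fmt.Println(VerifyPassword("wrong password", salt, hash))
}
```

Only the salt and the derived hash would be stored. Because the derivation is one-way, a login attempt is checked by repeating it with the stored salt and comparing the results.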

Users may also create an API key that has fewer permissions than their user account, and use the API key for automated access.

Two-factor authentication may also be enabled on a per-account basis. This requires a time-based one-time password (TOTP) in addition to the password to log in. The TOTP is sent to the user’s email address.

Clients connecting via the DME API can optionally authenticate with the DME by providing a signed message. This message is signed with a private key that the client has access to (probably via a direct connection to the developer’s authentication back-end), and the DME verifies the signature using the public key that the developer adds to the MobiledgeX platform during App creation. This authenticates the identity of the application. Authentication of the end user or device is the responsibility of the client application.
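The signed-message exchange can be sketched with a standard signature scheme. Ed25519 is used here purely for illustration, since the source does not say which algorithm or message format the platform uses, and the function names and message contents are hypothetical.

```go
package main

import (
	"crypto/ed25519"
	"crypto/rand"
	"fmt"
)

// NewKeyPair creates the developer's key pair. The public half would be
// added to the platform during App creation; the private half stays with
// the developer's authentication back-end.
func NewKeyPair() (ed25519.PublicKey, ed25519.PrivateKey, error) {
	return ed25519.GenerateKey(rand.Reader)
}

// SignAuthMessage is what the developer's back-end would do for a client.
func SignAuthMessage(priv ed25519.PrivateKey, msg []byte) []byte {
	return ed25519.Sign(priv, msg)
}

// VerifyAuthMessage is the check the DME side would perform using the
// public key registered for the application.
func VerifyAuthMessage(pub ed25519.PublicKey, msg, sig []byte) bool {
	return ed25519.Verify(pub, msg, sig)
}

func main() {
	pub, priv, err := NewKeyPair()
	if err != nil {
		panic(err)
	}
	msg := []byte("org=acme app=game version=1.0") // hypothetical message content
	sig := SignAuthMessage(priv, msg)

	fmt.Println(VerifyAuthMessage(pub, msg, sig))                       // genuine message
	fmt.Println(VerifyAuthMessage(pub, []byte("org=evil app=x"), sig)) // tampered message
}
```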

Both the MC and DME external API endpoints have configurable rate limiters that can rate limit by aggregate, per IP, per user (for the MC API), or per API (the self-service UserCreate API is heavily limited). There are several different types of rate limiting algorithms that can be configured.
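One common rate limiting algorithm is the token bucket, sketched below with a separate bucket per key so that the same type can limit per IP, per user, or per API depending on what is used as the key. Whether the platform uses a token bucket is an assumption; the source only says several algorithms can be configured. The `Limiter` type and its methods are hypothetical.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// bucket tracks the tokens available to one key.
type bucket struct {
	tokens float64
	last   int64 // time of the last refill, in Unix nanoseconds
}

// Limiter is a per-key token bucket: each key may burst up to `burst`
// requests, and tokens refill at `rate` per second.
type Limiter struct {
	mu      sync.Mutex
	rate    float64
	burst   float64
	buckets map[string]*bucket
}

func NewLimiter(ratePerSec float64, burst int) *Limiter {
	return &Limiter{rate: ratePerSec, burst: float64(burst), buckets: make(map[string]*bucket)}
}

// AllowAt reports whether a request for key is allowed at the given time.
// Taking the time as a parameter keeps the logic deterministic and testable.
func (l *Limiter) AllowAt(key string, nowNanos int64) bool {
	l.mu.Lock()
	defer l.mu.Unlock()

	b, ok := l.buckets[key]
	if !ok {
		b = &bucket{tokens: l.burst, last: nowNanos}
		l.buckets[key] = b
	}
	// Refill according to elapsed time, capped at the burst size.
	if elapsed := nowNanos - b.last; elapsed > 0 {
		b.tokens += l.rate * float64(elapsed) / 1e9
		if b.tokens > l.burst {
			b.tokens = l.burst
		}
		b.last = nowNanos
	}
	if b.tokens < 1 {
		return false
	}
	b.tokens--
	return true
}

// Allow applies the limiter using the current wall-clock time.
func (l *Limiter) Allow(key string) bool {
	return l.AllowAt(key, time.Now().UnixNano())
}

func main() {
	perIP := NewLimiter(1, 2) // 1 request per second, burst of 2
	for i := 0; i < 3; i++ {
		fmt.Println(perIP.Allow("203.0.113.7"))
	}
}
```

A heavily limited API such as the self-service UserCreate could use its own `Limiter` with a much lower rate, while an aggregate limit is simply a limiter queried with one constant key.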

All external facing APIs require server-side TLS, where our servers have a public-CA issued certificate.

Internal communication between our services is always mTLS, where both client and server have certificates issued by an intermediate certificate stored in Vault. The root self-signed certificate is stored in an offline Vault. There are different intermediate certs for global versus regional versus cloudlet tiers. Additionally, non-global certs are tagged with the region name, and non-global services will refuse connections if the region tag does not match.

In cases of our services talking to a 3rd party service, there is always mutual authentication, with the 3rd party server given a public-CA issued cert, and our service as the client authenticates via a key or password that is stored encrypted in Vault.

All sensitive data outside of user passwords is stored in Hashicorp Vault. This includes access credentials for operator infrastructure and public clouds, access credentials for 3rd party services, application specific secrets, etc. Access to this data is limited by roles, and only the Master Controller and Controller have direct access to Vault. All communication to Vault is TLS encrypted. No secrets are ever stored in the regional Etcd.

Vault also acts as the certificate issuer for internal PKI certificates as noted above.

Services running on cloudlet infrastructure are in an environment that is not under the direct control of MobiledgeX, and may be less secure. Therefore they are further restricted by a per-cloudlet access key. This access key is used by the CRM and Shepherd to connect to and authenticate against the Controller, from which they request the Controller to perform certain actions on their behalf that require secret credentials, including:

- Issue or remove DNS entries

- Issue internal PKI certificates to connect via the notify framework (valid for 72 hours)

- Issue Vault signed SSH certificates

- Retrieve cloudlet-specific infrastructure access credentials (Openstack, Vsphere, etc)

- Request docker and VM image registry access credentials

Controllers verify the access key is valid for the given cloudlet, and restrict actions based on the cloudlet key (i.e. one cloudlet cannot access another cloudlet’s access credentials, nor change DNS entries for another cloudlet). Cloudlet access keys do not expire, but can be managed via the MC APIs, and can be easily revoked and reissued. The cloudlet access key is stored with restricted permissions on the platform VM running the CRM and Shepherd services.
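The scoping and revocation rules above can be sketched as follows. The source does not say what form the access key takes, so this example treats it as a shared secret whose SHA-256 digest the Controller stores; that choice, along with the `AccessKeys` type and its methods, is purely an assumption for illustration.

```go
package main

import (
	"crypto/sha256"
	"crypto/subtle"
	"errors"
	"fmt"
)

var (
	ErrBadKey        = errors.New("invalid or revoked cloudlet access key")
	ErrWrongCloudlet = errors.New("access key is not valid for the target cloudlet")
)

// AccessKeys maps each cloudlet to the digest of its current access key.
type AccessKeys struct {
	digests map[string][sha256.Size]byte
}

func NewAccessKeys() *AccessKeys {
	return &AccessKeys{digests: make(map[string][sha256.Size]byte)}
}

// Issue sets (or replaces) the access key for a cloudlet.
func (a *AccessKeys) Issue(cloudlet, key string) {
	a.digests[cloudlet] = sha256.Sum256([]byte(key))
}

// Revoke removes the cloudlet's key so later requests are rejected.
func (a *AccessKeys) Revoke(cloudlet string) {
	delete(a.digests, cloudlet)
}

// Authorize checks that key belongs to caller and that the caller only
// acts on its own cloudlet's resources (credentials, DNS entries, ...).
func (a *AccessKeys) Authorize(caller, key, target string) error {
	want, ok := a.digests[caller]
	got := sha256.Sum256([]byte(key))
	if !ok || subtle.ConstantTimeCompare(want[:], got[:]) != 1 {
		return ErrBadKey
	}
	if caller != target {
		return ErrWrongCloudlet
	}
	return nil
}

func main() {
	keys := NewAccessKeys()
	keys.Issue("berlin-1", "key-for-berlin")

	fmt.Println(keys.Authorize("berlin-1", "key-for-berlin", "berlin-1")) // own resources
	fmt.Println(keys.Authorize("berlin-1", "key-for-berlin", "munich-1")) // another cloudlet

	keys.Revoke("berlin-1")
	fmt.Println(keys.Authorize("berlin-1", "key-for-berlin", "berlin-1")) // after revocation
}
```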

Within a cloudlet on operator infrastructure, communication between the CRM and the various MobiledgeX VMs or nodes running Docker or Kubernetes clusters is encrypted by SSH. Authentication is handled by Vault-signed SSH certificates which have a 72 hour validity. Password-based authentication is disabled. Admins logging into these VMs for debugging authenticate the same way, the only difference being that their SSH certificate is valid for only 5 minutes.

Scaling is achieved in two ways: one is the division of the architecture into three tiers, and the other is scaling each service individually. The three tier model allows for dividing the world into separate regions, and dividing each region into cloudlets. All Edge-Cloud services above the cloudlet tier are horizontally scalable to be able to scale with load, and the notify framework is designed with this in mind.

Redundancy is also achieved by horizontal scaling, and potentially by separating horizontally scaled services into separate physical locations. For example, the set of Controllers could have some instances in US West and some in US East, as long as the Etcd cluster nodes are also separated but part of the same cluster. 3rd party services in general all have their own redundancy and HA schemes, so we rely on those schemes.

Application back-end redundancy comes primarily from redundancy across cloudlets and the auto-provisioning policy for HA, whereby the system detects unhealthy or offline AppInsts, and automatically spawns new ones on different cloudlets to satisfy the client demand.

The client SDK also has provisions for redundancy by prompting the client app to switch to a better back-end based on the current back-end becoming unhealthy, or a better back-end becoming available due to a new one being deployed or the device moving to a new location.

Distributed systems are notoriously difficult to debug, so logging and debuggability have been a focus from the start.

All services use the same MobiledgeX logging package, which enforces structured logging, and logs both to local disk and to a trace defined by the OpenTracing standard and implemented by a Jaeger service backed by ElasticSearch. Associating logs with a trace and pushing these traces to Jaeger and ElasticSearch allows us to see only the logs for a given action, and when and what services participated in that action. There is also a custom set of APIs for searching the ElasticSearch trace data, which are more powerful than the open-sourced Jaeger UI.

All services can also generate events, which are also pushed to ElasticSearch. All events include a trace ID, so the full set of logs associated with the action can be looked up from the event. Examples of events include when new AppInsts are auto-provisioned, or the system detects an AppInst or cloudlet offline. Operators may also have events pushed to an operator-managed Kafka instance.

MC creates audit logs for every user initiated API call, which are just events that also include the user information and the API call and data (minus any sensitive data).

A custom set of APIs allows for searching the ElasticSearch event data.

An admin-only set of APIs allows for running generic “debug commands”. These commands are propagated via the notify framework, and any service that recognizes the command will act upon it and respond. The API allows for targeting the command to a specific type of service, or a specific service by its host name. Services can implement their own debug commands without requiring the API to be updated. This is used, for example, to dump the contents of internal data, perform some sort of action like refreshing data, or trigger a periodic function immediately.
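The dispatch model can be sketched as a per-service registry of command handlers, where a generic request carries only a command name, optional targeting fields, and free-form arguments. In this simplified model a plain slice of nodes stands in for propagation over the notify framework, and all type, field, and command names are hypothetical.

```go
package main

import "fmt"

// DebugRequest is a generic command envelope. Empty targeting fields
// mean "any service type" or "any host".
type DebugRequest struct {
	Cmd      string
	NodeType string
	Hostname string
	Args     string
}

// DebugHandler runs a command and returns its textual output.
type DebugHandler func(args string) string

// Node is one running service instance with its own command registry.
type Node struct {
	Type     string
	Hostname string
	handlers map[string]DebugHandler
}

func NewNode(typ, hostname string) *Node {
	return &Node{Type: typ, Hostname: hostname, handlers: make(map[string]DebugHandler)}
}

// Register adds a service-specific command; the API layer never needs
// to know which commands exist.
func (n *Node) Register(cmd string, h DebugHandler) {
	n.handlers[cmd] = h
}

// Handle runs the command if this node is targeted and recognizes it.
func (n *Node) Handle(req DebugRequest) (string, bool) {
	if req.NodeType != "" && req.NodeType != n.Type {
		return "", false
	}
	if req.Hostname != "" && req.Hostname != n.Hostname {
		return "", false
	}
	h, ok := n.handlers[req.Cmd]
	if !ok {
		return "", false
	}
	return h(req.Args), true
}

// Broadcast delivers the request to every node and collects the replies
// of those that acted on it, keyed by host name.
func Broadcast(nodes []*Node, req DebugRequest) map[string]string {
	replies := make(map[string]string)
	for _, n := range nodes {
		if out, ok := n.Handle(req); ok {
			replies[n.Hostname] = out
		}
	}
	return replies
}

func main() {
	crm := NewNode("crm", "crm-berlin-1")
	crm.Register("refresh", func(args string) string { return "refreshed " + args })
	shepherd := NewNode("shepherd", "shepherd-berlin-1")
	shepherd.Register("dump-cache", func(string) string { return "3 objects cached" })

	nodes := []*Node{crm, shepherd}
	fmt.Println(Broadcast(nodes, DebugRequest{Cmd: "refresh", Args: "cloudlet-info"}))
	fmt.Println(Broadcast(nodes, DebugRequest{Cmd: "dump-cache", NodeType: "shepherd"}))
}
```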