This is a TensorRT-based SAM3 inference repository implemented in C++. It currently implements image preprocessing, image encoding, text encoding, decoding, and post-processing, and supports inference on images with multiple text prompts.

- Uses TensorRT engine

- C++ + CUDA implementation of preprocessing/post-processing kernels, suitable for efficient GPU operation

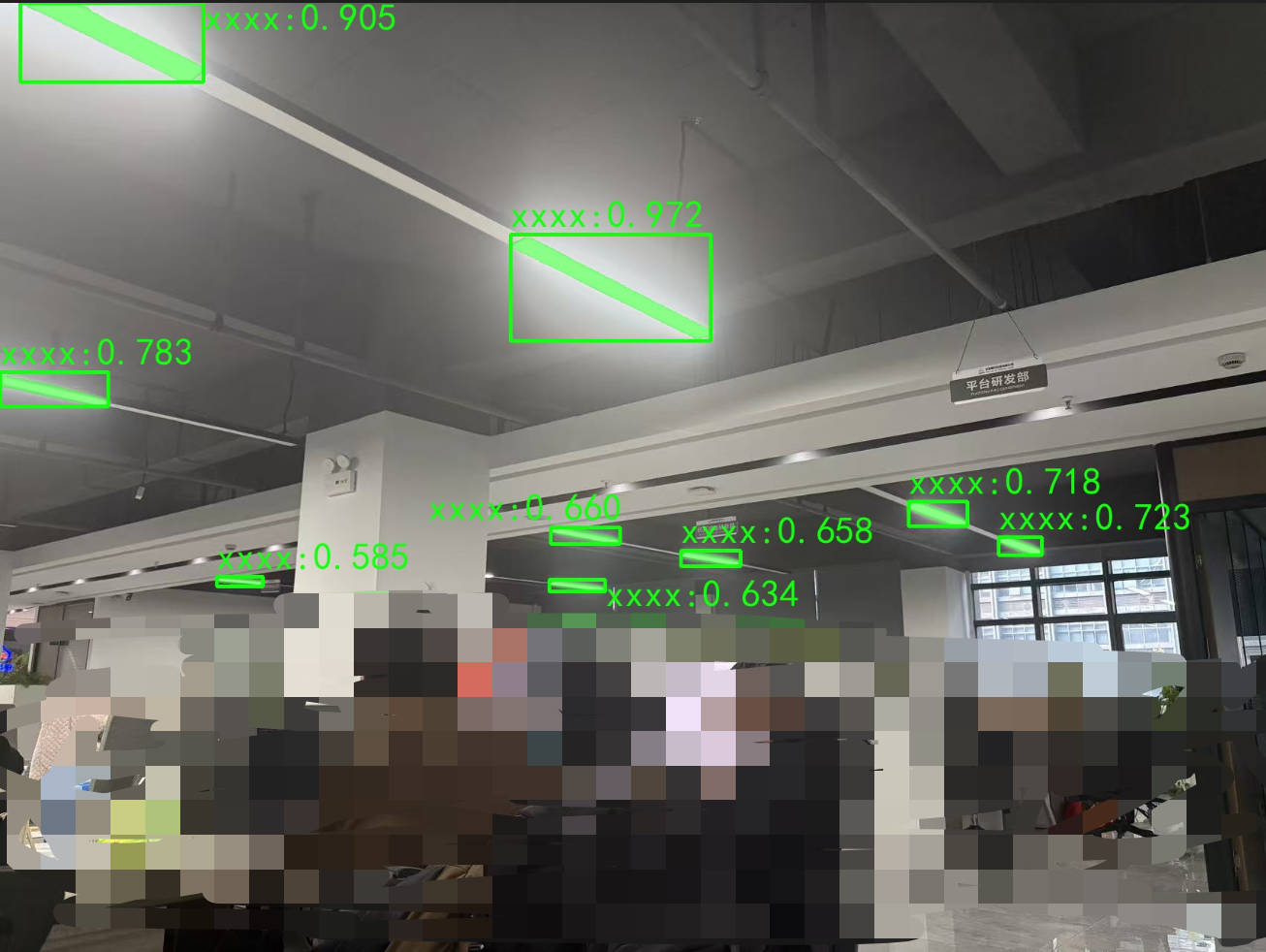

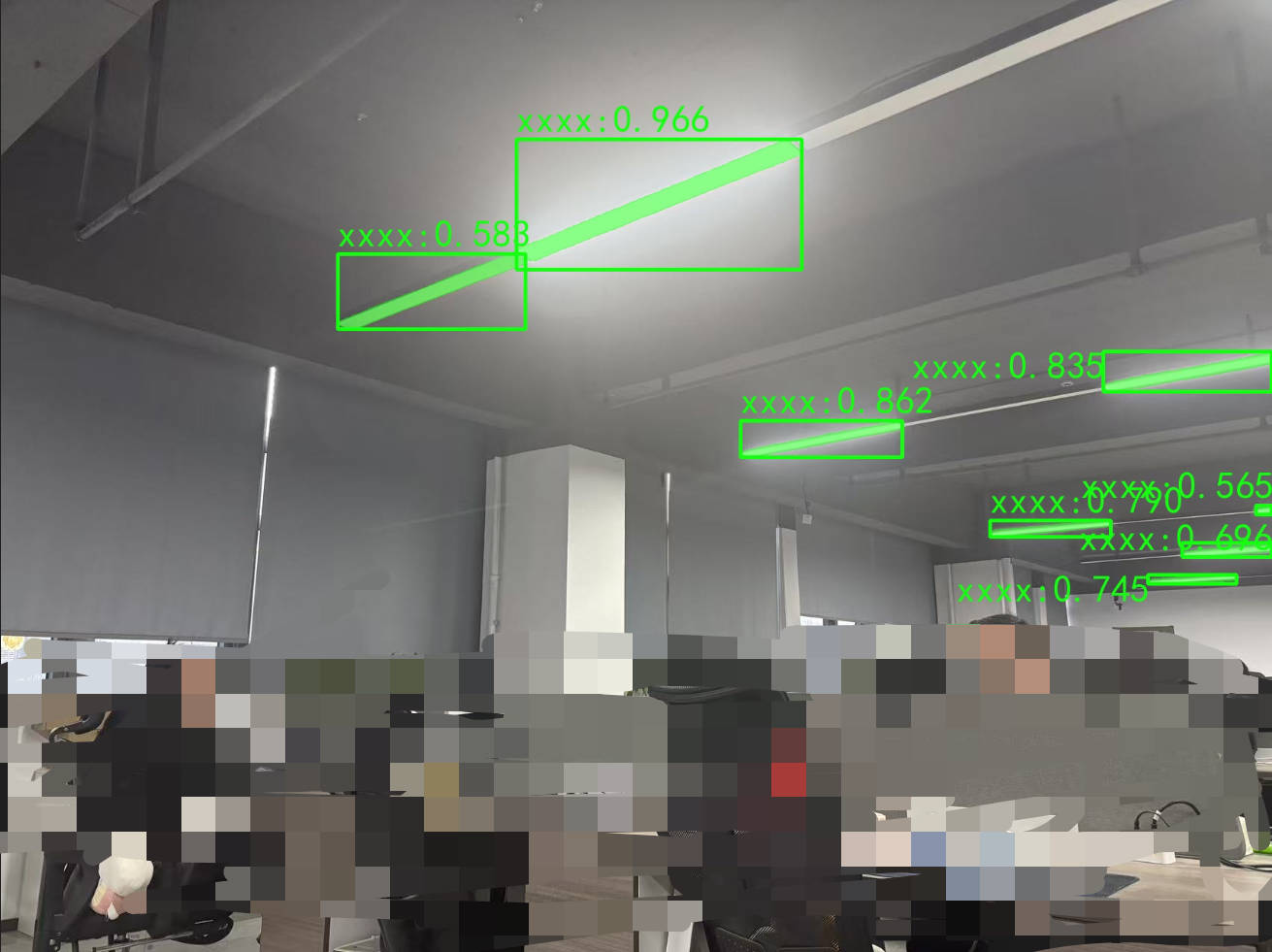

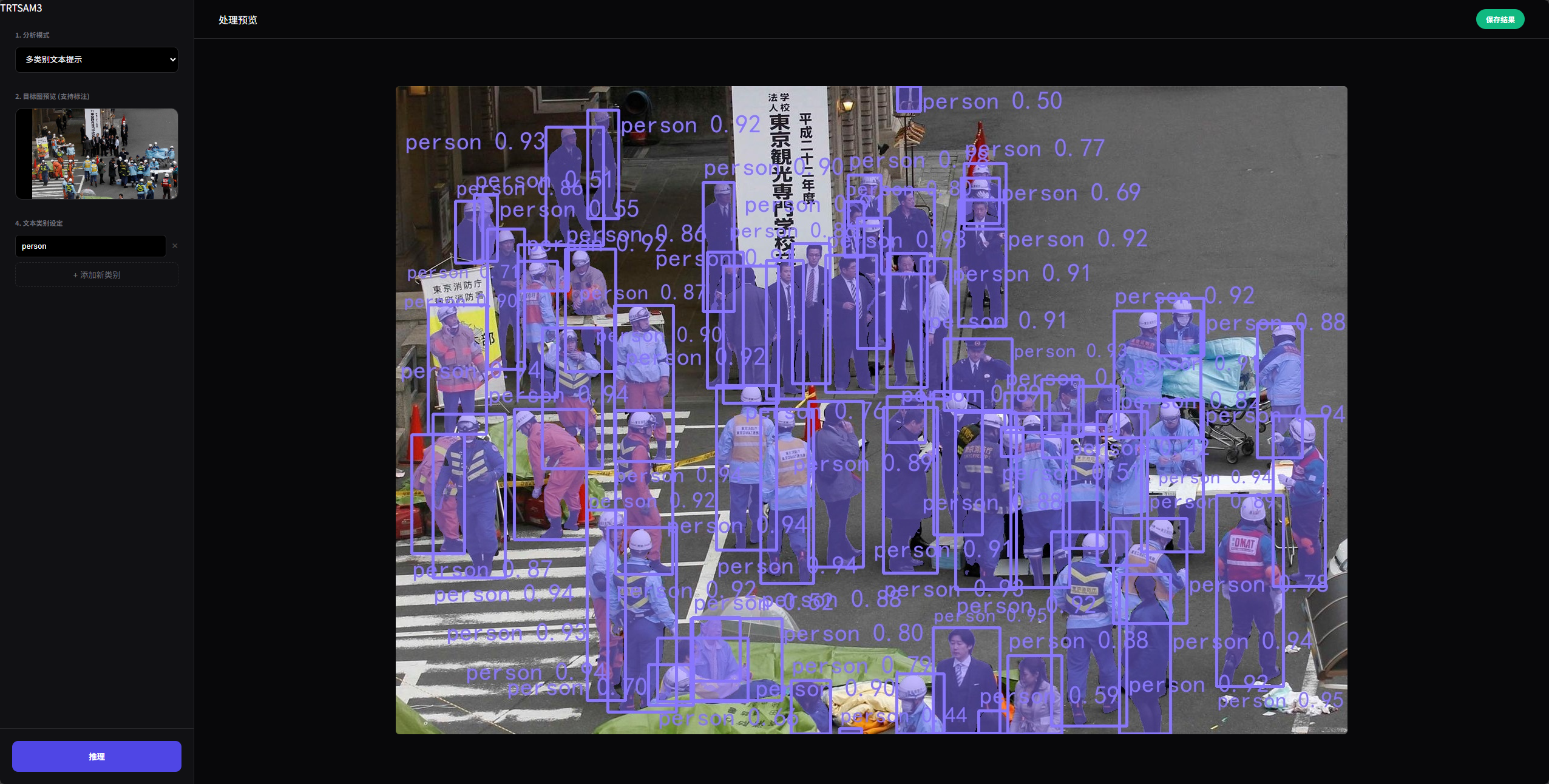

- Supports mask/box output based on text prompts and geometric bounding boxes

- Utilizes batching and memory reuse to simultaneously recognize multiple text prompt categories

- Draw boxes on image A, recognize on image B
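As an illustration of the preprocessing step listed above, here is a minimal CPU reference sketch of the kind of layout conversion and normalization a preprocessing kernel typically performs. It is not this repository's code: the function name `hwc_to_chw_normalized` and the mean/std values of 0.5 are assumptions, and resizing is omitted. The repository performs its preprocessing in CUDA kernels on the GPU.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical CPU reference: convert an interleaved (HWC) 8-bit RGB image
// into a planar (CHW) float tensor, normalized as (x / 255 - mean) / stddev.
// The default mean/stddev values are assumptions, not taken from this repository.
std::vector<float> hwc_to_chw_normalized(const std::vector<std::uint8_t>& hwc,
                                         int height, int width,
                                         float mean = 0.5f, float stddev = 0.5f) {
    const int channels = 3;
    const std::size_t plane = static_cast<std::size_t>(height) * width;
    assert(hwc.size() == plane * channels);  // input must hold H * W * 3 bytes

    std::vector<float> chw(plane * channels);
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            const std::size_t pixel = static_cast<std::size_t>(y) * width + x;
            for (int c = 0; c < channels; ++c) {
                // Scale to [0, 1], then normalize and write into channel plane c.
                const float value = hwc[pixel * channels + c] / 255.0f;
                chw[c * plane + pixel] = (value - mean) / stddev;
            }
        }
    }
    return chw;
}
```

In a CUDA version, the two outer loops would typically map to one thread per pixel, which is why this step suits the GPU well.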


Refer to the repository below to export the ONNX models:

https://github.com/jamjamjon/usls.git

Pre-exported ONNX models are available here:

https://huggingface.co/tangliyang/onnx_model_store

- Refer to the repository below to perform INT8 quantization on the SAM3 vision encoder model: https://github.com/NVIDIA/Model-Optimizer/tree/main/examples/windows/onnx_ptq/sam2

- Server

Ubuntu 24.04

- GPU

NVIDIA GeForce RTX 4090

- Image

nvcr.io/nvidia/tensorrt:25.10-py3

- Multi-word Text Prompts: can recognize multiple categories simultaneously

- Geometric Prompts

- Mixed Prompts

- Prompt boxes on image A, recognition on image B
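To make the prompt types above concrete, here is a small sketch of one way a caller might represent text, geometric, and mixed prompts. The `Box`, `Prompt`, `PromptKind`, and `classify` names are hypothetical and purely illustrative; they are not this repository's API, and the corner-based box convention is an assumption.

```cpp
#include <string>
#include <vector>

// Hypothetical types for illustration only; not this repository's API.
struct Box {
    float x1, y1, x2, y2;  // corner coordinates in pixels (assumed convention)
};

struct Prompt {
    std::string text;        // e.g. "person"; empty means no text prompt
    std::vector<Box> boxes;  // geometric prompt boxes; may be empty
};

enum class PromptKind { Empty, Text, Geometric, Mixed };

// Classify a prompt by which of its parts are present.
PromptKind classify(const Prompt& p) {
    const bool has_text = !p.text.empty();
    const bool has_boxes = !p.boxes.empty();
    if (has_text && has_boxes) return PromptKind::Mixed;
    if (has_text) return PromptKind::Text;
    if (has_boxes) return PromptKind::Geometric;
    return PromptKind::Empty;
}
```

Under this sketch, recognizing several categories at once would correspond to passing a `std::vector<Prompt>` with one text entry per category.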

Around 50ms

cmake .. -DCMAKE_PREFIX_PATH="$(python3 -m pybind11 --cmakedir)"

make -j$(nproc)

https://github.com/jamjamjon/usls.git

- This repository is an example for personal and research use. Issues are welcome.