A Python program that uses a CNN (Convolutional Neural Network) and a simple webcam to translate eye gestures into commands the computer can understand, allowing people to play video games using just their eyes.

AMAZING, don't you think?

This project was selected as a finalist to be shown at Ironhack's HackShow event. Update: this project won the Ironhack HackShow contest.

I'm leaving some short videos playing GTA V using my eyes.

getting.the.car.mp4

Looking up starts and stops moving, looking left or right moves left or right, and closing the eyes gets into the car.

Fighting.and.getting.a.car.mp4

A left wink hits, and closing the eyes then gets into the car.

Driving.mp4

A right wink starts CAR_MODE; after that, turning the head slightly or moving the eyes left or right controls the car.
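The control scheme in these clips behaves like a small state machine. The sketch below is one hypothetical way to model it: the gesture labels (`eyes_up`, `wink_right`, ...), the `Controller` class, and the action names are all illustrative assumptions rather than the project's real identifiers, and a real program would send key or mouse events instead of returning strings.

```python
from dataclasses import dataclass


@dataclass
class Controller:
    """Hypothetical model of the eye language shown in the clips."""

    moving: bool = False    # toggled by looking up
    car_mode: bool = False  # switched on by a right wink

    def handle(self, gesture: str) -> str:
        """Return an illustrative action name for one recognized gesture."""
        if gesture == "eyes_up":
            # Looking up starts or stops moving.
            self.moving = not self.moving
            return "start_moving" if self.moving else "stop_moving"
        if gesture == "wink_right":
            self.car_mode = True
            return "enter_car_mode"
        if gesture in ("eyes_left", "eyes_right"):
            side = gesture.split("_")[1]
            # The same gesture steers the car or moves the character.
            return f"steer_{side}" if self.car_mode else f"move_{side}"
        if gesture == "eyes_closed":
            return "get_into_car"
        if gesture == "wink_left":
            return "hit"
        return "no_action"
```

Keeping the state (`moving`, `car_mode`) separate from the recognizer means the same gesture can map to different actions depending on context, which is what the driving clip relies on.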

I wanted to put my AI knowledge to use and apply everything I had learned in the last few months.

Starting with an idea in my head, having 10 days to complete it, and going through the whole process until I had something close to a product anybody could use was a very good challenge for me, and I have to say I had fun along the way :)

I didn't create this program for lazy people who want to play games while eating or lying down. I created it for disabled people who are not able to use their hands to play games. I wanted to give them the feeling of freedom of driving a fast car or going for a walk in a new city.

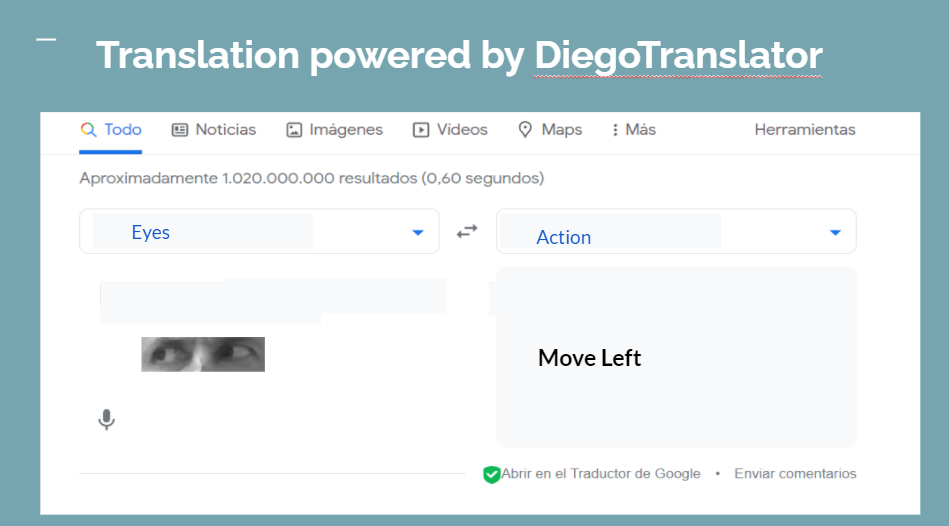

Basically, what I built is a translator that takes an eye image as input and produces an action as output. We could say it is something like this:

- The creation of a Neural Network, in this case a CNN.

- Using the predictions of the CNN to control the game.

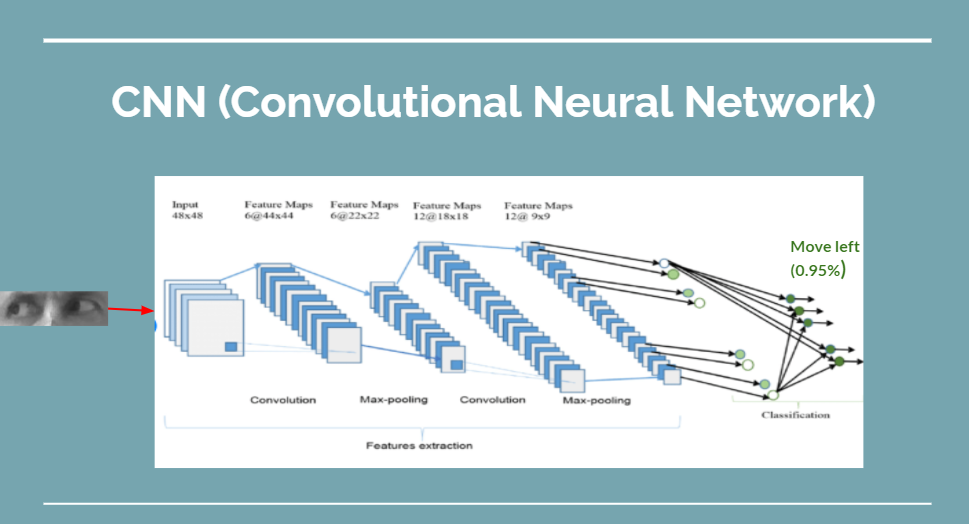

Our CNN is something like this:

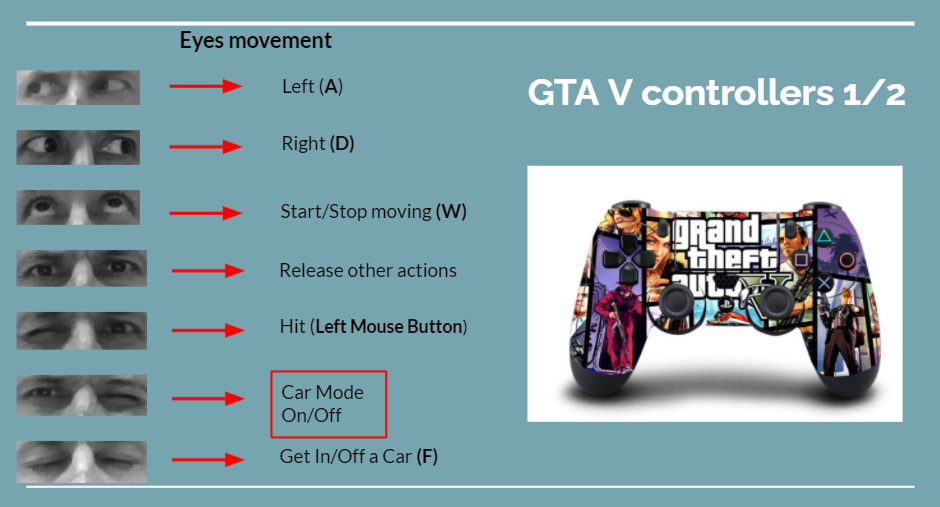

It takes an image (120, 40) as input and has 11 possible outputs, one for every eye gesture I have created.
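The text only fixes the input size (120, 40) and the 11 outputs; the layers in between are not described here. As a rough illustration of how a CNN shrinks such an image down to 11 scores, the sketch below traces tensor shapes through a hypothetical stack of two unpadded 3x3 convolutions, each followed by 2x2 max pooling. The filter counts, kernel sizes, and the single grayscale channel are assumptions made for the example.

```python
INPUT_SHAPE = (120, 40)  # image size stated in the text
NUM_GESTURES = 11        # one output per eye gesture

# Hypothetical conv blocks as (filters, kernel size); each block is
# followed by 2x2 max pooling. These values are assumptions.
CONV_BLOCKS = [(32, 3), (64, 3)]


def conv_out(size: int, kernel: int) -> int:
    """Output length of an unpadded, stride-1 convolution along one axis."""
    return size - kernel + 1


def pool_out(size: int, window: int = 2) -> int:
    """Output length of non-overlapping max pooling along one axis."""
    return size // window


def trace_shapes(input_shape, conv_blocks, num_outputs):
    """Return the tensor shape after every layer, starting with the input."""
    a, b = input_shape
    channels = 1  # assuming a grayscale eye image
    shapes = [(a, b, channels)]
    for filters, kernel in conv_blocks:
        a, b, channels = conv_out(a, kernel), conv_out(b, kernel), filters
        shapes.append((a, b, channels))  # after the convolution
        a, b = pool_out(a), pool_out(b)
        shapes.append((a, b, channels))  # after max pooling
    shapes.append((a * b * channels,))   # flatten
    shapes.append((num_outputs,))        # final layer, one unit per gesture
    return shapes


for shape in trace_shapes(INPUT_SHAPE, CONV_BLOCKS, NUM_GESTURES):
    print(shape)
```

Changing `CONV_BLOCKS` is a quick way to see how deeper or wider stacks affect the size of the flattened vector that feeds the 11-way output.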

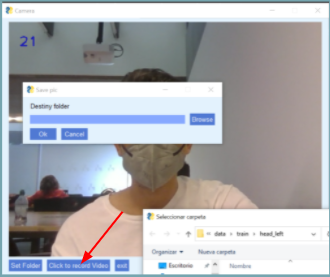

For that I created a program that takes pictures of just the eyes, even if you are moving.

If you are in front of the webcam and you start recording, the program finds your eyes, takes pictures of them, and saves them until you stop it. There is also a button to choose the folder where they are saved.
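The capture tool itself is not shown here, so the following is only a sketch of two small pieces it would need: estimating an eye region from a detected face box, and naming the saved pictures. The `eye_band` heuristic (full face width, starting a quarter of the way down the face), the `top_frac` parameter, and the file naming scheme are assumptions; the real program may locate the eyes quite differently.

```python
from pathlib import Path

TARGET_ASPECT = 120 / 40  # same proportions as the (120, 40) CNN input


def eye_band(face_box, top_frac=0.25):
    """Estimate an eye-region box (x, y, w, h) inside a face box.

    The band spans the full face width and starts `top_frac` of the way
    down the face. Both choices are rough assumptions, not measured values.
    """
    x, y, w, h = face_box
    band_h = round(w / TARGET_ASPECT)  # height that keeps a 3:1 shape
    band_y = y + round(h * top_frac)
    return (x, band_y, w, band_h)


def next_sample_path(folder, gesture, index):
    """Build a numbered file path such as <folder>/<gesture>_00012.png."""
    return Path(folder) / f"{gesture}_{index:05d}.png"


# Example: a 240x240 face box whose top-left corner is at (100, 50).
print(eye_band((100, 50, 240, 240)))
print(next_sample_path("dataset/wink_left", "wink_left", 12).name)
```

Cropping with a fixed 3:1 aspect ratio means every saved picture can be resized to the network input without distortion.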

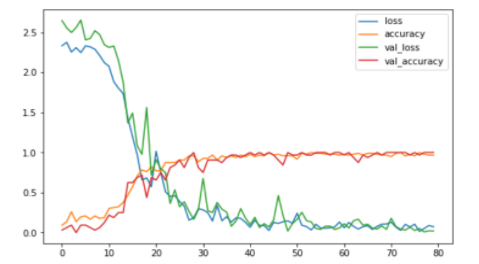

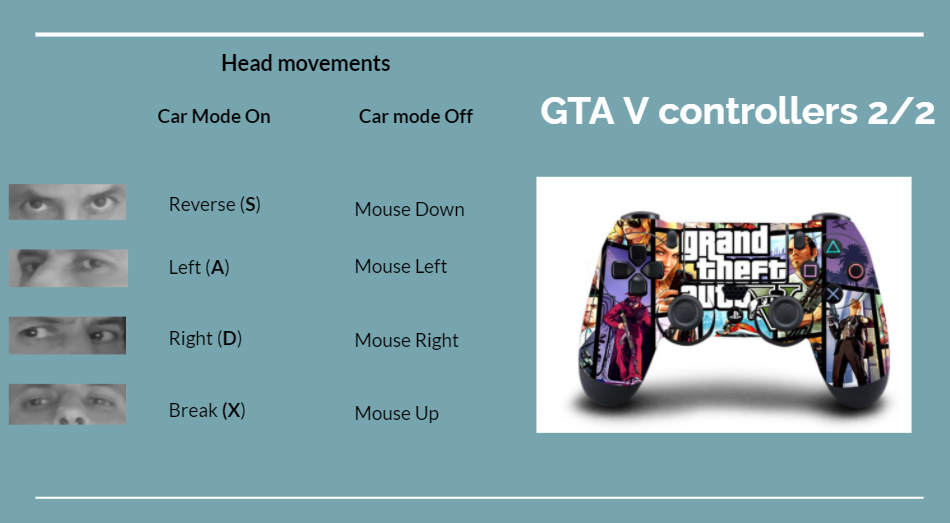

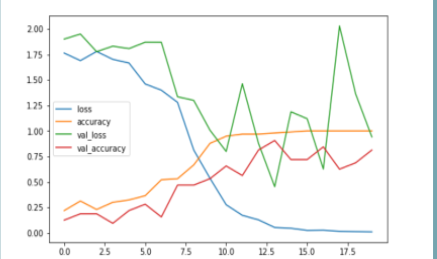

After the first training I didn't have very good results (fitting with around 1000 pics):

But when I started adding more and more pics, everything started to work really well:

- Our program opens the webcam.

- For every single frame, it does the following:

  - Face detection

  - Find the eyes (ROI)

  - Give the eye ROI to the CNN

  - Get the prediction

  - Trigger an action depending on the prediction (only if the prediction is above an accuracy threshold)
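The per-frame steps listed above can be sketched as a single function. This is a minimal outline under stated assumptions, not the project's actual code: `detect_face`, `find_eyes`, `predict`, and `act` are hypothetical callables standing in for the face detector, the eye cropper, the CNN, and the key/mouse layer, and the 0.9 threshold is an arbitrary example value.

```python
from typing import Callable, Optional, Sequence


def pick_gesture(
    scores: Sequence[float],
    labels: Sequence[str],
    threshold: float = 0.9,  # example value, not taken from the project
) -> Optional[str]:
    """Return the highest-scoring label, or None if it is below threshold."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    if scores[best] < threshold:
        return None
    return labels[best]


def process_frame(
    frame,
    detect_face: Callable,
    find_eyes: Callable,
    predict: Callable,
    labels: Sequence[str],
    act: Callable[[str], None],
    threshold: float = 0.9,
) -> Optional[str]:
    """Run one frame through the steps: face, eyes, CNN, action."""
    face = detect_face(frame)       # face detection
    if face is None:
        return None
    eyes = find_eyes(frame, face)   # eye ROI
    if eyes is None:
        return None
    scores = predict(eyes)          # CNN prediction, one score per gesture
    gesture = pick_gesture(scores, labels, threshold)
    if gesture is not None:
        act(gesture)                # only act on confident predictions
    return gesture
```

Passing the detector and the model in as arguments keeps the loop easy to test with stand-in functions before wiring it to a real webcam and network.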

We can see what actions we are able to do in the next section.

- Creation of an eye language

- Tuning the key/mouse parameters (sleep, number of pixels, released …) to make the game smooth

- Speed of winks/blinks (creation of a predictions buffer)

- Light (thousands of pics)

- Computer performance

- More research on image normalization for NN (for lighting issues)

- Create a better eye language.

- Detect objects in the game and trigger actions automatically, for example shooting an enemy as soon as one is found.

- Interface to customize the eye movement → key links

- Customize the sensitivity of eye movements.
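The list above mentions a predictions buffer for dealing with the speed of winks and blinks. How that buffer works is not described, so the sketch below shows one common approach as an assumption: keep the last few per-frame predictions and only report a gesture once it has enough votes in that window. The `PredictionBuffer` name and the default sizes are hypothetical.

```python
from collections import Counter, deque
from typing import Optional


class PredictionBuffer:
    """Sliding window that smooths noisy per-frame predictions."""

    def __init__(self, size: int = 5, min_votes: int = 4):
        self.window = deque(maxlen=size)  # oldest entries drop off automatically
        self.min_votes = min_votes

    def push(self, label: str) -> Optional[str]:
        """Add one prediction; return a gesture only if it dominates the window."""
        self.window.append(label)
        top, votes = Counter(self.window).most_common(1)[0]
        return top if votes >= self.min_votes else None


# Example: a natural blink lasting one frame is ignored,
# while a deliberate wink held for several frames gets through.
buffer = PredictionBuffer(size=5, min_votes=3)
for label in ["center", "eyes_closed", "center", "wink_left", "wink_left", "wink_left"]:
    print(label, "->", buffer.push(label))
```

A larger window filters out more accidental blinks but adds latency, so the sizes would need tuning against the webcam frame rate.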