Production-grade binary Brain Tumor MRI classification system with Bayesian hyperparameter optimization, MLflow experiment tracking, and automated AWS deployment.

Full system demonstration and architecture walkthrough: youtu.be/GdkqQOeT4nU

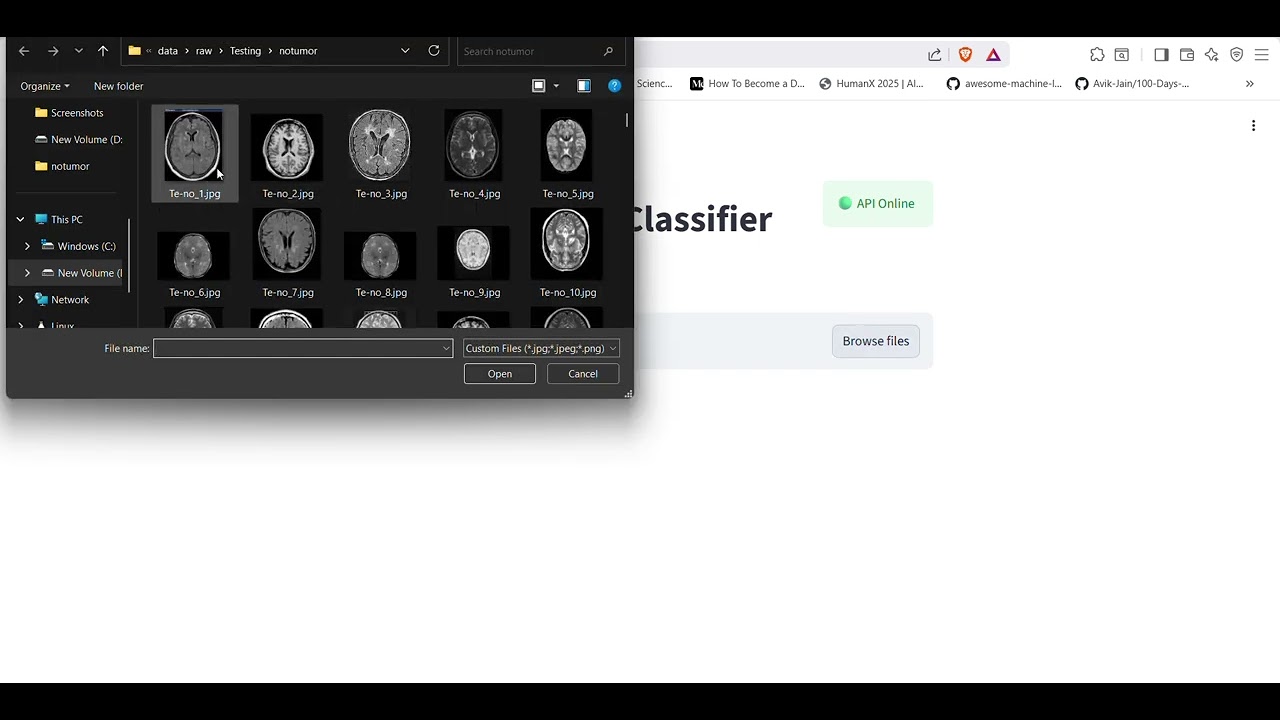

This repository implements a complete ML lifecycle for classifying Brain Tumor MRI scans into Tumor or No Tumor categories. The pipeline spans data ingestion from Kaggle, automated hyperparameter search via Bayesian Optimization, experiment tracking with MLflow, and zero-touch deployment to AWS ECS Fargate through GitHub Actions. The inference service exposes a FastAPI REST endpoint consumed by a local Streamlit client for verification.

The original dataset contains four classes (glioma, meningioma, pituitary, notumor). The DataValidator in src/data_pipeline/data_validation.py maps these to a binary label scheme: all tumor subtypes collapse to class 1, notumor maps to class 0.
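As a rough illustration of that mapping (not the actual DataValidator code; the dictionary and function names here are hypothetical), the collapse from four raw classes to two labels could be sketched like this:

```python
# Hypothetical helper; the real DataValidator may be structured differently.
RAW_TO_BINARY = {
    "glioma": 1,
    "meningioma": 1,
    "pituitary": 1,
    "notumor": 0,
}


def to_binary_label(raw_class: str) -> int:
    """Map a raw Kaggle class name to 0 (no tumor) or 1 (tumor)."""
    key = raw_class.strip().lower()
    if key not in RAW_TO_BINARY:
        raise ValueError(f"Unknown class: {raw_class!r}")
    return RAW_TO_BINARY[key]


print(to_binary_label("glioma"), to_binary_label("notumor"))
```

Raising on unknown class names, rather than defaulting to 0, is a design choice made for this sketch so that a misnamed folder cannot silently become a "No Tumor" label.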

The model is trained on the publicly available Brain Tumor MRI Dataset, curated for deep-learning-based tumor classification from MRI scans.

Source: Brain Tumor MRI Dataset on Kaggle

| Property | Value |
|---|---|
| Total images | 7,023 |
| Raw classes | glioma, meningioma, pituitary, notumor |
| Binary mapping | notumor = 0, all tumors = 1 |
| Image format | JPEG, resized to 224×224×3 |

The dataset is divided into training, validation, and test sets using a two-stage splitting approach implemented in src/data_pipeline/preprocessing.py:

- Kaggle Native Split: The raw dataset ships with pre-defined Training/ and Testing/ directories. DataValidator detects these directories automatically from the filepath.

- Validation Carve-Out: 20% of the Training/ images are randomly sampled to form the validation set using pandas.DataFrame.sample(frac=0.2, random_state=42). The remaining 80% becomes the final training set.

- Test Set: The entire Testing/ directory is used as the hold-out test set, kept completely untouched during training and hyperparameter tuning.
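The validation carve-out can be sketched as follows. This is a minimal example on a made-up 100-row file index, assuming pandas is installed. The column names and the toy DataFrame are hypothetical and are not taken from preprocessing.py.

```python
import pandas as pd

# Hypothetical file index; in the real pipeline this would be built from Training/.
train_df = pd.DataFrame(
    {
        "filepath": [f"Training/img_{i:04d}.jpg" for i in range(100)],
        "label": [i % 2 for i in range(100)],
    }
)

# Sample 20% of the Training/ rows for validation with a fixed seed.
val_df = train_df.sample(frac=0.2, random_state=42)

# Everything that was not sampled becomes the final training set.
final_train_df = train_df.drop(val_df.index)

print(len(final_train_df), len(val_df))
```

Dropping by index keeps the two sets disjoint, and the fixed random_state makes the same rows land in the validation set on every run.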

| Split | Source | Approx. Images | Augmentation | Shuffled |
|---|---|---|---|---|
| Training | 80% of Training/ folder | ~4,480 | ✅ Random flip, brightness, contrast | ✅ |
| Validation | 20% of Training/ folder | ~1,120 | ❌ | ❌ |
| Test | Entire Testing/ folder | ~1,600 | ❌ | ❌ |

Reproducibility: The validation split is deterministic, since random_state=42 selects the same images on every run. Global seeds for tensorflow, numpy, and random (all set to 42) further keep the data ordering reproducible.

Data is version-controlled using DVC. The data.dvc pointer file tracks the exact dataset hash used for each training run.

- Bayesian Hyperparameter Optimization: 10-trial search over learning rate, dropout, dense units, L2 regularization, and backbone unfreezing depth using KerasTuner.

- Experiment Tracking and Model Registry: Every trial and the final optimized run are logged to MLflow with dataset fingerprints (SHA-256), parameters, and evaluation artifacts.

- Data Version Control: DVC anchors each model version to a specific dataset hash, enabling full lineage tracking.

- Cloud-Native Inference: Containerized FastAPI service on AWS ECS Fargate with automatic S3-to-local model fallback on cold starts.

- Structured Observability: JSON-formatted request and inference logs via structlog, piped to CloudWatch in production.

Evaluated on the hold-out test set (1,600 images). Threshold optimized via precision-recall curve analysis in ModelEvaluator.

Accuracy: 98.19%

| Metric | Class 0 (No Tumor) | Class 1 (Tumor) |
|---|---|---|
| Precision | 0.9744 | 0.9843 |
| Recall | 0.9525 | 0.9917 |
| F1-Score | 0.9633 | 0.9880 |

Macro F1: 0.9756 | Weighted F1: 0.9818

| | Predicted Negative | Predicted Positive |
|---|---|---|
| Actual Negative | 381 (TN) | 19 (FP) |
| Actual Positive | 10 (FN) | 1,190 (TP) |

The false negative rate of 0.83% (10 missed tumors out of 1,200 tumor scans) is the most important figure for a diagnostic support system, where missed tumors carry the highest risk.
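The reported figures can be re-derived from the four confusion matrix counts. The short sketch below does that arithmetic in plain Python. The function name and dictionary keys are illustrative and are not part of ModelEvaluator.

```python
def binary_metrics(tn: int, fp: int, fn: int, tp: int) -> dict:
    """Derive per-class precision, recall, and F1 from confusion matrix counts."""
    precision_tumor = tp / (tp + fp)
    recall_tumor = tp / (tp + fn)
    precision_no_tumor = tn / (tn + fn)
    recall_no_tumor = tn / (tn + fp)

    def f1(precision: float, recall: float) -> float:
        return 2 * precision * recall / (precision + recall)

    return {
        "accuracy": (tp + tn) / (tn + fp + fn + tp),
        "precision_tumor": precision_tumor,
        "recall_tumor": recall_tumor,
        "f1_tumor": f1(precision_tumor, recall_tumor),
        "precision_no_tumor": precision_no_tumor,
        "recall_no_tumor": recall_no_tumor,
        "f1_no_tumor": f1(precision_no_tumor, recall_no_tumor),
        "false_negative_rate": fn / (fn + tp),
    }


# Counts taken from the confusion matrix reported above.
metrics = binary_metrics(tn=381, fp=19, fn=10, tp=1190)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```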

The model uses an EfficientNetV2-S backbone with a custom classification head. Training operates under mixed-precision (float16) to optimize memory and throughput. Convergence is managed through EarlyStopping (patience=5, monitoring val_auc) and ModelCheckpoint (save best only).

Fine-tuning runs for up to 20 epochs with a learning rate decayed to 1/10th of the Bayesian-selected optimum. Inverse-frequency class weighting compensates for the 3:1 tumor-to-normal imbalance.
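To make the class weighting concrete, here is a minimal sketch of one common inverse-frequency formula, total / (n_classes * class_count). Whether train.py uses exactly this normalization is an assumption, and the counts below are illustrative numbers that merely follow the 3:1 ratio.

```python
def inverse_frequency_weights(class_counts: dict[int, int]) -> dict[int, float]:
    """Weight each class by total / (n_classes * count), so rarer classes weigh more."""
    total = sum(class_counts.values())
    n_classes = len(class_counts)
    return {label: total / (n_classes * count) for label, count in class_counts.items()}


# Illustrative counts with a 3:1 tumor-to-normal ratio (not the real split sizes).
weights = inverse_frequency_weights({0: 1000, 1: 3000})
print(weights)
```

A dictionary of this shape can be passed to Keras through the class_weight argument of model.fit, so that errors on the minority No Tumor class contribute more to the loss.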

The search was executed via keras_tuner.BayesianOptimization with val_auc as the maximization objective. Ten trials were evaluated, each running for up to 5 epochs with early stopping (patience=2).

Best Trial: 09

| Parameter | Search Range | Optimal Value |
|---|---|---|
| Learning Rate | 1e-5 to 1e-2 (log) | 9.77e-05 |
| Unfrozen Backbone Layers | 0 to 50 (step 10) | 30 |
| Dense Units | 128 to 512 (step 128) | 256 |
| Dropout Rate | 0.2 to 0.7 (step 0.1) | 0.4 |
| L2 Regularization | 1e-5 to 1e-2 (log) | 0.00224 |

Trial 09 Validation Metrics:

- Accuracy: 99.11%

- AUC: 0.9998

- Best Epoch: 4

Unfreezing 30 backbone layers, combined with moderate regularization (dropout 0.4, L2 0.00224), appears to have let the model adapt ImageNet features to the MRI domain without overfitting. The configuration is stored in artifacts/tuner/mri_brain_tuner/trial_09/trial.json.
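For orientation, the search ranges listed in the table could be declared roughly as below. This is a sketch that only assumes the KerasTuner hp.Float and hp.Int interface. The hyperparameter names (learning_rate, unfreeze_layers, dense_units, dropout, l2) and the function itself are hypothetical and may not match tuner.py.

```python
def define_search_space(hp):
    """Declare the five tuned hyperparameters on a KerasTuner HyperParameters object."""
    return {
        # Log-scale sampling spreads trials evenly across orders of magnitude.
        "learning_rate": hp.Float(
            "learning_rate", min_value=1e-5, max_value=1e-2, sampling="log"
        ),
        # Number of backbone layers to unfreeze: 0, 10, ..., 50.
        "unfreeze_layers": hp.Int("unfreeze_layers", min_value=0, max_value=50, step=10),
        # Width of the dense layer in the classification head.
        "dense_units": hp.Int("dense_units", min_value=128, max_value=512, step=128),
        "dropout": hp.Float("dropout", min_value=0.2, max_value=0.7, step=0.1),
        "l2": hp.Float("l2", min_value=1e-5, max_value=1e-2, sampling="log"),
    }
```

In a real tuner, a model-building function would call this with the hp object that keras_tuner.BayesianOptimization passes in, and then use the returned values to construct and compile the model.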

All training runs are tracked in the local MLflow server under the brain-tumor-classification experiment. Each tuner trial logs its hyperparameters and val_auc as a separate run. The final optimized model is archived with its dataset SHA-256 hash, evaluation metrics, confusion matrix JSON, and classification report.
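One plausible way to compute a dataset SHA-256 fingerprint like the one logged with each run is sketched below, using only the standard library. The function name and the choice to hash relative paths together with file bytes are assumptions, and the helper in src/common/utils.py may work differently.

```python
import hashlib
from pathlib import Path


def dataset_fingerprint(root: str) -> str:
    """Return a SHA-256 hex digest over the relative paths and bytes of all files under root."""
    digest = hashlib.sha256()
    root_path = Path(root)
    # Sort so the digest does not depend on filesystem traversal order.
    for path in sorted(p for p in root_path.rglob("*") if p.is_file()):
        digest.update(path.relative_to(root_path).as_posix().encode("utf-8"))
        with path.open("rb") as handle:
            # Read in 1 MiB chunks to keep memory use flat on large images.
            for chunk in iter(lambda: handle.read(1 << 20), b""):
                digest.update(chunk)
    return digest.hexdigest()
```

The resulting string could then be attached to a run with mlflow.log_param under a key such as dataset_sha256 (the key name is hypothetical), which ties the logged metrics to one exact dataset state.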

graph TD

A["Kaggle MRI Dataset"] --> B["Data Pipeline"]

B --> C["Training Pipeline + Bayesian Tuning"]

C --> D["artifacts/best_model.keras"]

D --> E["upload_model.py"]

E --> F["AWS S3 Model Registry"]

F --> G["GitHub Actions"]

G --> H["Docker Build"]

H --> I["AWS ECR"]

I --> J["AWS ECS Fargate"]

J --> K["FastAPI /predict"]

L["Streamlit Client"] --> K

Detailed component-level architecture is documented in docs/architecture_diagram.mmd.

The InferencePipeline class (src/inference_pipeline/infer.py) manages the complete prediction lifecycle:

- Model Loading: On startup, InferencePipeline loads best_model.keras from the local filesystem. If the file is absent (ECS cold start), it automatically downloads from S3 using boto3.

- Preprocessing: Raw image bytes are decoded, resized to 224x224, and scaled using tf.keras.applications.efficientnet_v2.preprocess_input to match the training pipeline exactly.

- Prediction: Single forward pass through the loaded Keras model. The sigmoid output is compared against the configurable confidence_threshold (default: 0.5).

- Response: Standardized JSON containing label, probability, and class_idx.
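The cold-start fallback and the thresholding step can be sketched without TensorFlow or AWS access. Everything in this example is illustrative: the function names, the injected download callable, the label strings, and the use of >= at the threshold are assumptions and are not taken from infer.py.

```python
from pathlib import Path
from typing import Callable


def ensure_local_model(model_path: str, download: Callable[[str], None]) -> str:
    """Return model_path, calling download(model_path) first if the file is missing."""
    if not Path(model_path).exists():
        # Cold start: fetch the model before the first prediction.
        download(model_path)
    return model_path


def build_response(probability: float, threshold: float = 0.5) -> dict:
    """Turn a sigmoid output into the label / probability / class_idx payload."""
    class_idx = int(probability >= threshold)
    return {
        "label": "Tumor" if class_idx == 1 else "No Tumor",
        "probability": round(float(probability), 4),
        "class_idx": class_idx,
    }


print(build_response(0.93))
print(build_response(0.12))
```

In production, the download callable could wrap the boto3 S3 client's download_file(bucket, key, filename) method. Injecting it as a parameter keeps the fallback logic testable without network access.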

| Stage | Tool | Implementation |
|---|---|---|
| Data Versioning | DVC | data.dvc tracks dataset hash; dvc pull restores exact training data |
| Experiment Tracking | MLflow | Per-trial params, metrics, and model artifacts logged and registered |
| Model Storage | AWS S3 | upload_model.py pushes best_model.keras to the central registry |
| Containerization | Docker | Multi-dependency python:3.12-slim image built from infra/Dockerfile |
| Deployment | ECS Fargate | Zero-downtime rolling update triggered by GitHub Actions |
| Monitoring | structlog + CloudWatch | JSON logs streamed via awslogs driver from ECS tasks |

.

├── apps/

│ └── streamlit_app.py # Local inference test client

├── artifacts/

│ ├── eval/ # confusion_matrix.json, report.json

│ ├── plots/ # cm.png, training_curves.png

│ ├── tuner/ # KerasTuner trial configs and weights

│ └── best_model.keras # Final trained model checkpoint

├── configs/

│ └── model_config.yaml # Model architecture and data mapping config

├── docs/

│ ├── images/ # README screenshots and diagrams

│ ├── architecture_diagram.mmd # Mermaid system architecture

│ ├── setup_guide.md # Environment setup instructions

│ └── troubleshooting.md # Real-world debugging reference

├── infra/

│ ├── Dockerfile # Production inference container

│ ├── provision.sh # One-time AWS resource provisioning

│ └── task_definition.json # ECS Fargate task configuration

├── src/

│ ├── api/

│ │ ├── app.py # FastAPI application (/health, /predict)

│ │ └── middleware.py # Request/response logging middleware

│ ├── common/

│ │ ├── config.py # Pydantic settings loaded from .env + YAML

│ │ ├── logging.py # structlog configuration

│ │ └── utils.py # Hashing and DVC utilities

│ ├── data_pipeline/

│ │ ├── data_ingestion.py # Kaggle API dataset download

│ │ ├── data_validation.py # 4-class to binary label mapping

│ │ └── preprocessing.py # tf.data pipeline with augmentation

│ ├── inference_pipeline/

│ │ └── infer.py # Model loading (S3 fallback) + prediction

│ └── training_pipeline/

│ ├── build_model.py # EfficientNetV2-S architecture construction

│ ├── evaluate.py # Threshold optimization + metrics + artifacts

│ ├── mlflow_tracking.py # MLflow logging service

│ ├── train.py # Master orchestrator (full lifecycle)

│ └── tuner.py # Bayesian hyperparameter search

├── tests/

│ ├── test_api.py # FastAPI endpoint integration tests

│ └── test_model.py # Model architecture unit tests

├── .github/workflows/

│ └── deploy.yml # CI/CD pipeline (ECR + ECS deployment)

├── upload_model.py # S3 model upload utility

├── data.dvc # DVC dataset pointer

├── requirements.txt # Full dependency manifest

└── .env.example # Environment variable template

| Component | Technology | Rationale |
|---|---|---|
| Model | TensorFlow / Keras | Mature transfer learning ecosystem with production-grade serving support |
| Backbone | EfficientNetV2-S | Parameter-efficient architecture with strong ImageNet pretraining |
| HP Search | KerasTuner (Bayesian) | Sample-efficient search compared to random/grid, converges in fewer trials |
| Tracking | MLflow | Centralized experiment tracking with model registry and artifact storage |
| Data Versioning | DVC | Handles large binary datasets that cannot be stored in Git |
| API | FastAPI | Low-latency async inference with automatic OpenAPI documentation |
| Runtime | Docker | Reproducible environment from WSL2 development to AWS production |
| Model Store | AWS S3 | Durable storage for multi-hundred MB model artifacts |
| Container Registry | AWS ECR | Private registry with high-speed pulls within the AWS VPC |
| Compute | AWS ECS Fargate | Serverless containers eliminating instance management overhead |
| CI/CD | GitHub Actions | Event-driven automation on push to main |
| Testing UI | Streamlit | Lightweight local client for manual verification of deployed API |
| Logging | structlog | Machine-readable JSON output compatible with CloudWatch and ELK |

Full setup instructions: docs/setup_guide.md

# Create and activate environment

python -m venv venv_deploy

source venv_deploy/bin/activate

# Install dependencies

pip install -r requirements.txt

# Configure secrets

cp .env.example .env

# Fill in AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY, KAGGLE_USERNAME, KAGGLE_KEY

# Run training pipeline

python -m src.training_pipeline.train

# Run Streamlit client

streamlit run apps/streamlit_app.py

The deployment strategy uses rolling updates on AWS ECS Fargate. Infrastructure is provisioned once via infra/provision.sh, which creates the S3 bucket, ECR repository, ECS cluster, task definition, and service.

Model upload: python upload_model.py --> S3

Docker build: infra/Dockerfile --> python:3.12-slim image

Image registry: docker push --> AWS ECR

Task update: aws ecs update-service --> ECS Fargate (zero-downtime)

The Dockerfile is intentionally minimal: it copies only src/, configs/, and artifacts/best_model.keras into the production image.

The .github/workflows/deploy.yml workflow automates deployment on every push to main:

- Checkout repository.

- Configure AWS credentials from GitHub Secrets.

- Login to Amazon ECR.

- Download best_model.keras from S3.

- Build Docker image tagged with commit SHA and latest.

- Push to ECR.

- Register new ECS task definition with updated image URI.

- Update ECS service with --force-new-deployment.

- Wait for services-stable confirmation.

- Output live public IP endpoint.

Tests are located in tests/ and executed via pytest.

| Test File | Coverage | Assertions |
|---|---|---|
| test_model.py | Model architecture | Output shape is (None, 1), optimizer is AdamW, backbone is frozen when unfreeze_layers=0 |
| test_api.py | API endpoints | /health returns 200 with status and model_loaded fields; /predict returns 422 without file upload |

Logging is configured in src/common/logging.py using structlog. The system automatically selects JSON output in headless environments (production) and color-coded console output in interactive terminals.

The FastAPI middleware in src/api/middleware.py logs every request's method, path, and response status code. In AWS, these logs are routed to CloudWatch via the awslogs driver configured in infra/task_definition.json.

Reproducibility is enforced at three levels:

- Data: data.dvc locks the dataset to a specific MD5 hash. Running dvc pull restores the exact training set.

- Randomness: Global seeds are set for tensorflow, numpy, and random (seed=42) in both train.py and tuner.py.

- Configuration: All hyperparameters and data mappings are externalized in configs/model_config.yaml and .env.
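A seeding helper along the following lines would cover the three libraries mentioned. It is a sketch and not the code from train.py or tuner.py. The function name is hypothetical, and the numpy and tensorflow imports are made optional so the example still runs where those packages are not installed.

```python
import random


def set_global_seeds(seed: int = 42) -> None:
    """Seed Python's random module, plus numpy and tensorflow when they are available."""
    random.seed(seed)
    try:
        import numpy as np

        np.random.seed(seed)
    except ImportError:
        pass  # numpy not installed; nothing to seed
    try:
        import tensorflow as tf

        tf.random.set_seed(seed)
    except ImportError:
        pass  # tensorflow not installed; nothing to seed


set_global_seeds(42)
first_draw = random.random()
set_global_seeds(42)
second_draw = random.random()
print(first_draw == second_draw)
```

Seeding fixes the pseudo-random sequences these libraries produce. Some GPU kernels can still be nondeterministic, so bit-identical training results are not guaranteed by seeding alone.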

- No credentials in source control: .env is excluded via .gitignore. Only .env.example (with placeholder values) is committed.

- Runtime injection: pydantic-settings loads credentials from .env locally and from environment variables in ECS.

- CI/CD secrets: AWS credentials are stored in GitHub Secrets and injected into the workflow at runtime.

- Network isolation: ECS tasks run in the default VPC with public IP assignment for inference endpoints.

- Binary scope: The current model classifies Tumor vs. No Tumor only. It does not distinguish tumor subtypes.

- CPU inference: The production deployment uses CPU-only TensorFlow. GPU acceleration would require migrating to SageMaker or GPU-enabled ECS instances.

- No model monitoring: There is no automated drift detection or performance degradation alerting in the current deployment.

- Single model version: The S3 registry stores only latest; there is no A/B testing or canary deployment infrastructure.

- Grad-CAM integration for visual model explainability on MRI scans.

- Multi-class expansion to distinguish glioma, meningioma, and pituitary tumors.

- Automated data drift monitoring and model retraining triggers.

- A/B deployment support with traffic splitting between model versions.

- SageMaker migration for GPU-accelerated batch inference.

- Dataset: Brain Tumor MRI Dataset (Kaggle)

- Project Demo: YouTube Walkthrough

- Troubleshooting: docs/troubleshooting.md

- Setup Guide: docs/setup_guide.md

- Architecture: docs/architecture_diagram.mmd

This project is licensed under the MIT License.