Generative Pre-trained Transformer 1 (GPT-1)

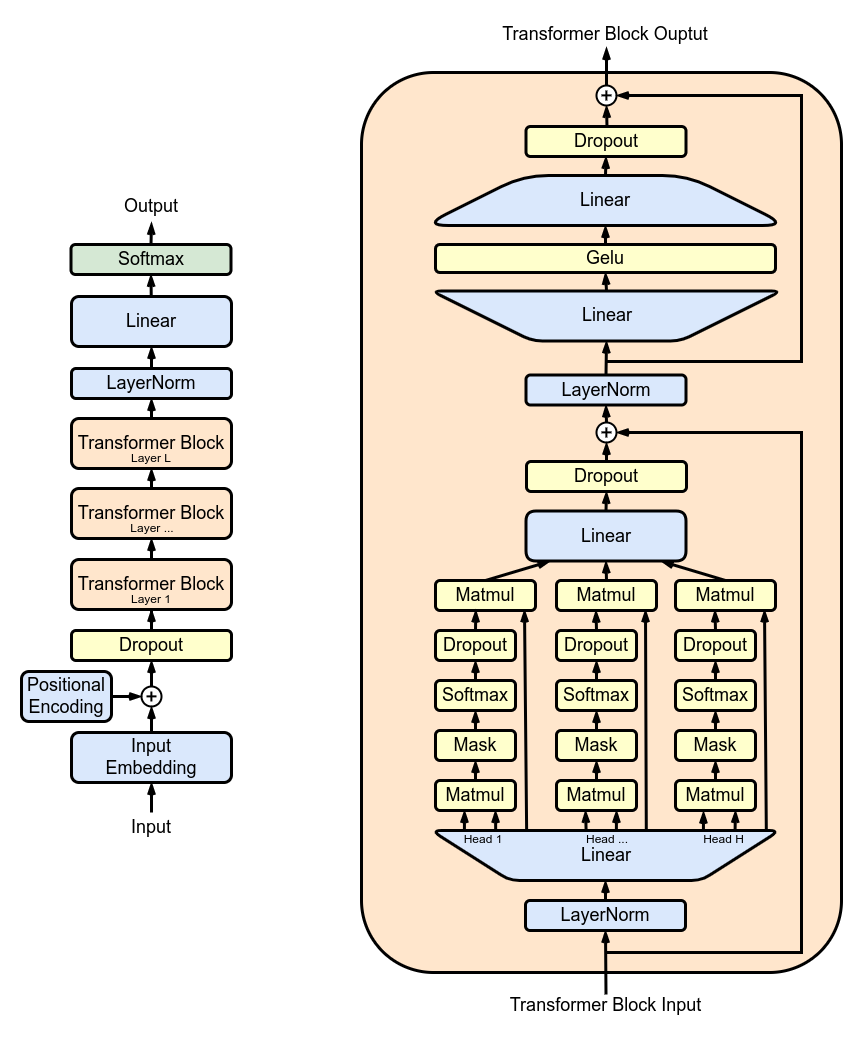

The GPT-1 architecture is a twelve-layer decoder-only transformer with twelve masked self-attention heads per layer, each with 64-dimensional states (768 dimensions in total). Training used the Adam optimizer rather than plain stochastic gradient descent. The learning rate was increased linearly from zero over the first 2,000 updates to a peak of 2.5 × 10⁻⁴ and then annealed to 0 using a cosine schedule.
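The hyperparameters above can be collected in a small Python sketch. It is not taken from this repository's code: `GPT1Config`, `causal_mask`, and `learning_rate` are hypothetical names chosen for illustration. The text does not state the total number of updates, so `total_steps` is an assumption the caller must supply.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GPT1Config:
    # Hypothetical config object; values come from the description above.
    n_layer: int = 12   # decoder blocks
    n_head: int = 12    # masked self-attention heads per block
    head_dim: int = 64  # state size per head

    @property
    def d_model(self) -> int:
        # Hidden size is all heads concatenated: 12 * 64 = 768.
        return self.n_head * self.head_dim


def causal_mask(n: int) -> List[List[bool]]:
    # "Masked" attention: position i may attend only to positions j <= i,
    # so the model never sees future tokens during training.
    return [[j <= i for j in range(n)] for i in range(n)]


def learning_rate(step: int, total_steps: int,
                  peak_lr: float = 2.5e-4, warmup_steps: int = 2000) -> float:
    """Linear warmup from 0 to peak_lr, then cosine annealing to 0.

    total_steps is an assumed value supplied by the caller.
    """
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    # Fraction of the post-warmup phase completed, clamped to [0, 1].
    span = max(1, total_steps - warmup_steps)
    progress = min(1.0, (step - warmup_steps) / span)
    return 0.5 * peak_lr * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    print(GPT1Config().d_model)  # 768
    print(causal_mask(3))
    # 100_000 total updates is an arbitrary assumption for this demo.
    for s in (0, 1000, 2000, 51_000, 100_000):
        print(s, learning_rate(s, total_steps=100_000))
```

The schedule peaks exactly at update 2,000 and reaches 0 at `total_steps`; halfway through the cosine phase it is back at half the peak rate.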

GPT-1 was trained on the BooksCorpus dataset, which contains over 7,000 unique unpublished books totaling nearly 800 million words. The corpus spans a wide range of genres, vocabulary, and narrative styles, and because it contains long stretches of contiguous text, it lets the model learn to condition on long-range context rather than on short, isolated sentences.
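Because the corpus is consumed as long contiguous text, a pretraining pipeline has to cut each tokenized book into fixed-length windows for next-token prediction. The sketch below assumes the text is already tokenized into integer IDs (the tokenizer is out of scope) and defaults to 512 tokens, GPT-1's context length. `make_lm_windows` is a hypothetical helper, and non-overlapping windows are a simplification: the original paper sampled random contiguous sequences.

```python
from typing import List, Tuple


def make_lm_windows(token_ids: List[int],
                    context_len: int = 512) -> List[Tuple[List[int], List[int]]]:
    """Split one contiguous token stream into (input, target) pairs.

    Targets are the inputs shifted left by one position, so each input
    token is trained to predict the token that follows it.
    """
    windows = []
    # Each window needs context_len inputs plus one extra token for the
    # final target; trailing tokens that cannot fill a window are dropped.
    for start in range(0, len(token_ids) - context_len, context_len):
        chunk = token_ids[start:start + context_len + 1]
        windows.append((chunk[:-1], chunk[1:]))
    return windows


if __name__ == "__main__":
    # Toy stream of 10 token IDs with a 4-token context.
    for inputs, targets in make_lm_windows(list(range(10)), context_len=4):
        print(inputs, "->", targets)
```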

After pretraining, GPT-1 was fine-tuned on a range of natural language understanding tasks, including:

- Natural language inference

- Question answering and commonsense reasoning

- Semantic similarity

- Text classification

The original paper reports that this approach improved on the state of the art in 9 of the 12 tasks studied, with the largest gains on tasks that require reasoning over longer contexts, such as commonsense reasoning (Story Cloze Test) and question answering (RACE).
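For fine-tuning, the GPT-1 paper avoids task-specific architectures by converting structured inputs into token sequences the pretrained model can process, framed by start and extract tokens with a delimiter between segments. The sketch below builds such sequences for entailment, similarity, and multiple-choice question answering. The special-token strings and function names are hypothetical placeholders, and whitespace splitting stands in for the real subword tokenizer.

```python
from typing import List

# Hypothetical special tokens; this repository may use different strings.
START, DELIM, EXTRACT = "<s>", "<$>", "<e>"


def entailment_input(premise: str, hypothesis: str) -> List[str]:
    # One sequence: premise and hypothesis separated by a delimiter.
    return [START, *premise.split(), DELIM, *hypothesis.split(), EXTRACT]


def similarity_inputs(a: str, b: str) -> List[List[str]]:
    # A sentence pair has no inherent order, so both orderings are built;
    # the model's final representations for the two are then combined.
    return [entailment_input(a, b), entailment_input(b, a)]


def multiple_choice_inputs(context: str, question: str,
                           answers: List[str]) -> List[List[str]]:
    # One sequence per candidate answer; a softmax over the per-candidate
    # scores selects the answer.
    prefix = [START, *context.split(), *question.split(), DELIM]
    return [prefix + [*ans.split(), EXTRACT] for ans in answers]


if __name__ == "__main__":
    print(entailment_input("a man is sleeping", "a person rests"))
    for seq in multiple_choice_inputs("the sky is blue", "what color ?", ["blue", "red"]):
        print(seq)
```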

This repository provides an implementation of GPT-1 along with supporting resources. You can use the model for a variety of NLP tasks and adapt it to your own requirements and datasets.
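When adapting the model to a labeled dataset, the GPT-1 paper keeps language modeling as an auxiliary objective during fine-tuning, combining the losses as L3 = L2 + λ·L1 with λ = 0.5, where L2 is the supervised task objective and L1 the language-modeling objective. Below is a minimal pure-Python sketch of that combined loss. The function names are hypothetical, and averaging the language-modeling term over positions (rather than summing it) is an assumption of this sketch.

```python
import math
from typing import List, Sequence


def log_softmax(logits: Sequence[float]) -> List[float]:
    # Subtract the max before exponentiating for numerical stability.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def nll(logits: Sequence[float], target: int) -> float:
    # Negative log-likelihood of the target index under softmax(logits).
    return -log_softmax(logits)[target]


def finetune_loss(task_logits: Sequence[float], label: int,
                  lm_logits: List[Sequence[float]], lm_targets: List[int],
                  lam: float = 0.5) -> float:
    """Task loss plus lam times the auxiliary language-modeling loss."""
    task_loss = nll(task_logits, label)
    # Mean next-token loss over all positions in the input sequence.
    lm_loss = sum(nll(l, t) for l, t in zip(lm_logits, lm_targets)) / len(lm_targets)
    return task_loss + lam * lm_loss


if __name__ == "__main__":
    # Uniform logits give log(2) for each term, so the total is 1.5 * log(2).
    print(finetune_loss([0.0, 0.0], 0, [[0.0, 0.0]], [1]))
```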

Additional documentation is available in MODEL.md, DATASET.md, and PROGRAM_FLOW.md.

See SETUP.md for environment setup and training instructions. Once the dependencies are installed, you can pretrain the model with:

```bash
python train.py -c conf/pretrain.yml
```

After training, you can generate text with:

```bash
python generate.py -l 100
```

Details about necessary prerequisites, including software and hardware requirements.

Step-by-step guide to installing and configuring GPT-1 on your system.

We welcome contributions from the community. Please refer to CONTRIBUTING.md for guidelines on how to contribute.

For the versions available, see the tags on this repository.

- [Your Name] - Mind-Interfaces/GPT-1/

- Akshat Pandey - PyTorch implementation of GPT-1

- Yu Guo - A simple reproduction of the GPT-1 architecture (GPT-1 结构的简单复现)

- Sosuke Kobayashi - Homemade BookCorpus

- Thanks to everyone whose resources were used in this project

This project is licensed under the MIT License. See the LICENSE file for details.