diff --git a/README.md b/README.md

index 84d625f..4b80899 100644

--- a/README.md

+++ b/README.md

@@ -1,26 +1,40 @@

# Malicious Containers Workshop

-This repository contains the slides and accompanying lab materials for the workshops delivered at DefCon and other conferences. The most recent being [CactusCon](CactusCon_24/README.md). Each conferences materials will be located in their respective sub-folders.

+A hands-on workshop covering Kubernetes and container security — from offensive techniques to detection and response. Learn to build, deploy, and detect malicious containers in a safe lab environment.

+## Repository Structure

-## Past Workshops

+- **`current/`** — The latest version of the workshop materials, labs, and infrastructure setup. Start here.

+- **`archived/`** — Previous conference-specific versions (DEF CON 30, DEF CON 31, BSides Charleston, CactusCon, ISSA Triad). Preserved for reference.

-The repository also contains past versions of the course, such as the original [Workshop delivered at DEFCON 30 - Creating and Uncovering Malicious Containers](https://forum.defcon.org/node/241774), [DEFCON 31 - Creating and uncovering malicious containers: Redux](https://forum.defcon.org/node/246020) and iterations delivered at [BSides Charleston 22](https://bsideschs.ticketbud.com/ws-creating), [BSides Charleston 23](https://bsideschs.ticketbud.com/ws-malkub), [CactusCon](https://www.cactuscon.com/cc12-schedule) and [ISSA Triad 2023 Security Summit](https://triadnc.issa.org/). As well as any versions to be delivered in the future as we continue to update and improve it or offer it at other events.

+## Getting Started

+See [`current/README.md`](current/README.md) for an overview and [`current/lab-setup.md`](current/lab-setup.md) for environment setup instructions.

+

+## Workshop Modules

+

+1. **Docker Fundamentals** — Images, containers, layers, process hierarchy

+2. **Exploring Containers** — Image history, reverse engineering, extracting artifacts

+3. **Offensive Docker Techniques** — Data exfiltration, socket hijacking, privilege escalation

+4. **Container IR** — Image forensics CTF, cleanup

+5. **Kubernetes 101** — Architecture, components, networking

+6. **Kubernetes Security** — RBAC abuse, privilege escalation, golden ticket attacks, evil pods

+7. **Supply Chain Security** — Image signing (cosign/Sigstore), SBOMs (syft), vulnerability scanning (grype), provenance

+8. **Modern Runtime Security** — Tracee, Falco, Tetragon — eBPF-based detection and comparison

+9. **Cloud-Native Attacks** — IMDS exploitation, workload identity abuse, network policy bypass

## Presenters

### Instructor: David Mitchell

-

->  \

-> https://github.com/digital-shokunin/digital-shokunin/README.md

-

-### Instructor: Adrian Wood

-

->  \

-> https://keybase.io/threlfall

+> [@digish0](https://twitter.com/digish0) | https://digital-shokunin.net

+### Instructor: Adrian Wood

+> [@whitehacksec](https://twitter.com/WHITEHACKSEC) | https://5stars217.github.io

## Our lockpick/hacker(space) group

-[](https://github.com/lockFALE/)

+[](https://github.com/lockFALE/)

+

+## License

+

+See [LICENSE](LICENSE).

diff --git a/BSides_Charleston_23/BSidesCHS23 Malicious Containers Workshop1.1 with notes.pdf b/archived/BSides_Charleston_23/BSidesCHS23 Malicious Containers Workshop1.1 with notes.pdf

similarity index 100%

rename from BSides_Charleston_23/BSidesCHS23 Malicious Containers Workshop1.1 with notes.pdf

rename to archived/BSides_Charleston_23/BSidesCHS23 Malicious Containers Workshop1.1 with notes.pdf

diff --git a/BSides_Charleston_23/BSidesCHS23 Malicious Containers Workshop1.1.pdf b/archived/BSides_Charleston_23/BSidesCHS23 Malicious Containers Workshop1.1.pdf

similarity index 100%

rename from BSides_Charleston_23/BSidesCHS23 Malicious Containers Workshop1.1.pdf

rename to archived/BSides_Charleston_23/BSidesCHS23 Malicious Containers Workshop1.1.pdf

diff --git a/BSides_Charleston_23/Lab Setup.md b/archived/BSides_Charleston_23/Lab Setup.md

similarity index 100%

rename from BSides_Charleston_23/Lab Setup.md

rename to archived/BSides_Charleston_23/Lab Setup.md

diff --git a/BSides_Charleston_23/README.md b/archived/BSides_Charleston_23/README.md

similarity index 100%

rename from BSides_Charleston_23/README.md

rename to archived/BSides_Charleston_23/README.md

diff --git a/BSides_Charleston_23/cheatsheet.md b/archived/BSides_Charleston_23/cheatsheet.md

similarity index 100%

rename from BSides_Charleston_23/cheatsheet.md

rename to archived/BSides_Charleston_23/cheatsheet.md

diff --git a/BSides_Charleston_23/grafana/tracee-dashboard.json b/archived/BSides_Charleston_23/grafana/tracee-dashboard.json

similarity index 100%

rename from BSides_Charleston_23/grafana/tracee-dashboard.json

rename to archived/BSides_Charleston_23/grafana/tracee-dashboard.json

diff --git a/BSides_Charleston_23/helm-config/grafana-config.yaml b/archived/BSides_Charleston_23/helm-config/grafana-config.yaml

similarity index 100%

rename from BSides_Charleston_23/helm-config/grafana-config.yaml

rename to archived/BSides_Charleston_23/helm-config/grafana-config.yaml

diff --git a/BSides_Charleston_23/helm-config/promtail-config.yaml b/archived/BSides_Charleston_23/helm-config/promtail-config.yaml

similarity index 100%

rename from BSides_Charleston_23/helm-config/promtail-config.yaml

rename to archived/BSides_Charleston_23/helm-config/promtail-config.yaml

diff --git a/BSides_Charleston_23/image.png b/archived/BSides_Charleston_23/image.png

similarity index 100%

rename from BSides_Charleston_23/image.png

rename to archived/BSides_Charleston_23/image.png

diff --git a/BSides_Charleston_23/k8s-ansible-setup.yml b/archived/BSides_Charleston_23/k8s-ansible-setup.yml

similarity index 100%

rename from BSides_Charleston_23/k8s-ansible-setup.yml

rename to archived/BSides_Charleston_23/k8s-ansible-setup.yml

diff --git a/BSides_Charleston_23/k8s-manifests/attacker-pod.yaml b/archived/BSides_Charleston_23/k8s-manifests/attacker-pod.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/attacker-pod.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/attacker-pod.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/clusterrolebindings.yaml b/archived/BSides_Charleston_23/k8s-manifests/clusterrolebindings.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/clusterrolebindings.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/clusterrolebindings.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/clusterroles.yaml b/archived/BSides_Charleston_23/k8s-manifests/clusterroles.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/clusterroles.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/clusterroles.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/configmaps.yaml b/archived/BSides_Charleston_23/k8s-manifests/configmaps.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/configmaps.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/configmaps.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/daemonsets.yaml b/archived/BSides_Charleston_23/k8s-manifests/daemonsets.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/daemonsets.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/daemonsets.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/deployments.yaml b/archived/BSides_Charleston_23/k8s-manifests/deployments.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/deployments.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/deployments.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/evilpod.yaml b/archived/BSides_Charleston_23/k8s-manifests/evilpod.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/evilpod.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/evilpod.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/ingress.yaml b/archived/BSides_Charleston_23/k8s-manifests/ingress.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/ingress.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/ingress.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/namespaces.yaml b/archived/BSides_Charleston_23/k8s-manifests/namespaces.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/namespaces.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/namespaces.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/nothingallowedpod.yaml b/archived/BSides_Charleston_23/k8s-manifests/nothingallowedpod.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/nothingallowedpod.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/nothingallowedpod.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/pods.yaml b/archived/BSides_Charleston_23/k8s-manifests/pods.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/pods.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/pods.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/rolebindings.yaml b/archived/BSides_Charleston_23/k8s-manifests/rolebindings.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/rolebindings.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/rolebindings.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/roles.yaml b/archived/BSides_Charleston_23/k8s-manifests/roles.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/roles.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/roles.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/secrets.yaml b/archived/BSides_Charleston_23/k8s-manifests/secrets.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/secrets.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/secrets.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/serviceaccounts.yaml b/archived/BSides_Charleston_23/k8s-manifests/serviceaccounts.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/serviceaccounts.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/serviceaccounts.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/services.yaml b/archived/BSides_Charleston_23/k8s-manifests/services.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/services.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/services.yaml

diff --git a/BSides_Charleston_23/kind-lab-config.yaml b/archived/BSides_Charleston_23/kind-lab-config.yaml

similarity index 100%

rename from BSides_Charleston_23/kind-lab-config.yaml

rename to archived/BSides_Charleston_23/kind-lab-config.yaml

diff --git a/BSides_Charleston_23/lab-ansible-setup.yml b/archived/BSides_Charleston_23/lab-ansible-setup.yml

similarity index 100%

rename from BSides_Charleston_23/lab-ansible-setup.yml

rename to archived/BSides_Charleston_23/lab-ansible-setup.yml

diff --git a/BSides_Charleston_23/labs_walk_thru.md b/archived/BSides_Charleston_23/labs_walk_thru.md

similarity index 100%

rename from BSides_Charleston_23/labs_walk_thru.md

rename to archived/BSides_Charleston_23/labs_walk_thru.md

diff --git a/BSides_Charleston_23/scripts/reverse_shell_handler.py b/archived/BSides_Charleston_23/scripts/reverse_shell_handler.py

similarity index 100%

rename from BSides_Charleston_23/scripts/reverse_shell_handler.py

rename to archived/BSides_Charleston_23/scripts/reverse_shell_handler.py

diff --git a/Bsides_Charleston_22/Bsides_Workshop_Pre_Release_1.3.pptx.pdf b/archived/Bsides_Charleston_22/Bsides_Workshop_Pre_Release_1.3.pptx.pdf

similarity index 100%

rename from Bsides_Charleston_22/Bsides_Workshop_Pre_Release_1.3.pptx.pdf

rename to archived/Bsides_Charleston_22/Bsides_Workshop_Pre_Release_1.3.pptx.pdf

diff --git a/Bsides_Charleston_22/Incident Response in containerized and ephemeral environments.pdf b/archived/Bsides_Charleston_22/Incident Response in containerized and ephemeral environments.pdf

similarity index 100%

rename from Bsides_Charleston_22/Incident Response in containerized and ephemeral environments.pdf

rename to archived/Bsides_Charleston_22/Incident Response in containerized and ephemeral environments.pdf

diff --git a/Bsides_Charleston_22/Lab Setup.md b/archived/Bsides_Charleston_22/Lab Setup.md

similarity index 100%

rename from Bsides_Charleston_22/Lab Setup.md

rename to archived/Bsides_Charleston_22/Lab Setup.md

diff --git a/Bsides_Charleston_22/cheatsheet.md b/archived/Bsides_Charleston_22/cheatsheet.md

similarity index 100%

rename from Bsides_Charleston_22/cheatsheet.md

rename to archived/Bsides_Charleston_22/cheatsheet.md

diff --git a/Bsides_Charleston_22/kind-lab-config.yaml b/archived/Bsides_Charleston_22/kind-lab-config.yaml

similarity index 100%

rename from Bsides_Charleston_22/kind-lab-config.yaml

rename to archived/Bsides_Charleston_22/kind-lab-config.yaml

diff --git a/Bsides_Charleston_22/labs_walk_thru.md b/archived/Bsides_Charleston_22/labs_walk_thru.md

similarity index 100%

rename from Bsides_Charleston_22/labs_walk_thru.md

rename to archived/Bsides_Charleston_22/labs_walk_thru.md

diff --git a/Bsides_Charleston_22/readme.md b/archived/Bsides_Charleston_22/readme.md

similarity index 100%

rename from Bsides_Charleston_22/readme.md

rename to archived/Bsides_Charleston_22/readme.md

diff --git a/CactusCon_24/CactusCon'24 Malicious Containers Workshop.pdf b/archived/CactusCon_24/CactusCon'24 Malicious Containers Workshop.pdf

similarity index 100%

rename from CactusCon_24/CactusCon'24 Malicious Containers Workshop.pdf

rename to archived/CactusCon_24/CactusCon'24 Malicious Containers Workshop.pdf

diff --git a/CactusCon_24/Lab Setup.md b/archived/CactusCon_24/Lab Setup.md

similarity index 100%

rename from CactusCon_24/Lab Setup.md

rename to archived/CactusCon_24/Lab Setup.md

diff --git a/CactusCon_24/README.md b/archived/CactusCon_24/README.md

similarity index 100%

rename from CactusCon_24/README.md

rename to archived/CactusCon_24/README.md

diff --git a/CactusCon_24/cheatsheet.md b/archived/CactusCon_24/cheatsheet.md

similarity index 100%

rename from CactusCon_24/cheatsheet.md

rename to archived/CactusCon_24/cheatsheet.md

diff --git a/CactusCon_24/grafana/tracee-dashboard.json b/archived/CactusCon_24/grafana/tracee-dashboard.json

similarity index 100%

rename from CactusCon_24/grafana/tracee-dashboard.json

rename to archived/CactusCon_24/grafana/tracee-dashboard.json

diff --git a/CactusCon_24/helm-config/grafana-config.yaml b/archived/CactusCon_24/helm-config/grafana-config.yaml

similarity index 100%

rename from CactusCon_24/helm-config/grafana-config.yaml

rename to archived/CactusCon_24/helm-config/grafana-config.yaml

diff --git a/CactusCon_24/helm-config/promtail-config.yaml b/archived/CactusCon_24/helm-config/promtail-config.yaml

similarity index 100%

rename from CactusCon_24/helm-config/promtail-config.yaml

rename to archived/CactusCon_24/helm-config/promtail-config.yaml

diff --git a/CactusCon_24/image.png b/archived/CactusCon_24/image.png

similarity index 100%

rename from CactusCon_24/image.png

rename to archived/CactusCon_24/image.png

diff --git a/CactusCon_24/k8s-ansible-setup.yml b/archived/CactusCon_24/k8s-ansible-setup.yml

similarity index 100%

rename from CactusCon_24/k8s-ansible-setup.yml

rename to archived/CactusCon_24/k8s-ansible-setup.yml

diff --git a/CactusCon_24/k8s-manifests/attacker-pod.yaml b/archived/CactusCon_24/k8s-manifests/attacker-pod.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/attacker-pod.yaml

rename to archived/CactusCon_24/k8s-manifests/attacker-pod.yaml

diff --git a/CactusCon_24/k8s-manifests/clusterrolebindings.yaml b/archived/CactusCon_24/k8s-manifests/clusterrolebindings.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/clusterrolebindings.yaml

rename to archived/CactusCon_24/k8s-manifests/clusterrolebindings.yaml

diff --git a/CactusCon_24/k8s-manifests/clusterroles.yaml b/archived/CactusCon_24/k8s-manifests/clusterroles.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/clusterroles.yaml

rename to archived/CactusCon_24/k8s-manifests/clusterroles.yaml

diff --git a/CactusCon_24/k8s-manifests/configmaps.yaml b/archived/CactusCon_24/k8s-manifests/configmaps.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/configmaps.yaml

rename to archived/CactusCon_24/k8s-manifests/configmaps.yaml

diff --git a/CactusCon_24/k8s-manifests/daemonsets.yaml b/archived/CactusCon_24/k8s-manifests/daemonsets.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/daemonsets.yaml

rename to archived/CactusCon_24/k8s-manifests/daemonsets.yaml

diff --git a/CactusCon_24/k8s-manifests/deployments.yaml b/archived/CactusCon_24/k8s-manifests/deployments.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/deployments.yaml

rename to archived/CactusCon_24/k8s-manifests/deployments.yaml

diff --git a/CactusCon_24/k8s-manifests/evilpod.yaml b/archived/CactusCon_24/k8s-manifests/evilpod.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/evilpod.yaml

rename to archived/CactusCon_24/k8s-manifests/evilpod.yaml

diff --git a/CactusCon_24/k8s-manifests/ingress.yaml b/archived/CactusCon_24/k8s-manifests/ingress.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/ingress.yaml

rename to archived/CactusCon_24/k8s-manifests/ingress.yaml

diff --git a/CactusCon_24/k8s-manifests/namespaces.yaml b/archived/CactusCon_24/k8s-manifests/namespaces.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/namespaces.yaml

rename to archived/CactusCon_24/k8s-manifests/namespaces.yaml

diff --git a/CactusCon_24/k8s-manifests/nothingallowedpod.yaml b/archived/CactusCon_24/k8s-manifests/nothingallowedpod.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/nothingallowedpod.yaml

rename to archived/CactusCon_24/k8s-manifests/nothingallowedpod.yaml

diff --git a/CactusCon_24/k8s-manifests/pods.yaml b/archived/CactusCon_24/k8s-manifests/pods.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/pods.yaml

rename to archived/CactusCon_24/k8s-manifests/pods.yaml

diff --git a/CactusCon_24/k8s-manifests/rolebindings.yaml b/archived/CactusCon_24/k8s-manifests/rolebindings.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/rolebindings.yaml

rename to archived/CactusCon_24/k8s-manifests/rolebindings.yaml

diff --git a/CactusCon_24/k8s-manifests/roles.yaml b/archived/CactusCon_24/k8s-manifests/roles.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/roles.yaml

rename to archived/CactusCon_24/k8s-manifests/roles.yaml

diff --git a/CactusCon_24/k8s-manifests/secrets.yaml b/archived/CactusCon_24/k8s-manifests/secrets.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/secrets.yaml

rename to archived/CactusCon_24/k8s-manifests/secrets.yaml

diff --git a/CactusCon_24/k8s-manifests/serviceaccounts.yaml b/archived/CactusCon_24/k8s-manifests/serviceaccounts.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/serviceaccounts.yaml

rename to archived/CactusCon_24/k8s-manifests/serviceaccounts.yaml

diff --git a/CactusCon_24/k8s-manifests/services.yaml b/archived/CactusCon_24/k8s-manifests/services.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/services.yaml

rename to archived/CactusCon_24/k8s-manifests/services.yaml

diff --git a/CactusCon_24/kind-lab-config.yaml b/archived/CactusCon_24/kind-lab-config.yaml

similarity index 100%

rename from CactusCon_24/kind-lab-config.yaml

rename to archived/CactusCon_24/kind-lab-config.yaml

diff --git a/CactusCon_24/lab-ansible-setup.yml b/archived/CactusCon_24/lab-ansible-setup.yml

similarity index 100%

rename from CactusCon_24/lab-ansible-setup.yml

rename to archived/CactusCon_24/lab-ansible-setup.yml

diff --git a/CactusCon_24/labs_walk_thru.md b/archived/CactusCon_24/labs_walk_thru.md

similarity index 100%

rename from CactusCon_24/labs_walk_thru.md

rename to archived/CactusCon_24/labs_walk_thru.md

diff --git a/CactusCon_24/scripts/reverse_shell_handler.py b/archived/CactusCon_24/scripts/reverse_shell_handler.py

similarity index 100%

rename from CactusCon_24/scripts/reverse_shell_handler.py

rename to archived/CactusCon_24/scripts/reverse_shell_handler.py

diff --git a/DC30/Defcon_Workshop_Release_1.0.pptx.pdf b/archived/DC30/Defcon_Workshop_Release_1.0.pptx.pdf

similarity index 100%

rename from DC30/Defcon_Workshop_Release_1.0.pptx.pdf

rename to archived/DC30/Defcon_Workshop_Release_1.0.pptx.pdf

diff --git a/DC30/Defcon_Workshop_Release_1.1.with notes.pdf b/archived/DC30/Defcon_Workshop_Release_1.1.with notes.pdf

similarity index 100%

rename from DC30/Defcon_Workshop_Release_1.1.with notes.pdf

rename to archived/DC30/Defcon_Workshop_Release_1.1.with notes.pdf

diff --git a/DC30/Lab Setup.md b/archived/DC30/Lab Setup.md

similarity index 100%

rename from DC30/Lab Setup.md

rename to archived/DC30/Lab Setup.md

diff --git a/DC30/README.md b/archived/DC30/README.md

similarity index 100%

rename from DC30/README.md

rename to archived/DC30/README.md

diff --git a/DC30/cheatsheet.md b/archived/DC30/cheatsheet.md

similarity index 100%

rename from DC30/cheatsheet.md

rename to archived/DC30/cheatsheet.md

diff --git a/DC30/kind-lab-config.yaml b/archived/DC30/kind-lab-config.yaml

similarity index 100%

rename from DC30/kind-lab-config.yaml

rename to archived/DC30/kind-lab-config.yaml

diff --git a/DC30/labs_walk_thru.md b/archived/DC30/labs_walk_thru.md

similarity index 100%

rename from DC30/labs_walk_thru.md

rename to archived/DC30/labs_walk_thru.md

diff --git a/DC31/DC31 Malicious Containers Workshop1.1.pdf b/archived/DC31/DC31 Malicious Containers Workshop1.1.pdf

similarity index 100%

rename from DC31/DC31 Malicious Containers Workshop1.1.pdf

rename to archived/DC31/DC31 Malicious Containers Workshop1.1.pdf

diff --git a/DC31/Lab Setup.md b/archived/DC31/Lab Setup.md

similarity index 100%

rename from DC31/Lab Setup.md

rename to archived/DC31/Lab Setup.md

diff --git a/DC31/README.md b/archived/DC31/README.md

similarity index 100%

rename from DC31/README.md

rename to archived/DC31/README.md

diff --git a/DC31/cheatsheet.md b/archived/DC31/cheatsheet.md

similarity index 100%

rename from DC31/cheatsheet.md

rename to archived/DC31/cheatsheet.md

diff --git a/DC31/grafana/tracee-dashboard.json b/archived/DC31/grafana/tracee-dashboard.json

similarity index 100%

rename from DC31/grafana/tracee-dashboard.json

rename to archived/DC31/grafana/tracee-dashboard.json

diff --git a/DC31/helm-config/grafana-config.yaml b/archived/DC31/helm-config/grafana-config.yaml

similarity index 100%

rename from DC31/helm-config/grafana-config.yaml

rename to archived/DC31/helm-config/grafana-config.yaml

diff --git a/DC31/helm-config/promtail-config.yaml b/archived/DC31/helm-config/promtail-config.yaml

similarity index 100%

rename from DC31/helm-config/promtail-config.yaml

rename to archived/DC31/helm-config/promtail-config.yaml

diff --git a/DC31/k8s-ansible-setup.yml b/archived/DC31/k8s-ansible-setup.yml

similarity index 100%

rename from DC31/k8s-ansible-setup.yml

rename to archived/DC31/k8s-ansible-setup.yml

diff --git a/DC31/k8s-manifests/clusterrolebindings.yaml b/archived/DC31/k8s-manifests/clusterrolebindings.yaml

similarity index 100%

rename from DC31/k8s-manifests/clusterrolebindings.yaml

rename to archived/DC31/k8s-manifests/clusterrolebindings.yaml

diff --git a/DC31/k8s-manifests/clusterroles.yaml b/archived/DC31/k8s-manifests/clusterroles.yaml

similarity index 100%

rename from DC31/k8s-manifests/clusterroles.yaml

rename to archived/DC31/k8s-manifests/clusterroles.yaml

diff --git a/DC31/k8s-manifests/configmaps.yaml b/archived/DC31/k8s-manifests/configmaps.yaml

similarity index 100%

rename from DC31/k8s-manifests/configmaps.yaml

rename to archived/DC31/k8s-manifests/configmaps.yaml

diff --git a/DC31/k8s-manifests/daemonsets.yaml b/archived/DC31/k8s-manifests/daemonsets.yaml

similarity index 100%

rename from DC31/k8s-manifests/daemonsets.yaml

rename to archived/DC31/k8s-manifests/daemonsets.yaml

diff --git a/DC31/k8s-manifests/deployments.yaml b/archived/DC31/k8s-manifests/deployments.yaml

similarity index 100%

rename from DC31/k8s-manifests/deployments.yaml

rename to archived/DC31/k8s-manifests/deployments.yaml

diff --git a/DC31/k8s-manifests/evilpod.yaml b/archived/DC31/k8s-manifests/evilpod.yaml

similarity index 100%

rename from DC31/k8s-manifests/evilpod.yaml

rename to archived/DC31/k8s-manifests/evilpod.yaml

diff --git a/DC31/k8s-manifests/ingress.yaml b/archived/DC31/k8s-manifests/ingress.yaml

similarity index 100%

rename from DC31/k8s-manifests/ingress.yaml

rename to archived/DC31/k8s-manifests/ingress.yaml

diff --git a/DC31/k8s-manifests/namespaces.yaml b/archived/DC31/k8s-manifests/namespaces.yaml

similarity index 100%

rename from DC31/k8s-manifests/namespaces.yaml

rename to archived/DC31/k8s-manifests/namespaces.yaml

diff --git a/DC31/k8s-manifests/nothingallowedpod.yaml b/archived/DC31/k8s-manifests/nothingallowedpod.yaml

similarity index 100%

rename from DC31/k8s-manifests/nothingallowedpod.yaml

rename to archived/DC31/k8s-manifests/nothingallowedpod.yaml

diff --git a/DC31/k8s-manifests/pods.yaml b/archived/DC31/k8s-manifests/pods.yaml

similarity index 100%

rename from DC31/k8s-manifests/pods.yaml

rename to archived/DC31/k8s-manifests/pods.yaml

diff --git a/DC31/k8s-manifests/rolebindings.yaml b/archived/DC31/k8s-manifests/rolebindings.yaml

similarity index 100%

rename from DC31/k8s-manifests/rolebindings.yaml

rename to archived/DC31/k8s-manifests/rolebindings.yaml

diff --git a/DC31/k8s-manifests/roles.yaml b/archived/DC31/k8s-manifests/roles.yaml

similarity index 100%

rename from DC31/k8s-manifests/roles.yaml

rename to archived/DC31/k8s-manifests/roles.yaml

diff --git a/DC31/k8s-manifests/secrets.yaml b/archived/DC31/k8s-manifests/secrets.yaml

similarity index 100%

rename from DC31/k8s-manifests/secrets.yaml

rename to archived/DC31/k8s-manifests/secrets.yaml

diff --git a/DC31/k8s-manifests/serviceaccounts.yaml b/archived/DC31/k8s-manifests/serviceaccounts.yaml

similarity index 100%

rename from DC31/k8s-manifests/serviceaccounts.yaml

rename to archived/DC31/k8s-manifests/serviceaccounts.yaml

diff --git a/DC31/k8s-manifests/services.yaml b/archived/DC31/k8s-manifests/services.yaml

similarity index 100%

rename from DC31/k8s-manifests/services.yaml

rename to archived/DC31/k8s-manifests/services.yaml

diff --git a/DC31/kind-lab-config.yaml b/archived/DC31/kind-lab-config.yaml

similarity index 100%

rename from DC31/kind-lab-config.yaml

rename to archived/DC31/kind-lab-config.yaml

diff --git a/DC31/lab-ansible-setup.yml b/archived/DC31/lab-ansible-setup.yml

similarity index 100%

rename from DC31/lab-ansible-setup.yml

rename to archived/DC31/lab-ansible-setup.yml

diff --git a/DC31/labs_walk_thru.md b/archived/DC31/labs_walk_thru.md

similarity index 100%

rename from DC31/labs_walk_thru.md

rename to archived/DC31/labs_walk_thru.md

diff --git a/ISSA_Triad_23/ISSA_Workshop_Pre_Release_1.3.pptx.pdf b/archived/ISSA_Triad_23/ISSA_Workshop_Pre_Release_1.3.pptx.pdf

similarity index 100%

rename from ISSA_Triad_23/ISSA_Workshop_Pre_Release_1.3.pptx.pdf

rename to archived/ISSA_Triad_23/ISSA_Workshop_Pre_Release_1.3.pptx.pdf

diff --git a/ISSA_Triad_23/Lab Setup.md b/archived/ISSA_Triad_23/Lab Setup.md

similarity index 100%

rename from ISSA_Triad_23/Lab Setup.md

rename to archived/ISSA_Triad_23/Lab Setup.md

diff --git a/ISSA_Triad_23/cheatsheet.md b/archived/ISSA_Triad_23/cheatsheet.md

similarity index 100%

rename from ISSA_Triad_23/cheatsheet.md

rename to archived/ISSA_Triad_23/cheatsheet.md

diff --git a/ISSA_Triad_23/kind-lab-config.yaml b/archived/ISSA_Triad_23/kind-lab-config.yaml

similarity index 100%

rename from ISSA_Triad_23/kind-lab-config.yaml

rename to archived/ISSA_Triad_23/kind-lab-config.yaml

diff --git a/ISSA_Triad_23/labs_walk_thru.md b/archived/ISSA_Triad_23/labs_walk_thru.md

similarity index 100%

rename from ISSA_Triad_23/labs_walk_thru.md

rename to archived/ISSA_Triad_23/labs_walk_thru.md

diff --git a/ISSA_Triad_23/readme.me b/archived/ISSA_Triad_23/readme.me

similarity index 100%

rename from ISSA_Triad_23/readme.me

rename to archived/ISSA_Triad_23/readme.me

diff --git a/current/CactusCon'24 Malicious Containers Workshop.pdf b/current/CactusCon'24 Malicious Containers Workshop.pdf

new file mode 100644

index 0000000..0f92898

Binary files /dev/null and b/current/CactusCon'24 Malicious Containers Workshop.pdf differ

diff --git a/current/README.md b/current/README.md

new file mode 100644

index 0000000..9927d64

--- /dev/null

+++ b/current/README.md

@@ -0,0 +1,50 @@

+# Malicious Kubernetes Workshop

+

+This directory contains the current version of the Malicious Kubernetes workshop materials. The workshop is an introduction to Kubernetes and container security — covering cluster deployment, offensive container techniques, privilege escalation, supply chain security, and runtime detection with modern eBPF-based tools.

+

+

+

+## Quick Start

+

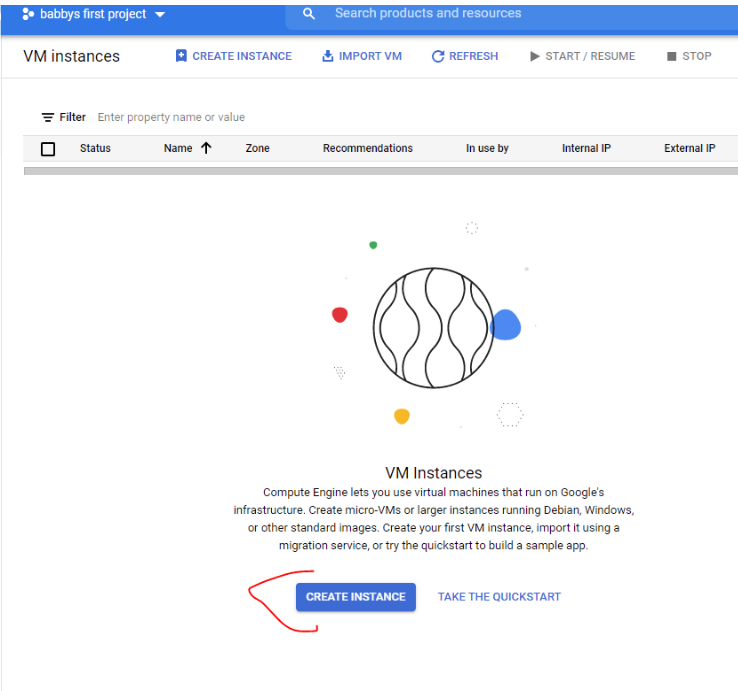

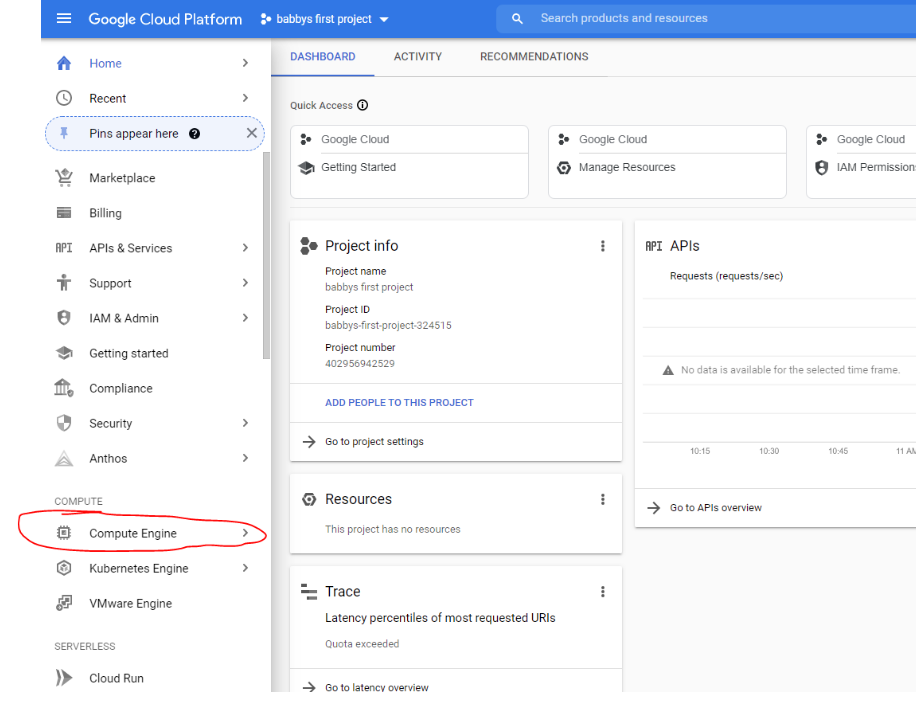

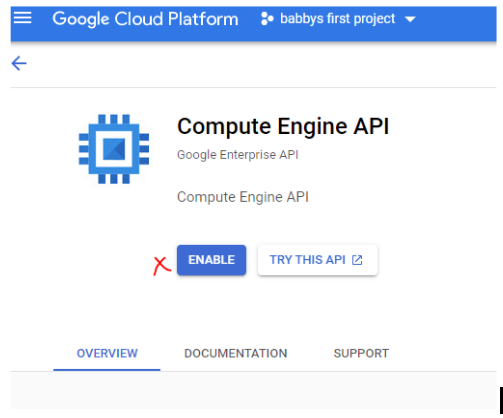

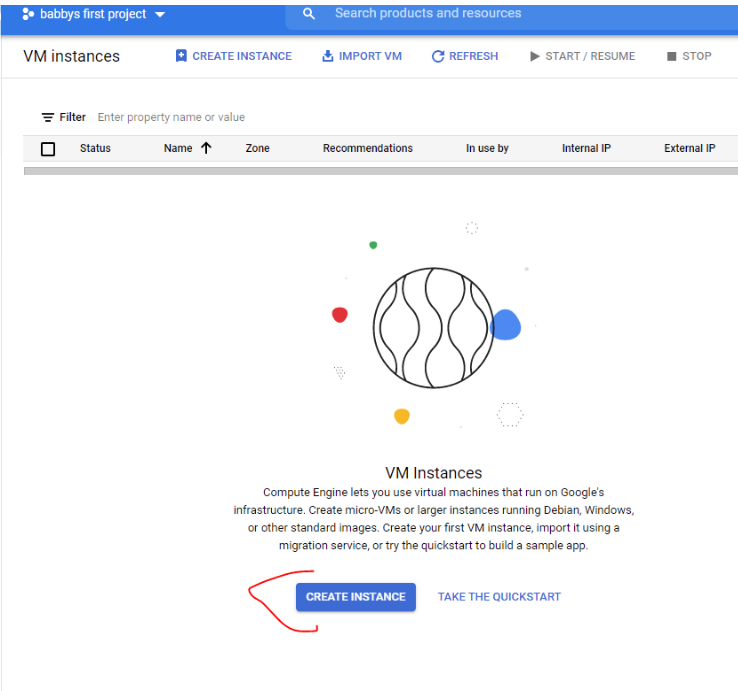

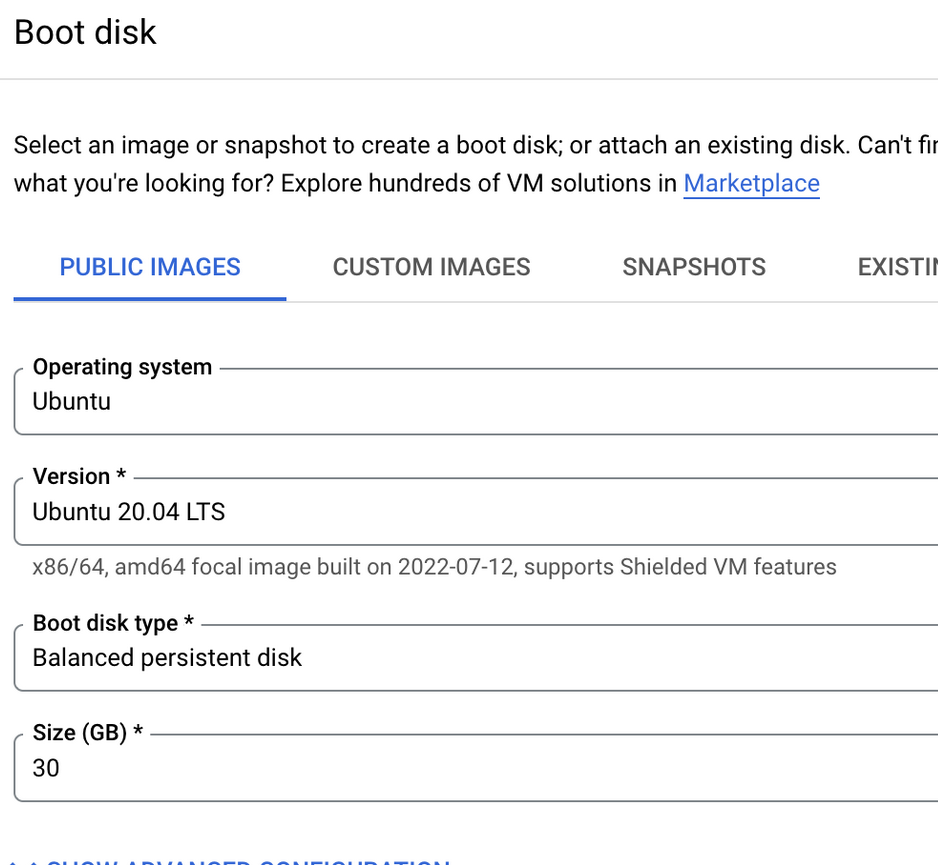

+1. **[Lab Setup](lab-setup.md)** — Environment setup (GCP VM, tooling, kind cluster)

+2. **[Lab Walk-Through](labs_walk_thru.md)** — Step-by-step lab instructions for all modules

+3. **[Cheat Sheet](cheatsheet.md)** — Troubleshooting and quick reference

+

+## What's Covered

+

+| Module | Topic |

+|--------|-------|

+| 1 | Docker fundamentals |

+| 2 | Exploring container images & reverse engineering |

+| 3 | Offensive Docker techniques (exfil, socket hijacking, persistence) |

+| 4 | Container incident response CTF |

+| 5 | Kubernetes 101 |

+| 6 | Kubernetes security — RBAC abuse, priv esc, golden tickets, evil pods |

+| 7 | Supply chain security — cosign, syft, grype, image provenance |

+| 8 | Modern runtime security — Tracee, Falco, Tetragon |

+| 9 | Cloud-native & managed K8s attacks — IMDS, workload identity, network policy bypass |

+

+## Tools Used

+

+- **Infrastructure**: kind, kubectl (v1.31), Helm v3, Ansible

+- **Observability**: Prometheus, Grafana, Loki, Promtail

+- **Runtime Security**: Tracee, Falco (+Falcosidekick), Tetragon

+- **Supply Chain**: cosign, crane, syft, grype

+- **Offensive**: ngrok, openssl, nmap, socat

+

+## Presenters

+

+### Instructor: David Mitchell

+

+> [@digish0](https://twitter.com/digish0) | https://digital-shokunin.net

+### Instructor: Adrian Wood

+> [@whitehacksec](https://twitter.com/WHITEHACKSEC) | https://5stars217.github.io

+## Our lockpick/hacker(space) group

+[](https://github.com/lockFALE/)

+

+## License

+

+See [LICENSE](LICENSE).


similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/attacker-pod.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/attacker-pod.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/clusterrolebindings.yaml b/archived/BSides_Charleston_23/k8s-manifests/clusterrolebindings.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/clusterrolebindings.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/clusterrolebindings.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/clusterroles.yaml b/archived/BSides_Charleston_23/k8s-manifests/clusterroles.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/clusterroles.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/clusterroles.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/configmaps.yaml b/archived/BSides_Charleston_23/k8s-manifests/configmaps.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/configmaps.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/configmaps.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/daemonsets.yaml b/archived/BSides_Charleston_23/k8s-manifests/daemonsets.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/daemonsets.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/daemonsets.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/deployments.yaml b/archived/BSides_Charleston_23/k8s-manifests/deployments.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/deployments.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/deployments.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/evilpod.yaml b/archived/BSides_Charleston_23/k8s-manifests/evilpod.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/evilpod.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/evilpod.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/ingress.yaml b/archived/BSides_Charleston_23/k8s-manifests/ingress.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/ingress.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/ingress.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/namespaces.yaml b/archived/BSides_Charleston_23/k8s-manifests/namespaces.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/namespaces.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/namespaces.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/nothingallowedpod.yaml b/archived/BSides_Charleston_23/k8s-manifests/nothingallowedpod.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/nothingallowedpod.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/nothingallowedpod.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/pods.yaml b/archived/BSides_Charleston_23/k8s-manifests/pods.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/pods.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/pods.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/rolebindings.yaml b/archived/BSides_Charleston_23/k8s-manifests/rolebindings.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/rolebindings.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/rolebindings.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/roles.yaml b/archived/BSides_Charleston_23/k8s-manifests/roles.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/roles.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/roles.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/secrets.yaml b/archived/BSides_Charleston_23/k8s-manifests/secrets.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/secrets.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/secrets.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/serviceaccounts.yaml b/archived/BSides_Charleston_23/k8s-manifests/serviceaccounts.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/serviceaccounts.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/serviceaccounts.yaml

diff --git a/BSides_Charleston_23/k8s-manifests/services.yaml b/archived/BSides_Charleston_23/k8s-manifests/services.yaml

similarity index 100%

rename from BSides_Charleston_23/k8s-manifests/services.yaml

rename to archived/BSides_Charleston_23/k8s-manifests/services.yaml

diff --git a/BSides_Charleston_23/kind-lab-config.yaml b/archived/BSides_Charleston_23/kind-lab-config.yaml

similarity index 100%

rename from BSides_Charleston_23/kind-lab-config.yaml

rename to archived/BSides_Charleston_23/kind-lab-config.yaml

diff --git a/BSides_Charleston_23/lab-ansible-setup.yml b/archived/BSides_Charleston_23/lab-ansible-setup.yml

similarity index 100%

rename from BSides_Charleston_23/lab-ansible-setup.yml

rename to archived/BSides_Charleston_23/lab-ansible-setup.yml

diff --git a/BSides_Charleston_23/labs_walk_thru.md b/archived/BSides_Charleston_23/labs_walk_thru.md

similarity index 100%

rename from BSides_Charleston_23/labs_walk_thru.md

rename to archived/BSides_Charleston_23/labs_walk_thru.md

diff --git a/BSides_Charleston_23/scripts/reverse_shell_handler.py b/archived/BSides_Charleston_23/scripts/reverse_shell_handler.py

similarity index 100%

rename from BSides_Charleston_23/scripts/reverse_shell_handler.py

rename to archived/BSides_Charleston_23/scripts/reverse_shell_handler.py

diff --git a/Bsides_Charleston_22/Bsides_Workshop_Pre_Release_1.3.pptx.pdf b/archived/Bsides_Charleston_22/Bsides_Workshop_Pre_Release_1.3.pptx.pdf

similarity index 100%

rename from Bsides_Charleston_22/Bsides_Workshop_Pre_Release_1.3.pptx.pdf

rename to archived/Bsides_Charleston_22/Bsides_Workshop_Pre_Release_1.3.pptx.pdf

diff --git a/Bsides_Charleston_22/Incident Response in containerized and ephemeral environments.pdf b/archived/Bsides_Charleston_22/Incident Response in containerized and ephemeral environments.pdf

similarity index 100%

rename from Bsides_Charleston_22/Incident Response in containerized and ephemeral environments.pdf

rename to archived/Bsides_Charleston_22/Incident Response in containerized and ephemeral environments.pdf

diff --git a/Bsides_Charleston_22/Lab Setup.md b/archived/Bsides_Charleston_22/Lab Setup.md

similarity index 100%

rename from Bsides_Charleston_22/Lab Setup.md

rename to archived/Bsides_Charleston_22/Lab Setup.md

diff --git a/Bsides_Charleston_22/cheatsheet.md b/archived/Bsides_Charleston_22/cheatsheet.md

similarity index 100%

rename from Bsides_Charleston_22/cheatsheet.md

rename to archived/Bsides_Charleston_22/cheatsheet.md

diff --git a/Bsides_Charleston_22/kind-lab-config.yaml b/archived/Bsides_Charleston_22/kind-lab-config.yaml

similarity index 100%

rename from Bsides_Charleston_22/kind-lab-config.yaml

rename to archived/Bsides_Charleston_22/kind-lab-config.yaml

diff --git a/Bsides_Charleston_22/labs_walk_thru.md b/archived/Bsides_Charleston_22/labs_walk_thru.md

similarity index 100%

rename from Bsides_Charleston_22/labs_walk_thru.md

rename to archived/Bsides_Charleston_22/labs_walk_thru.md

diff --git a/Bsides_Charleston_22/readme.md b/archived/Bsides_Charleston_22/readme.md

similarity index 100%

rename from Bsides_Charleston_22/readme.md

rename to archived/Bsides_Charleston_22/readme.md

diff --git a/CactusCon_24/CactusCon'24 Malicious Containers Workshop.pdf b/archived/CactusCon_24/CactusCon'24 Malicious Containers Workshop.pdf

similarity index 100%

rename from CactusCon_24/CactusCon'24 Malicious Containers Workshop.pdf

rename to archived/CactusCon_24/CactusCon'24 Malicious Containers Workshop.pdf

diff --git a/CactusCon_24/Lab Setup.md b/archived/CactusCon_24/Lab Setup.md

similarity index 100%

rename from CactusCon_24/Lab Setup.md

rename to archived/CactusCon_24/Lab Setup.md

diff --git a/CactusCon_24/README.md b/archived/CactusCon_24/README.md

similarity index 100%

rename from CactusCon_24/README.md

rename to archived/CactusCon_24/README.md

diff --git a/CactusCon_24/cheatsheet.md b/archived/CactusCon_24/cheatsheet.md

similarity index 100%

rename from CactusCon_24/cheatsheet.md

rename to archived/CactusCon_24/cheatsheet.md

diff --git a/CactusCon_24/grafana/tracee-dashboard.json b/archived/CactusCon_24/grafana/tracee-dashboard.json

similarity index 100%

rename from CactusCon_24/grafana/tracee-dashboard.json

rename to archived/CactusCon_24/grafana/tracee-dashboard.json

diff --git a/CactusCon_24/helm-config/grafana-config.yaml b/archived/CactusCon_24/helm-config/grafana-config.yaml

similarity index 100%

rename from CactusCon_24/helm-config/grafana-config.yaml

rename to archived/CactusCon_24/helm-config/grafana-config.yaml

diff --git a/CactusCon_24/helm-config/promtail-config.yaml b/archived/CactusCon_24/helm-config/promtail-config.yaml

similarity index 100%

rename from CactusCon_24/helm-config/promtail-config.yaml

rename to archived/CactusCon_24/helm-config/promtail-config.yaml

diff --git a/CactusCon_24/image.png b/archived/CactusCon_24/image.png

similarity index 100%

rename from CactusCon_24/image.png

rename to archived/CactusCon_24/image.png

diff --git a/CactusCon_24/k8s-ansible-setup.yml b/archived/CactusCon_24/k8s-ansible-setup.yml

similarity index 100%

rename from CactusCon_24/k8s-ansible-setup.yml

rename to archived/CactusCon_24/k8s-ansible-setup.yml

diff --git a/CactusCon_24/k8s-manifests/attacker-pod.yaml b/archived/CactusCon_24/k8s-manifests/attacker-pod.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/attacker-pod.yaml

rename to archived/CactusCon_24/k8s-manifests/attacker-pod.yaml

diff --git a/CactusCon_24/k8s-manifests/clusterrolebindings.yaml b/archived/CactusCon_24/k8s-manifests/clusterrolebindings.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/clusterrolebindings.yaml

rename to archived/CactusCon_24/k8s-manifests/clusterrolebindings.yaml

diff --git a/CactusCon_24/k8s-manifests/clusterroles.yaml b/archived/CactusCon_24/k8s-manifests/clusterroles.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/clusterroles.yaml

rename to archived/CactusCon_24/k8s-manifests/clusterroles.yaml

diff --git a/CactusCon_24/k8s-manifests/configmaps.yaml b/archived/CactusCon_24/k8s-manifests/configmaps.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/configmaps.yaml

rename to archived/CactusCon_24/k8s-manifests/configmaps.yaml

diff --git a/CactusCon_24/k8s-manifests/daemonsets.yaml b/archived/CactusCon_24/k8s-manifests/daemonsets.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/daemonsets.yaml

rename to archived/CactusCon_24/k8s-manifests/daemonsets.yaml

diff --git a/CactusCon_24/k8s-manifests/deployments.yaml b/archived/CactusCon_24/k8s-manifests/deployments.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/deployments.yaml

rename to archived/CactusCon_24/k8s-manifests/deployments.yaml

diff --git a/CactusCon_24/k8s-manifests/evilpod.yaml b/archived/CactusCon_24/k8s-manifests/evilpod.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/evilpod.yaml

rename to archived/CactusCon_24/k8s-manifests/evilpod.yaml

diff --git a/CactusCon_24/k8s-manifests/ingress.yaml b/archived/CactusCon_24/k8s-manifests/ingress.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/ingress.yaml

rename to archived/CactusCon_24/k8s-manifests/ingress.yaml

diff --git a/CactusCon_24/k8s-manifests/namespaces.yaml b/archived/CactusCon_24/k8s-manifests/namespaces.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/namespaces.yaml

rename to archived/CactusCon_24/k8s-manifests/namespaces.yaml

diff --git a/CactusCon_24/k8s-manifests/nothingallowedpod.yaml b/archived/CactusCon_24/k8s-manifests/nothingallowedpod.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/nothingallowedpod.yaml

rename to archived/CactusCon_24/k8s-manifests/nothingallowedpod.yaml

diff --git a/CactusCon_24/k8s-manifests/pods.yaml b/archived/CactusCon_24/k8s-manifests/pods.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/pods.yaml

rename to archived/CactusCon_24/k8s-manifests/pods.yaml

diff --git a/CactusCon_24/k8s-manifests/rolebindings.yaml b/archived/CactusCon_24/k8s-manifests/rolebindings.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/rolebindings.yaml

rename to archived/CactusCon_24/k8s-manifests/rolebindings.yaml

diff --git a/CactusCon_24/k8s-manifests/roles.yaml b/archived/CactusCon_24/k8s-manifests/roles.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/roles.yaml

rename to archived/CactusCon_24/k8s-manifests/roles.yaml

diff --git a/CactusCon_24/k8s-manifests/secrets.yaml b/archived/CactusCon_24/k8s-manifests/secrets.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/secrets.yaml

rename to archived/CactusCon_24/k8s-manifests/secrets.yaml

diff --git a/CactusCon_24/k8s-manifests/serviceaccounts.yaml b/archived/CactusCon_24/k8s-manifests/serviceaccounts.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/serviceaccounts.yaml

rename to archived/CactusCon_24/k8s-manifests/serviceaccounts.yaml

diff --git a/CactusCon_24/k8s-manifests/services.yaml b/archived/CactusCon_24/k8s-manifests/services.yaml

similarity index 100%

rename from CactusCon_24/k8s-manifests/services.yaml

rename to archived/CactusCon_24/k8s-manifests/services.yaml

diff --git a/CactusCon_24/kind-lab-config.yaml b/archived/CactusCon_24/kind-lab-config.yaml

similarity index 100%

rename from CactusCon_24/kind-lab-config.yaml

rename to archived/CactusCon_24/kind-lab-config.yaml

diff --git a/CactusCon_24/lab-ansible-setup.yml b/archived/CactusCon_24/lab-ansible-setup.yml

similarity index 100%

rename from CactusCon_24/lab-ansible-setup.yml

rename to archived/CactusCon_24/lab-ansible-setup.yml

diff --git a/CactusCon_24/labs_walk_thru.md b/archived/CactusCon_24/labs_walk_thru.md

similarity index 100%

rename from CactusCon_24/labs_walk_thru.md

rename to archived/CactusCon_24/labs_walk_thru.md

diff --git a/CactusCon_24/scripts/reverse_shell_handler.py b/archived/CactusCon_24/scripts/reverse_shell_handler.py

similarity index 100%

rename from CactusCon_24/scripts/reverse_shell_handler.py

rename to archived/CactusCon_24/scripts/reverse_shell_handler.py

diff --git a/DC30/Defcon_Workshop_Release_1.0.pptx.pdf b/archived/DC30/Defcon_Workshop_Release_1.0.pptx.pdf

similarity index 100%

rename from DC30/Defcon_Workshop_Release_1.0.pptx.pdf

rename to archived/DC30/Defcon_Workshop_Release_1.0.pptx.pdf

diff --git a/DC30/Defcon_Workshop_Release_1.1.with notes.pdf b/archived/DC30/Defcon_Workshop_Release_1.1.with notes.pdf

similarity index 100%

rename from DC30/Defcon_Workshop_Release_1.1.with notes.pdf

rename to archived/DC30/Defcon_Workshop_Release_1.1.with notes.pdf

diff --git a/DC30/Lab Setup.md b/archived/DC30/Lab Setup.md

similarity index 100%

rename from DC30/Lab Setup.md

rename to archived/DC30/Lab Setup.md

diff --git a/DC30/README.md b/archived/DC30/README.md

similarity index 100%

rename from DC30/README.md

rename to archived/DC30/README.md

diff --git a/DC30/cheatsheet.md b/archived/DC30/cheatsheet.md

similarity index 100%

rename from DC30/cheatsheet.md

rename to archived/DC30/cheatsheet.md

diff --git a/DC30/kind-lab-config.yaml b/archived/DC30/kind-lab-config.yaml

similarity index 100%

rename from DC30/kind-lab-config.yaml

rename to archived/DC30/kind-lab-config.yaml

diff --git a/DC30/labs_walk_thru.md b/archived/DC30/labs_walk_thru.md

similarity index 100%

rename from DC30/labs_walk_thru.md

rename to archived/DC30/labs_walk_thru.md

diff --git a/DC31/DC31 Malicious Containers Workshop1.1.pdf b/archived/DC31/DC31 Malicious Containers Workshop1.1.pdf

similarity index 100%

rename from DC31/DC31 Malicious Containers Workshop1.1.pdf

rename to archived/DC31/DC31 Malicious Containers Workshop1.1.pdf

diff --git a/DC31/Lab Setup.md b/archived/DC31/Lab Setup.md

similarity index 100%

rename from DC31/Lab Setup.md

rename to archived/DC31/Lab Setup.md

diff --git a/DC31/README.md b/archived/DC31/README.md

similarity index 100%

rename from DC31/README.md

rename to archived/DC31/README.md

diff --git a/DC31/cheatsheet.md b/archived/DC31/cheatsheet.md

similarity index 100%

rename from DC31/cheatsheet.md

rename to archived/DC31/cheatsheet.md

diff --git a/DC31/grafana/tracee-dashboard.json b/archived/DC31/grafana/tracee-dashboard.json

similarity index 100%

rename from DC31/grafana/tracee-dashboard.json

rename to archived/DC31/grafana/tracee-dashboard.json

diff --git a/DC31/helm-config/grafana-config.yaml b/archived/DC31/helm-config/grafana-config.yaml

similarity index 100%

rename from DC31/helm-config/grafana-config.yaml

rename to archived/DC31/helm-config/grafana-config.yaml

diff --git a/DC31/helm-config/promtail-config.yaml b/archived/DC31/helm-config/promtail-config.yaml

similarity index 100%

rename from DC31/helm-config/promtail-config.yaml

rename to archived/DC31/helm-config/promtail-config.yaml

diff --git a/DC31/k8s-ansible-setup.yml b/archived/DC31/k8s-ansible-setup.yml

similarity index 100%

rename from DC31/k8s-ansible-setup.yml

rename to archived/DC31/k8s-ansible-setup.yml

diff --git a/DC31/k8s-manifests/clusterrolebindings.yaml b/archived/DC31/k8s-manifests/clusterrolebindings.yaml

similarity index 100%

rename from DC31/k8s-manifests/clusterrolebindings.yaml

rename to archived/DC31/k8s-manifests/clusterrolebindings.yaml

diff --git a/DC31/k8s-manifests/clusterroles.yaml b/archived/DC31/k8s-manifests/clusterroles.yaml

similarity index 100%

rename from DC31/k8s-manifests/clusterroles.yaml

rename to archived/DC31/k8s-manifests/clusterroles.yaml

diff --git a/DC31/k8s-manifests/configmaps.yaml b/archived/DC31/k8s-manifests/configmaps.yaml

similarity index 100%

rename from DC31/k8s-manifests/configmaps.yaml

rename to archived/DC31/k8s-manifests/configmaps.yaml

diff --git a/DC31/k8s-manifests/daemonsets.yaml b/archived/DC31/k8s-manifests/daemonsets.yaml

similarity index 100%

rename from DC31/k8s-manifests/daemonsets.yaml

rename to archived/DC31/k8s-manifests/daemonsets.yaml

diff --git a/DC31/k8s-manifests/deployments.yaml b/archived/DC31/k8s-manifests/deployments.yaml

similarity index 100%

rename from DC31/k8s-manifests/deployments.yaml

rename to archived/DC31/k8s-manifests/deployments.yaml

diff --git a/DC31/k8s-manifests/evilpod.yaml b/archived/DC31/k8s-manifests/evilpod.yaml

similarity index 100%

rename from DC31/k8s-manifests/evilpod.yaml

rename to archived/DC31/k8s-manifests/evilpod.yaml

diff --git a/DC31/k8s-manifests/ingress.yaml b/archived/DC31/k8s-manifests/ingress.yaml

similarity index 100%

rename from DC31/k8s-manifests/ingress.yaml

rename to archived/DC31/k8s-manifests/ingress.yaml

diff --git a/DC31/k8s-manifests/namespaces.yaml b/archived/DC31/k8s-manifests/namespaces.yaml

similarity index 100%

rename from DC31/k8s-manifests/namespaces.yaml

rename to archived/DC31/k8s-manifests/namespaces.yaml

diff --git a/DC31/k8s-manifests/nothingallowedpod.yaml b/archived/DC31/k8s-manifests/nothingallowedpod.yaml

similarity index 100%

rename from DC31/k8s-manifests/nothingallowedpod.yaml

rename to archived/DC31/k8s-manifests/nothingallowedpod.yaml

diff --git a/DC31/k8s-manifests/pods.yaml b/archived/DC31/k8s-manifests/pods.yaml

similarity index 100%

rename from DC31/k8s-manifests/pods.yaml

rename to archived/DC31/k8s-manifests/pods.yaml

diff --git a/DC31/k8s-manifests/rolebindings.yaml b/archived/DC31/k8s-manifests/rolebindings.yaml

similarity index 100%

rename from DC31/k8s-manifests/rolebindings.yaml

rename to archived/DC31/k8s-manifests/rolebindings.yaml

diff --git a/DC31/k8s-manifests/roles.yaml b/archived/DC31/k8s-manifests/roles.yaml

similarity index 100%

rename from DC31/k8s-manifests/roles.yaml

rename to archived/DC31/k8s-manifests/roles.yaml

diff --git a/DC31/k8s-manifests/secrets.yaml b/archived/DC31/k8s-manifests/secrets.yaml

similarity index 100%

rename from DC31/k8s-manifests/secrets.yaml

rename to archived/DC31/k8s-manifests/secrets.yaml

diff --git a/DC31/k8s-manifests/serviceaccounts.yaml b/archived/DC31/k8s-manifests/serviceaccounts.yaml

similarity index 100%

rename from DC31/k8s-manifests/serviceaccounts.yaml

rename to archived/DC31/k8s-manifests/serviceaccounts.yaml

diff --git a/DC31/k8s-manifests/services.yaml b/archived/DC31/k8s-manifests/services.yaml

similarity index 100%

rename from DC31/k8s-manifests/services.yaml

rename to archived/DC31/k8s-manifests/services.yaml

diff --git a/DC31/kind-lab-config.yaml b/archived/DC31/kind-lab-config.yaml

similarity index 100%

rename from DC31/kind-lab-config.yaml

rename to archived/DC31/kind-lab-config.yaml

diff --git a/DC31/lab-ansible-setup.yml b/archived/DC31/lab-ansible-setup.yml

similarity index 100%

rename from DC31/lab-ansible-setup.yml

rename to archived/DC31/lab-ansible-setup.yml

diff --git a/DC31/labs_walk_thru.md b/archived/DC31/labs_walk_thru.md

similarity index 100%

rename from DC31/labs_walk_thru.md

rename to archived/DC31/labs_walk_thru.md

diff --git a/ISSA_Triad_23/ISSA_Workshop_Pre_Release_1.3.pptx.pdf b/archived/ISSA_Triad_23/ISSA_Workshop_Pre_Release_1.3.pptx.pdf

similarity index 100%

rename from ISSA_Triad_23/ISSA_Workshop_Pre_Release_1.3.pptx.pdf

rename to archived/ISSA_Triad_23/ISSA_Workshop_Pre_Release_1.3.pptx.pdf

diff --git a/ISSA_Triad_23/Lab Setup.md b/archived/ISSA_Triad_23/Lab Setup.md

similarity index 100%

rename from ISSA_Triad_23/Lab Setup.md

rename to archived/ISSA_Triad_23/Lab Setup.md

diff --git a/ISSA_Triad_23/cheatsheet.md b/archived/ISSA_Triad_23/cheatsheet.md

similarity index 100%

rename from ISSA_Triad_23/cheatsheet.md

rename to archived/ISSA_Triad_23/cheatsheet.md

diff --git a/ISSA_Triad_23/kind-lab-config.yaml b/archived/ISSA_Triad_23/kind-lab-config.yaml

similarity index 100%

rename from ISSA_Triad_23/kind-lab-config.yaml

rename to archived/ISSA_Triad_23/kind-lab-config.yaml

diff --git a/ISSA_Triad_23/labs_walk_thru.md b/archived/ISSA_Triad_23/labs_walk_thru.md

similarity index 100%

rename from ISSA_Triad_23/labs_walk_thru.md

rename to archived/ISSA_Triad_23/labs_walk_thru.md

diff --git a/ISSA_Triad_23/readme.me b/archived/ISSA_Triad_23/readme.me

similarity index 100%

rename from ISSA_Triad_23/readme.me

rename to archived/ISSA_Triad_23/readme.me

diff --git a/current/CactusCon'24 Malicious Containers Workshop.pdf b/current/CactusCon'24 Malicious Containers Workshop.pdf

new file mode 100644

index 0000000..0f92898

Binary files /dev/null and b/current/CactusCon'24 Malicious Containers Workshop.pdf differ

diff --git a/current/README.md b/current/README.md

new file mode 100644

index 0000000..9927d64

--- /dev/null

+++ b/current/README.md

@@ -0,0 +1,50 @@

+# Malicious Kubernetes Workshop

+

+This directory contains the current version of the Malicious Kubernetes workshop materials. The workshop is an introduction to Kubernetes and container security — covering cluster deployment, offensive container techniques, privilege escalation, supply chain security, and runtime detection with modern eBPF-based tools.

+

+

+

+## Quick Start

+

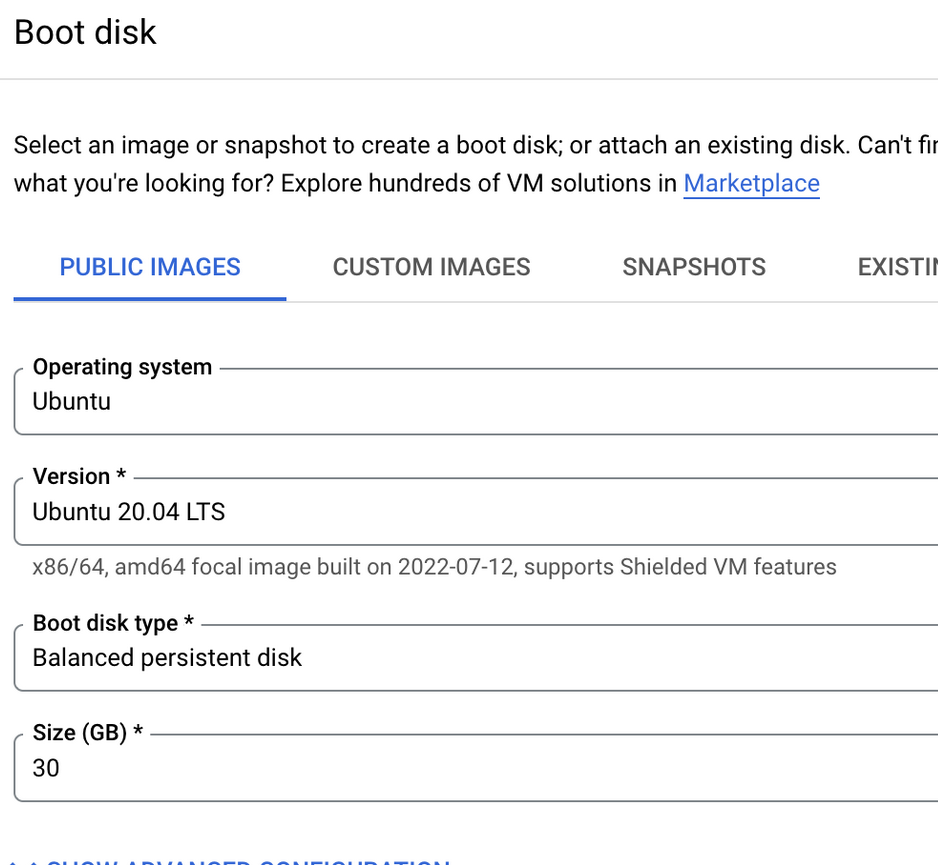

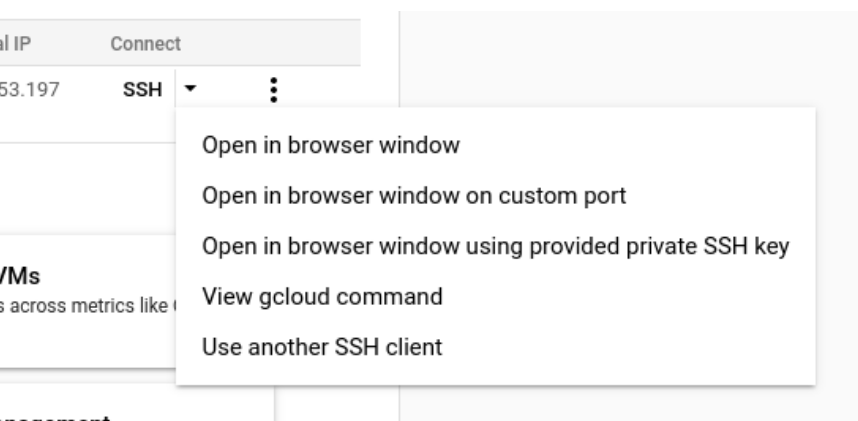

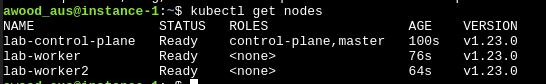

+1. **[Lab Setup](lab-setup.md)** — Environment setup (GCP VM, tooling, kind cluster)

+2. **[Lab Walk-Through](labs_walk_thru.md)** — Step-by-step lab instructions for all modules

+3. **[Cheat Sheet](cheatsheet.md)** — Troubleshooting and quick reference

+

+## What's Covered

+

+| Module | Topic |

+|--------|-------|

+| 1 | Docker fundamentals |

+| 2 | Exploring container images & reverse engineering |

+| 3 | Offensive Docker techniques (exfil, socket hijacking, persistence) |

+| 4 | Container incident response CTF |

+| 5 | Kubernetes 101 |

+| 6 | Kubernetes security — RBAC abuse, priv esc, golden tickets, evil pods |

+| 7 | Supply chain security — cosign, syft, grype, image provenance |

+| 8 | Modern runtime security — Tracee, Falco, Tetragon |

+| 9 | Cloud-native & managed K8s attacks — IMDS, workload identity, network policy bypass |

+

+## Tools Used

+

+- **Infrastructure**: kind, kubectl (v1.31), Helm v3, Ansible

+- **Observability**: Prometheus, Grafana, Loki, Promtail

+- **Runtime Security**: Tracee, Falco (+Falcosidekick), Tetragon

+- **Supply Chain**: cosign, crane, syft, grype

+- **Offensive**: ngrok, openssl, nmap, socat

+

+## Presenters

+

+### Instructor: David Mitchell

+

+> [@digish0](https://twitter.com/digish0)\

+> https://digital-shokunin.net

+

+### Instructor: Adrian Wood

+

+> [@whitehacksec](https://twitter.com/WHITEHACKSEC)\

+> https://5stars217.github.io

+

+## Our lockpick/hacker(space) group

+

+

+


+

diff --git a/current/cheatsheet.md b/current/cheatsheet.md

new file mode 100644

index 0000000..dd5733d

--- /dev/null

+++ b/current/cheatsheet.md

@@ -0,0 +1,213 @@

+# Cheatsheet

+**This file accompanies the lab instructions and collects commands to help those who are new to Linux, Docker, or Kubernetes.**

+

+**If viewing on GitHub, you can navigate using the table of contents button in the top left next to the line count.**

+

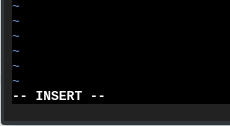

+## Using Vi - useful shortcuts for the lab.

+

+Arrow keys to navigate cursor

+

+`i` to enter insert mode and edit contents.

+While you are in insert mode, Vim typically shows `-- INSERT --` in the bottom left-hand corner.

+

+

+

+

+`[esc]` to exit insert mode once changes are made.

+

+`u` outside insert mode to undo a change.

+

+`dd` to remove a line outside of insert mode.

+

+`:` to bring up the vi command line outside of insert mode.

+

+`:wq` to save and quit.

+

+`:q!` to exit without saving changes.

+

+## Troubleshooting - list of error messages and what to do:

+

+**none of the commands work without sudo**

+

+```

+sudo usermod -aG docker $USER

+```

+

+```

+chmod +x kubectl

+sudo mv ./kubectl /usr/local/bin/kubectl

+```
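The `usermod` change above only takes effect in a new login session, so it can look as if the fix did not work. A minimal sketch for checking whether the current shell already has the `docker` group; the helper name `in_group` is hypothetical and not part of the lab materials:

```shell
#!/bin/sh
# Hypothetical helper: succeeds if the group named in $2 appears in the
# space-separated group list passed in $1.
in_group() {
    case " $1 " in
        *" $2 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# `id -nG` prints the group names of the current session.
if in_group "$(id -nG)" docker; then
    echo "docker group is active in this shell"
else
    echo "docker group not active yet: log out and back in, or run 'newgrp docker'"
fi
```

If the second message appears right after running `usermod`, starting a fresh session is usually enough.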

+

+**I'm stuck in my container and Ctrl+C won't exit**

+

+- Open a new terminal window

+- `docker container ls`

+- Find the stuck container

+- `docker stop $containerID` (the first few characters of the ID are enough, e.g. `docker stop ac29`)

+

+**kubectl commands don't work**

+

+If you run a kubectl command and see this error:

+"The connection to the server localhost:8080 was refused - did you specify the right host or port?"

+

+your kubeconfig is missing or has been overwritten. Regenerate it from the kind cluster:

+

+```

+kind get kubeconfig --name lab > ~/.kube/config

+```

+

+or

+

+```

+kubectl config use-context kind-lab

+```
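Before choosing between the two fixes above, it can help to confirm whether a kubeconfig file exists at all. A minimal sketch, assuming `KUBECONFIG` (if set) holds a single path rather than a colon-separated list; the helper name `kubeconfig_present` is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: succeeds if the file at $1 exists and is non-empty.
kubeconfig_present() {
    [ -s "$1" ]
}

# Assumption: KUBECONFIG, when set, is a single path.
cfg="${KUBECONFIG:-$HOME/.kube/config}"
if kubeconfig_present "$cfg"; then
    echo "kubeconfig found at $cfg; try: kubectl config use-context kind-lab"
else
    echo "kubeconfig missing or empty at $cfg; regenerate it with: kind get kubeconfig --name lab"
fi
```

A present file points toward a wrong context; a missing or empty one points toward regenerating it from kind.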

+

+**HTTP endpoint (Pipedream) isn't receiving requests**

+

+- Make sure you copied the correct endpoint URL from Pipedream (not the dashboard URL)

+- The URL should look like `https://eo*.m.pipedream.net`

+- Verify the URL is set correctly in your Dockerfile's `ENV URL` line
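The URL shape described above can be sanity-checked before rebuilding the image. A rough sketch, assuming the endpoint is held in a shell variable named `URL` (mirroring the Dockerfile's `ENV URL` line); the helper name `is_pipedream_endpoint` is hypothetical, and the glob is only a typo check, not a security control:

```shell
#!/bin/sh
# Hypothetical helper: succeeds if $1 looks like https://eo*.m.pipedream.net,
# optionally followed by a path.
is_pipedream_endpoint() {
    case "$1" in
        https://eo*.m.pipedream.net|https://eo*.m.pipedream.net/*) return 0 ;;
        *) return 1 ;;
    esac
}

# Assumption: URL holds the value you intend to put in the Dockerfile.
if is_pipedream_endpoint "${URL:-}"; then
    echo "URL looks like a Pipedream endpoint"
else
    echo "URL does not match https://eo*.m.pipedream.net; re-copy it from Pipedream"
fi
```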

+

+**The `Esc` key does not exit insert mode in Vim when using the Google Cloud console in a web browser**

+

+Map the Escape key to another key combination, e.g.

+`:imap jj <Esc>`

+format in the basic-auth flag above.

+

+## Supply Chain Tools Quick Reference

+

+**cosign** - Image signing and verification

+```

+# Generate key pair

+cosign generate-key-pair

+

+# Sign an image (requires push access to registry)

+cosign sign --key cosign.key <image>

+

+# Verify an image signature

+cosign verify --key cosign.pub <image>

+```

+

+**crane** - Container registry interactions

+```

+# View image manifest

+crane manifest <image> | jq

+

+# List tags

+crane ls <repository>

+

+# Get image digest (immutable reference)

+crane digest <image>

+

+# Export image filesystem

+crane export <image> output.tar

+```

+

+**syft** - SBOM generation

+```

+# Generate SBOM for an image

+syft <image>

+

+# Output as SPDX JSON

+syft <image> -o spdx-json

+

+# Output as CycloneDX

+syft <image> -o cyclonedx-json

+```

+

+**grype** - Vulnerability scanning

+```

+# Scan an image

+grype <image>

+

+# Scan with SBOM input

+grype sbom:sbom.json

+

+# Fail on critical vulns (useful in CI)

+grype <image> --fail-on critical

+

+# Only show fixable vulns

+grype <image> --only-fixed

+```

+

+## Runtime Security Tools Quick Reference

+

+**Tracee**

+```

+# Check Tracee pods

+kubectl get pods -n tracee-system

+

+# View Tracee events

+kubectl logs -n tracee-system -l app.kubernetes.io/name=tracee --tail=50

+

+# Run standalone Docker Tracee

+docker run --name tracee -d --rm --pid=host --cgroupns=host --privileged \

+ -v /etc/os-release:/etc/os-release-host:ro \

+ -e LIBBPFGO_OSRELEASE_FILE=/etc/os-release-host \

+ aquasec/tracee:latest

+```

+

+**Falco**

+```

+# Check Falco pods

+kubectl get pods -n falco-system

+

+# View Falco alerts

+kubectl logs -n falco-system -l app.kubernetes.io/name=falco --tail=50

+

+# Access Falcosidekick UI

+kubectl port-forward svc/falco-falcosidekick-ui -n falco-system 2802:2802

+```

+

+**Tetragon**

+```

+# Check Tetragon pods

+kubectl get pods -n tetragon

+

+# View Tetragon events

+kubectl logs -n tetragon -l app.kubernetes.io/name=tetragon -c export-stdout --tail=50

+

+# List TracingPolicies

+kubectl get tracingpolicies

+```

+

+## Grafana Loki Queries

+

+**Tracee events:**

+```

+{namespace="tracee-system"} |= `matchedPolicies` != `sshd` | json | line_format "{{.log}}"

+```

+

+**Falco events:**

+```

+{namespace="falco-system"} | json | line_format "{{.log}}"

+```

+

+**Tetragon events:**

+```

+{namespace="tetragon"} | json | line_format "{{.log}}"

+```

+

+## Version Reference

+

+| Component | Version |

+|-----------|---------|

+| Kubernetes | v1.31.4 |

+| kind | v0.27.0 |

+| Helm | v3.16.4 |

+| kubectl | v1.31.4 |

+| cosign | v2.4.1 |

+| crane | v0.20.2 |

+| syft | v1.18.1 |

+| grype | v0.85.0 |

+| Tracee | 0.24.0 |

diff --git a/current/grafana/tracee-dashboard.json b/current/grafana/tracee-dashboard.json

new file mode 100644

index 0000000..47d2e51

--- /dev/null

+++ b/current/grafana/tracee-dashboard.json

@@ -0,0 +1,172 @@

+{

+ "annotations": {

+ "list": [

+ {

+ "builtIn": 1,

+ "datasource": {

+ "type": "grafana",

+ "uid": "-- Grafana --"

+ },

+ "enable": true,

+ "hide": true,

+ "iconColor": "rgba(0, 211, 255, 1)",

+ "name": "Annotations & Alerts",

+ "type": "dashboard"

+ }

+ ]

+ },

+ "editable": true,

+ "fiscalYearStartMonth": 0,

+ "graphTooltip": 0,

+ "id": 29,

+ "links": [],

+ "liveNow": false,

+ "panels": [

+ {

+ "datasource": {

+ "type": "loki",

+ "uid": "a3fda37a-4998-4161-ae3c-4d44fc0cec38"

+ },

+ "gridPos": {

+ "h": 8,

+ "w": 12,

+ "x": 0,

+ "y": 0

+ },

+ "id": 3,

+ "options": {

+ "dedupStrategy": "none",

+ "enableLogDetails": true,

+ "prettifyLogMessage": false,

+ "showCommonLabels": false,

+ "showLabels": false,

+ "showTime": false,

+ "sortOrder": "Descending",

+ "wrapLogMessage": false

+ },

+ "targets": [

+ {

+ "datasource": {

+ "type": "loki",

+ "uid": "a3fda37a-4998-4161-ae3c-4d44fc0cec38"

+ },

+ "editorMode": "builder",

+ "expr": "{namespace=\"tracee-system\"} |= `matchedPolicies` != `sshd` | json | line_format `\"{{.log}}\"`",

+ "key": "Q-1624ec1f-ad81-496f-81c4-20697b2d94f1-0",

+ "queryType": "range",

+ "refId": "A"

+ }

+ ],

+ "title": "Tracee Events",

+ "type": "logs"

+ },

+ {

+ "datasource": {

+ "type": "loki",

+ "uid": "a3fda37a-4998-4161-ae3c-4d44fc0cec38"

+ },

+ "fieldConfig": {

+ "defaults": {

+ "color": {

+ "mode": "palette-classic"

+ },

+ "custom": {

+ "axisCenteredZero": false,

+ "axisColorMode": "text",

+ "axisLabel": "",

+ "axisPlacement": "auto",

+ "barAlignment": 0,

+ "drawStyle": "line",

+ "fillOpacity": 0,

+ "gradientMode": "none",

+ "hideFrom": {

+ "legend": false,

+ "tooltip": false,

+ "viz": false

+ },

+ "lineInterpolation": "linear",

+ "lineWidth": 1,

+ "pointSize": 5,

+ "scaleDistribution": {

+ "type": "linear"

+ },

+ "showPoints": "auto",

+ "spanNulls": false,

+ "stacking": {

+ "group": "A",

+ "mode": "none"

+ },

+ "thresholdsStyle": {

+ "mode": "off"

+ }

+ },

+ "mappings": [],

+ "thresholds": {

+ "mode": "absolute",

+ "steps": [

+ {

+ "color": "green",

+ "value": null

+ },

+ {

+ "color": "red",

+ "value": 80

+ }

+ ]

+ }

+ },

+ "overrides": []

+ },

+ "gridPos": {

+ "h": 8,

+ "w": 12,

+ "x": 0,

+ "y": 8

+ },

+ "id": 2,

+ "options": {

+ "legend": {

+ "calcs": [],

+ "displayMode": "list",

+ "placement": "bottom",

+ "showLegend": true

+ },

+ "tooltip": {

+ "mode": "single",

+ "sort": "none"

+ }

+ },

+ "targets": [

+ {

+ "datasource": {

+ "type": "loki",

+ "uid": "a3fda37a-4998-4161-ae3c-4d44fc0cec38"

+ },

+ "editorMode": "builder",

+ "expr": "{app=\"tracee\"} | json | __error__=``",

+ "queryType": "range",

+ "refId": "A"

+ }

+ ],

+ "title": "Panel Title",

+ "type": "timeseries"

+ }

+ ],

+ "refresh": "",

+ "schemaVersion": 38,

+ "style": "dark",

+ "tags": [],

+ "templating": {

+ "list": []

+ },

+ "time": {

+ "from": "now-6h",

+ "to": "now"

+ },

+ "timepicker": {},

+ "timezone": "",

+ "title": "Tracee WIP",

+ "uid": "f8cc421a-74b2-4ca4-ba83-201e1955a439",

+ "version": 7,

+ "weekStart": ""

+}

\ No newline at end of file

diff --git a/current/helm-config/grafana-config.yaml b/current/helm-config/grafana-config.yaml

new file mode 100644

index 0000000..17c1ed6

--- /dev/null

+++ b/current/helm-config/grafana-config.yaml

@@ -0,0 +1,15 @@

+prometheus:

+ prometheusSpec:

+ serviceMonitorSelectorNilUsesHelmValues: false

+ serviceMonitorSelector: {}

+ serviceMonitorNamespaceSelector: {}

+

+grafana:

+ sidecar:

+ datasources:

+ defaultDatasourceEnabled: true

+ additionalDataSources:

+ # Loki monolithic mode service name (replaces loki-distributed)

+ - name: Loki

+ type: loki

+ url: http://loki.monitoring:3100

diff --git a/current/helm-config/promtail-config.yaml b/current/helm-config/promtail-config.yaml

new file mode 100644

index 0000000..a3678f9

--- /dev/null

+++ b/current/helm-config/promtail-config.yaml

@@ -0,0 +1,5 @@

+config:

+ serverPort: 8080

+ clients:

+ # Loki monolithic mode endpoint (replaces loki-distributed gateway)

+ - url: http://loki.monitoring:3100/loki/api/v1/push

diff --git a/current/image.png b/current/image.png

new file mode 100644

index 0000000..bd03a54

Binary files /dev/null and b/current/image.png differ

diff --git a/current/k8s-ansible-setup.yml b/current/k8s-ansible-setup.yml

new file mode 100644

index 0000000..38c9005

--- /dev/null

+++ b/current/k8s-ansible-setup.yml

@@ -0,0 +1,170 @@

+---

+- hosts: localhost

+ name: Setup Kubernetes cluster

+ gather_facts: false

+ tasks:

+ - name: Create namespaces

+ ansible.builtin.command: kubectl apply -f k8s-manifests/namespaces.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ - name: Create ClusterRoles

+ ansible.builtin.command: kubectl apply -f k8s-manifests/clusterroles.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ - name: Create Roles

+ ansible.builtin.command: kubectl apply -f k8s-manifests/roles.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ - name: Create Service Accounts

+ ansible.builtin.command: kubectl apply -f k8s-manifests/serviceaccounts.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ - name: Create ClusterRoleBindings

+ ansible.builtin.command: kubectl apply -f k8s-manifests/clusterrolebindings.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ - name: Create RoleBindings

+ ansible.builtin.command: kubectl apply -f k8s-manifests/rolebindings.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ - name: Create Deployments

+ ansible.builtin.command: kubectl apply -f k8s-manifests/deployments.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ - name: Create Pods

+ ansible.builtin.command: kubectl apply -f k8s-manifests/pods.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ - name: Create Services

+ ansible.builtin.command: kubectl apply -f k8s-manifests/services.yaml

+ register: kubectl_run

+ changed_when:

+ - "'created' in kubectl_run.stdout or 'configured' in kubectl_run.stdout"

+

+ # --- Prometheus + Grafana (kube-prometheus-stack) ---

+ - name: Install prometheus for kind clusters

+ ansible.builtin.command:

+ cmd: |

+ helm upgrade --install kind-prometheus prometheus-community/kube-prometheus-stack

+ --namespace monitoring

+ --set prometheus.service.nodePort=30000

+ --set prometheus.service.type=NodePort

+ --set grafana.service.nodePort=31000

+ --set grafana.service.type=NodePort

+ --set grafana.adminPassword=prom-operator

+ --set alertmanager.service.nodePort=32000

+ --set alertmanager.service.type=NodePort

+ --set prometheus-node-exporter.service.nodePort=32001

+ --set prometheus-node-exporter.service.type=NodePort

+ --values helm-config/grafana-config.yaml

+ register: helm_install

+ changed_when:

+ - "'STATUS: deployed' in helm_install.stdout"

+

+ # --- Promtail (log shipping to Loki) ---

+ - name: Install promtail

+ ansible.builtin.command:

+ cmd: |

+ helm upgrade

+ --install promtail grafana/promtail

+ --values helm-config/promtail-config.yaml

+ --namespace monitoring

+ register: helm_install

+ changed_when:

+ - "'STATUS: deployed' in helm_install.stdout"

+

+ # --- Loki (monolithic mode - replaces deprecated loki-distributed) ---

+ - name: Install loki (monolithic mode)

+ ansible.builtin.command:

+ cmd: |

+ helm upgrade

+ --install loki grafana/loki

+ --namespace monitoring