Problem: with certain real-world data (in a typical model selection workflow), model predictions end up as Inf.

This seems to be the result of high collinearity among the explanatory variables, which makes the parameter estimates unstable.

Demonstration:

library(MIAmaxent)

packageVersion("MIAmaxent")

#> [1] '1.2.0.9000'

reprexdata <- structure(list(RV = c(1, 1, 1, NA, NA, NA, NA, NA, NA, NA),

EV_L = c(0.98, 0.83, 0.94, 0.72, 0.89, 0.7, 0.67, 0.64, 0.39, 0.83),

EV_D2 = c(0, 0.03, 0, 0.07, 0.01, 0.09, 0.1, 0.13, 0.37, 0.03),

EV_M = c(0.96, 0.74, 0.9, 0.6, 0.83, 0.58, 0.54, 0.5, 0.26, 0.74)),

class = "data.frame", row.names = c("1", "2", "3", "4", "5", "6", "7", "8", "9", "10"))

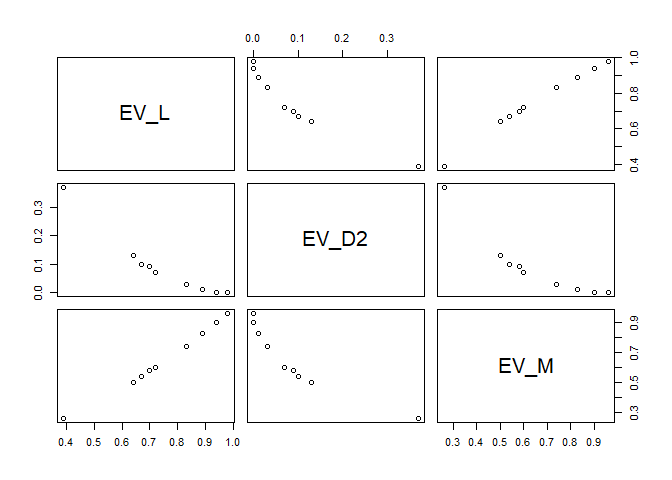

plot(reprexdata[,-1])

cor(reprexdata[,-1], reprexdata[,-1])

#>             EV_L      EV_D2       EV_M

#> EV_L   1.0000000 -0.9451377  0.9946556

#> EV_D2 -0.9451377  1.0000000 -0.9069347

#> EV_M   0.9946556 -0.9069347  1.0000000
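The pairwise correlations can also be summarised as variance inflation factors (VIFs). The following is a rough base-R sketch, not something MIAmaxent computes itself: it assumes an ordinary unweighted linear setting, whereas the model here is fitted by infinitely weighted logistic regression, so the values are only indicative. The name `evs` is introduced for illustration and simply repeats the explanatory variables from `reprexdata`.

```r
# Explanatory variables copied from reprexdata (RV omitted).
evs <- data.frame(
  EV_L  = c(0.98, 0.83, 0.94, 0.72, 0.89, 0.7, 0.67, 0.64, 0.39, 0.83),
  EV_D2 = c(0, 0.03, 0, 0.07, 0.01, 0.09, 0.1, 0.13, 0.37, 0.03),
  EV_M  = c(0.96, 0.74, 0.9, 0.6, 0.83, 0.58, 0.54, 0.5, 0.26, 0.74)
)

# VIF_j is the j-th diagonal element of the inverse correlation matrix,
# which equals 1 / (1 - R_j^2) from regressing variable j on the others.
vif <- diag(solve(cor(evs)))
vif
```

A common rule of thumb treats VIFs above roughly 10 as a sign of problematic collinearity, so values far beyond that would be consistent with the unstable coefficients reported in this issue.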

iwlr <- MIAmaxent:::.runIWLR(formula("RV ~ EV_L + EV_D2 + EV_M"), reprexdata)

coef(iwlr)

#> (Intercept)        EV_L       EV_D2        EV_M

#>  -2531.4654   7431.9418    802.6664  -4954.6279

iwlr$entropy

#> [1] 0

iwlr$alpha

#> [1] -Inf

The instability is contingent on the data in unexpected ways. Keeping only the two most highly correlated variables (EV_L and EV_M) makes the problem disappear:

iwlr <- MIAmaxent:::.runIWLR(formula("RV ~ EV_L + EV_M"), reprexdata)

coef(iwlr)

#> (Intercept)        EV_L        EV_M

#>   -262.0798    782.4296   -530.8548

iwlr$entropy

#> [1] 1.41998

iwlr$alpha

#> [1] -258.5736

This problem can appear in a real-world MIAmaxent workflow, as highly correlated derived variables are selected together given a sufficient number of background points:

set.seed(42)

longerdata <- dplyr::slice_sample(reprexdata, n = 1e4, replace = TRUE)

selection <- selectDVforEV(list(RV = longerdata$RV, EV = longerdata[,-1]))

#> Forward selection of DVs for 1 EVs

#> | | | 0% | |======================================================================| 100%

selection$selection

#> $EV

#>   round           variables m   Dsq    Chisq df        P

#> 1     1               EV_D2 1 0.099 4033.427  1 0.00e+00

#> 2     1                EV_L 1 0.097 3945.131  1 0.00e+00

#> 3     1                EV_M 1 0.094 3834.647  1 0.00e+00

#> 4     2        EV_D2 + EV_L 2 0.100   26.073  1 3.29e-07

#> 5     2        EV_D2 + EV_M 2 0.099    9.107  1 2.55e-03

#> 6     3 EV_D2 + EV_L + EV_M 3 0.140 1660.015  1 0.00e+00

I have only encountered the issue when both 'L'- and 'M'-type transformations are used. The derived variables that result from these are often highly correlated, so I expect that picking only one of these transformation types in deriveVars() will resolve the problem in most real-world cases.
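As a complementary check, derived variables could be screened for near-duplicate pairs before selection. The sketch below uses base R only; `flag_collinear_pairs()` and the 0.99 threshold are hypothetical choices made for illustration and are not part of MIAmaxent. The data frame `dvs` repeats the explanatory variables from `reprexdata`.

```r
# Derived variables copied from reprexdata (RV omitted).
dvs <- data.frame(
  EV_L  = c(0.98, 0.83, 0.94, 0.72, 0.89, 0.7, 0.67, 0.64, 0.39, 0.83),
  EV_D2 = c(0, 0.03, 0, 0.07, 0.01, 0.09, 0.1, 0.13, 0.37, 0.03),
  EV_M  = c(0.96, 0.74, 0.9, 0.6, 0.83, 0.58, 0.54, 0.5, 0.26, 0.74)
)

# Hypothetical helper (not a MIAmaxent function): list variable pairs whose
# absolute Pearson correlation exceeds `threshold`.
flag_collinear_pairs <- function(dvs, threshold = 0.99) {
  r <- cor(dvs)
  # Look only above the diagonal so each pair is reported once.
  idx <- which(upper.tri(r) & abs(r) > threshold, arr.ind = TRUE)
  data.frame(
    var1 = rownames(r)[idx[, "row"]],
    var2 = colnames(r)[idx[, "col"]],
    r = r[idx],
    stringsAsFactors = FALSE
  )
}

flagged <- flag_collinear_pairs(dvs, threshold = 0.99)
flagged
```

With the correlations reported in this issue, only the EV_L and EV_M pair exceeds 0.99, which matches the observation that the 'L' and 'M' transformations are the ones that clash. A flagged pair is a prompt to drop one of the two variables, or one transformation type, before running selection.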

Created on 2022-11-22 by the reprex package (v2.0.1)
