Welcome to this repo! Here I have used Google's MediaPipe Hands to build a hand-tracking game controller that turns the location of your hand in the camera frame into the controls up, down, left, and right. And it all runs on the CPU!

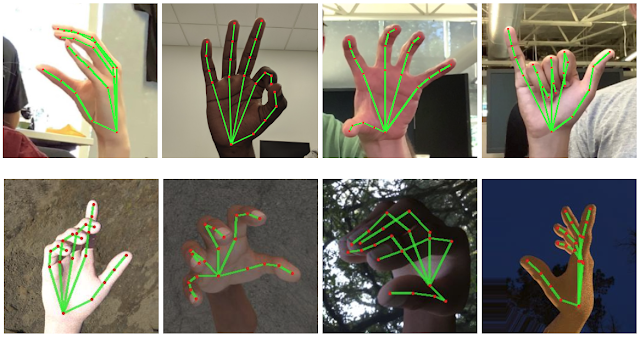

Traditionally, to train your own hand-tracking model you would need as many samples of hands as you can get (with a variety of backgrounds, skin tones, lighting, etc.) to push through your ML pipeline, along with plenty of time and GPU power.

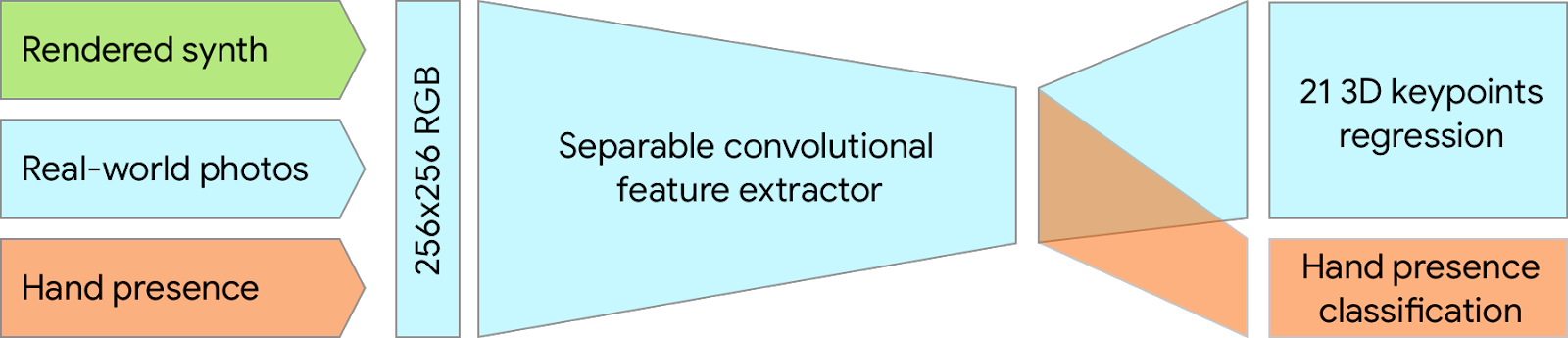

However, MediaPipe has done the heavy lifting here, which allows this game controller to run on-device and in real time. Essentially, it uses this architecture to place 21 3D hand landmarks very accurately (average precision of 95.7% for palm detection).

Read a far more detailed description here: https://www.arxiv-vanity.com/papers/2006.10214/

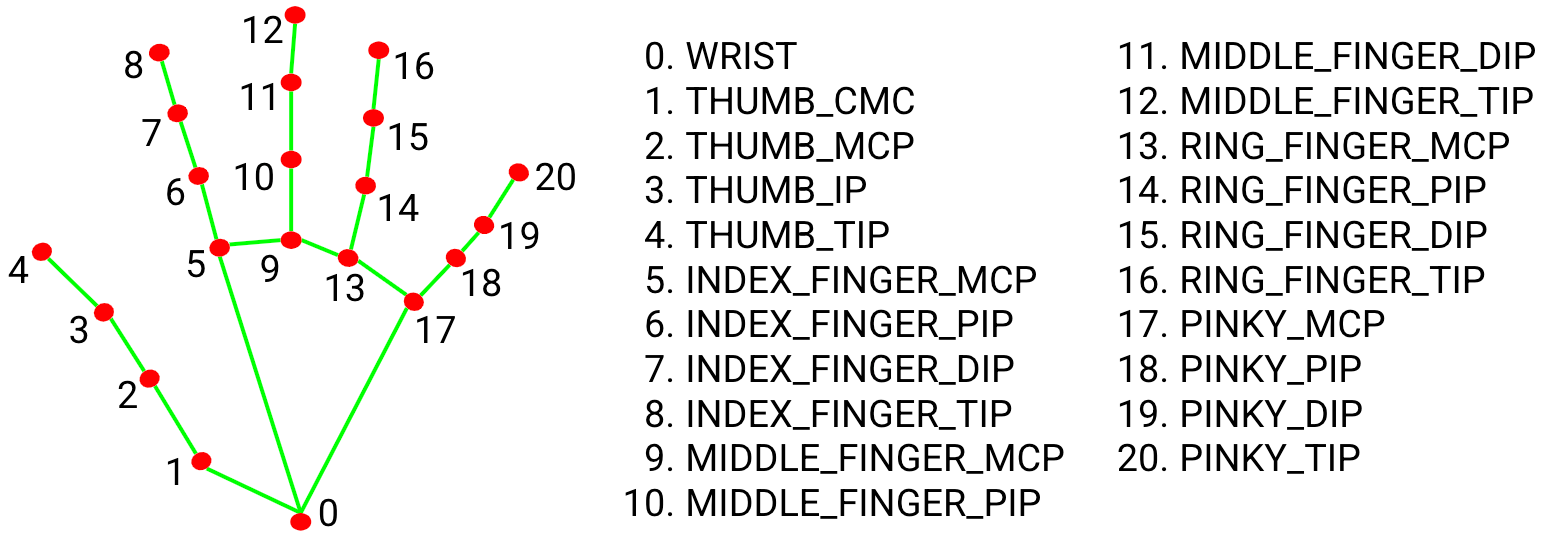

Each of the 21 3D landmarks (numbered 0 to 20) outputs its coordinates in relation to landmark 0 at about 13 FPS once a hand is detected.

My goal was to use the position of the coordinates on the 2D plane they lie on, rather than the distance between one coordinate and another (the typical use for MediaPipe Hands, which enables projects like sign language interpretation).
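To make the difference concrete, here is a minimal sketch (not the code from this repo) of the two ways to use a landmark. The function names, the sample points, and the 1280x720 default frame size are assumptions for illustration. Landmarks are taken as normalized `(x, y)` pairs between 0 and 1.

```python
import math


def landmark_distance(a, b):
    """Distance between two normalized (x, y) landmarks.

    This is the shape-based view, useful for gestures and sign language.
    """
    return math.hypot(a[0] - b[0], a[1] - b[1])


def to_pixels(point, frame_width=1280, frame_height=720):
    """Position of one normalized (x, y) landmark on the camera frame, in pixels.

    This is the position-based view used for the controller.
    The default frame size is an assumption; pass your own camera resolution.
    """
    return (int(round(point[0] * frame_width)),
            int(round(point[1] * frame_height)))


# Hypothetical sample values, not real model output.
wrist = (0.5, 0.25)
fingertip = (0.8, 0.65)
spread = landmark_distance(wrist, fingertip)  # describes the hand's shape
location = to_pixels(wrist)                   # describes where the hand is
```

The distance stays the same wherever the hand is in the frame, while the pixel location changes as the hand moves, which is what a direction controller needs.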

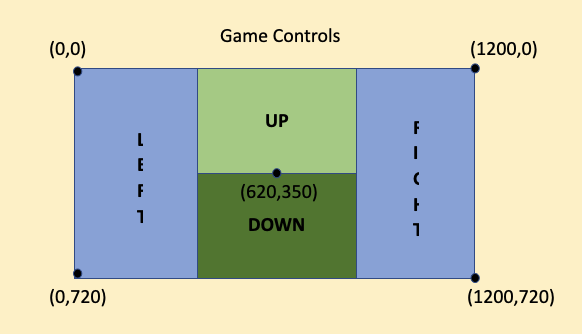

Instead of using all 21 landmarks, I only took landmark 0 (at the base of the hand). This module tracks the base of the hand in relation to the user's camera frame, on a plane of about 1200x720, to produce the controls: UP, DOWN, LEFT, RIGHT.
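One way to turn that single point into a control is sketched below. This is an illustration under stated assumptions, not necessarily how this repo does it: the function name `direction_from_wrist`, the centered dead zone, and its size of 0.15 are all hypothetical. It assumes normalized coordinates with the origin at the top-left of the frame and y growing downward. If your camera image is mirrored, LEFT and RIGHT may need to be swapped.

```python
def direction_from_wrist(x, y, dead_zone=0.15):
    """Map a normalized wrist position (0..1, origin top-left) to a control.

    Returns "UP", "DOWN", "LEFT", "RIGHT", or None when the wrist is
    inside the neutral zone around the center of the frame.
    """
    dx = x - 0.5  # positive means right of center
    dy = y - 0.5  # positive means below center (image y grows downward)

    # Ignore small movements near the center (dead_zone is an assumed value).
    if abs(dx) < dead_zone and abs(dy) < dead_zone:
        return None

    # Pick the axis with the larger offset from the center.
    if abs(dx) >= abs(dy):
        return "RIGHT" if dx > 0 else "LEFT"
    return "DOWN" if dy > 0 else "UP"
```

For example, a wrist near the top of the frame, such as `direction_from_wrist(0.5, 0.1)`, maps to `"UP"`, and a wrist in the middle of the frame maps to no control at all.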

Watch me demo it here: https://youtu.be/upNaenRRoZs

Hints:

- Keep maxNumHands = 1 to avoid confusion (you can still switch between hands while it is running)

- SCOOT BACK from your camera for more accuracy

- Remember that the base of your palm is what is being tracked (landmark 0)
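The hints above can be sketched as a small capture loop. This is a rough sketch, assuming the legacy MediaPipe Python Solutions API (where the option is spelled `max_num_hands`) and OpenCV for the webcam; `wrist_position` and `run` are hypothetical names, not functions from this repo. The imports sit inside `run` so the helper can be used without a camera.

```python
WRIST = 0  # landmark 0, the base of the palm


def wrist_position(results):
    """Return (x, y) of landmark 0 for the first detected hand, or None."""
    hands = getattr(results, "multi_hand_landmarks", None)
    if not hands:
        return None
    wrist = hands[0].landmark[WRIST]
    return wrist.x, wrist.y


def run(camera_index=0):
    """Print the wrist position for each webcam frame until 'q' is pressed."""
    import cv2
    import mediapipe as mp

    capture = cv2.VideoCapture(camera_index)
    # Track a single hand only, as suggested in the hints.
    with mp.solutions.hands.Hands(max_num_hands=1) as hands:
        while capture.isOpened():
            ok, frame = capture.read()
            if not ok:
                break
            # MediaPipe expects RGB; OpenCV delivers BGR.
            results = hands.process(cv2.cvtColor(frame, cv2.COLOR_BGR2RGB))
            print(wrist_position(results))
            cv2.imshow("hand tracking", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    capture.release()
    cv2.destroyAllWindows()
```

Calling `run()` opens the default camera. When no hand is in view it prints `None`, which is a good moment to scoot back.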